OS X Lion Awakens: Can Akamai handle downloads for Apple's Cloud?

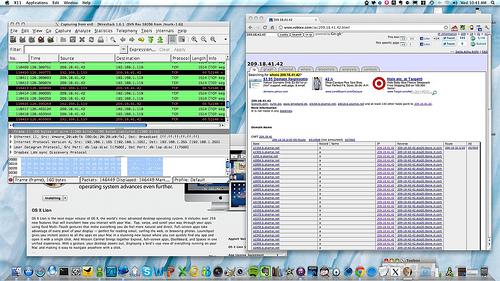

Akamai's servers and Apple's datacenters are being hammered by OS X Lion downloads. But going forward, is Akamai the right content distribution network for Apple?

This morning, I awoke to the news that OS X Lion had been released and was available on the Mac App Store. Without even my first cup of coffee in hand, I clicked on the "Download" button.

What did I get? "This action cannot be completed at this time."

Over the next hour and a half, from 8am to 9:30am, I clicked, and I clicked, and I clicked. Same message. No Lion. Get in line.

I had wondered how Apple was going to handle large downloads from their App Store infrastructure on launch day. Were they going to serve everything centrally, from their new billion-dollar datacenter in North Carolina, or would they use a content distribution network? And if they did use a CDN, were they going to build their own, or outsource it?

Well, I found out pretty quickly. They outsourced it, to Akamai. The header graphic in this article is the proof in the pudding.

Once the transaction finally kicked off, the download itself from Akamai went pretty quickly. On my Optimum Online Ultra connection here in Northern NJ, I was able to download the DMG installer in about half an hour.

Now, Akamai's system architecture is a known quantity. We've covered this before, when we talked about how the 2008 Olympics live video feeds from MSN were served by one of Akamai's competitors, Limelight.

The two then duked it out on the blog over whose content distribution methodology is better.

Unlike Akamai, which uses DNS and datacenter replication as the foundation for its systems architecture, Limelight caches content from its globally replicated datacenters at the edge of ISP networks, which it reaches over dedicated fiber connections. The "hop" to your download is therefore extremely short, which cuts latency and avoids potential throttling bottlenecks. In some cases, the content is actually co-located at the ISP.

Effectively, with Limelight, you aren't even going over the public Internet to do a download. You're using a private network.

Akamai, which is also used by companies such as Microsoft to deliver large downloads for services such as MSDN, uses front-end load balancers that, through the magic of DNS CNAMEs and A records, point to a single IP.

Akamai's IP load balancing algorithm then redirects your download to a datacenter that is supposed to be the fastest connection for you.
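To make that flow a little more concrete, here is a toy Python sketch of the two steps as I've described them: follow a chain of CNAME aliases down to a single A record, then pick the datacenter with the lowest latency for the client. This is not Akamai's actual implementation. Every hostname, IP address, region, and latency figure below is made up for illustration.

```python
# Toy model only: all hostnames, IPs, regions, and latencies are hypothetical.
CNAME_RECORDS = {
    "downloads.example.com": "downloads.example.com.cdn.example.net",
    "downloads.example.com.cdn.example.net": "lb.cdn.example.net",
}
A_RECORDS = {
    "lb.cdn.example.net": "192.0.2.10",  # the single front-end IP
}

# Assumed round-trip times in milliseconds, per client region and datacenter.
LATENCY_MS = {
    "us-east": {"newark": 12, "chicago": 31, "london": 85},
    "eu-west": {"newark": 80, "chicago": 105, "london": 9},
}


def resolve(hostname, max_depth=8):
    """Follow CNAME aliases until an A record is found.

    Returns the list of names visited and the final IP address.
    """
    chain = [hostname]
    for _ in range(max_depth):
        if hostname in A_RECORDS:
            return chain, A_RECORDS[hostname]
        if hostname not in CNAME_RECORDS:
            raise LookupError(f"no record for {hostname}")
        hostname = CNAME_RECORDS[hostname]
        chain.append(hostname)
    raise LookupError("CNAME chain too long")


def pick_datacenter(client_region):
    """Return the datacenter with the lowest assumed latency for a region."""
    latencies = LATENCY_MS[client_region]
    return min(latencies, key=latencies.get)


if __name__ == "__main__":
    chain, ip = resolve("downloads.example.com")
    print(" -> ".join(chain), "=>", ip)
    print("us-east client is sent to:", pick_datacenter("us-east"))
```

The point of the sketch is that everything hinges on that first lookup and redirect. If either step fails, the client never reaches a datacenter at all, no matter how fast that datacenter is.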


When the redirect occurs, the stuff is supposed to just work. But if it breaks at the redirect, or the transaction that kicks it off never occurs, all that DNS magic isn't particularly useful.

I suspect that this morning, when I couldn't get the App Store transaction to kick off for about an hour and a half, there were probably too many sessions hitting the Akamai load balancers at once, so there was no "slot" available. It either broke at the Apple datacenter side, or at the Akamai load-balancing side. Any way you want to slice it, the system got overwhelmed.
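My "no slot available" theory is only a guess, but the failure mode itself is easy to sketch. The Python below is a minimal, hypothetical model of a front end with a fixed number of session slots: once they are all taken, every further request is turned away until someone finishes. The `SlotPool` class and the numbers used are my own illustration, not anything Apple or Akamai has published.

```python
class SlotPool:
    """Hypothetical front end with a fixed number of concurrent session slots."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.in_use = 0

    def try_acquire(self):
        """Claim a slot if one is free; return False when the pool is full."""
        if self.in_use >= self.capacity:
            return False  # the "cannot be completed at this time" case
        self.in_use += 1
        return True

    def release(self):
        """Free a slot when a session finishes."""
        if self.in_use > 0:
            self.in_use -= 1


def simulate(capacity, requests):
    """Send a burst of requests with no sessions finishing in between.

    Returns a tuple of (accepted, rejected) counts.
    """
    pool = SlotPool(capacity)
    accepted = sum(1 for _ in range(requests) if pool.try_acquire())
    return accepted, requests - accepted


if __name__ == "__main__":
    accepted, rejected = simulate(capacity=3, requests=10)
    print(f"accepted={accepted} rejected={rejected}")
```

With a launch-day burst far larger than the slot count, nearly everyone gets rejected and has to keep clicking, which is exactly what it felt like from my side of the screen.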

Clearly, Apple is going to become very dependent on cloud services in the future, as iCloud is launched with iOS 5 and a huge amount of content starts to get synchronized across millions and millions of devices.

Is a distributed, front-end IP load-balanced methodology such as Akamai's the best way to approach and to scale this, or is an "edge network" ISP-peering type architecture such as Limelight's more appropriate?

Back in October of 2010 I wrote about how Apple might spend its $50 billion in cash. One of those suggestions was to buy or build out a CDN.

Given the heavy load which I expect Apple's App Store and iTunes Service to take over the coming months and years as Cloud becomes the prevalent form of content distribution for the company, I think Apple needs to very seriously look at how data should be distributed to the customer.

Personally, I think the ISP peering "Short hop" type of infrastructure with some intelligence occurring at the device level is probably a better systems architecture than Akamai's, but not all of these issues can be solved at the CDN itself.

All I know is something broke this morning -- either Apple's $1B datacenter and transaction processing back-end wasn't up to handling the sheer volume of requests, or Akamai's front-end choked.

Neither of which is going to fly when iCloud actually goes online, which is going to make Lion downloads look like child's play.

Are Apple's datacenters and Akamai really up to the task of delivering iCloud? Talk Back and Let Me Know.