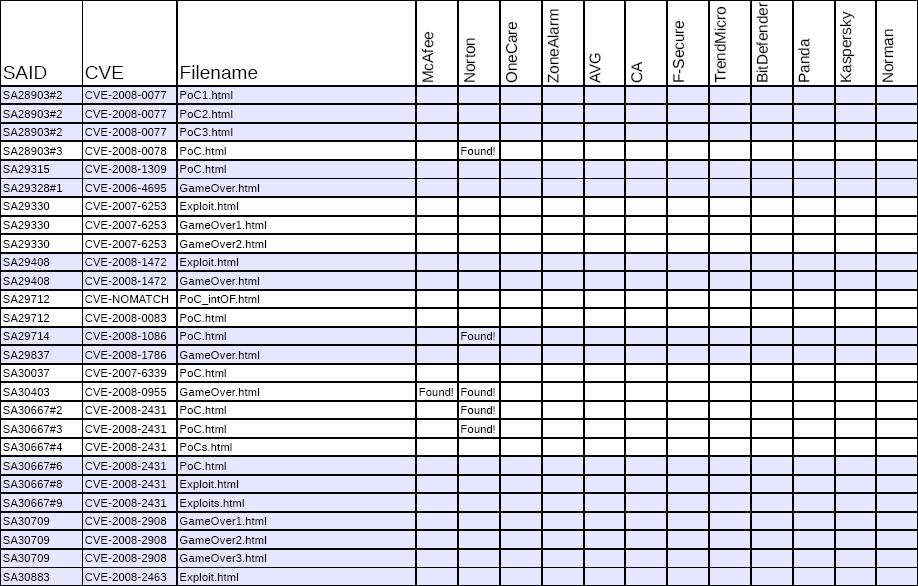

Secunia: popular security suites failing to block exploits

"These results clearly show that the major security vendors do not focus on vulnerabilities. Instead, they have a much more traditional approach, which leaves their customers exposed to new malware exploiting vulnerabilities.

While we did expect a fairly poor performance in this field, we were quite surprised to learn that this area is more or less completely ignored by most security vendors. Some of the vendors have taken other measures to try to combat this problem. One is Kaspersky who has implemented a feature very similar to the Secunia PSI, which can scan a computer for installed programs and notify the user about missing security updates. BitDefender also offers a similar system, albeit this is more limited in scope than the one offered by Kaspersky and Secunia. We do, however, still consider it to be the responsibility of the security vendors to be able to identify threats exploiting vulnerabilities, since this is the only way the end user can learn about where, when, and how they are attacked when surfing the Internet."

And while scrolling through the study's empty tables gets boring, is Secunia's report a frontal attack on the security software vendors' inability to block exploits, or an attempt to emphasize that end users should make better-informed purchasing decisions when relying on all-in-one security products?

In 2007, Secunia released data indicating that 28% of all installed apps are insecure. Even though the vulnerabilities had already been addressed, end users were still living in a reactive-response world. Cybercriminals, on the other hand, took notice. Following either common sense or publicly obtainable data indicating that end users remain susceptible to already patched vulnerabilities, they started integrating outdated exploits into what would become one of the main growth factors for web malware: today's ubiquitous web malware exploitation kits.

What is more important: detecting the latest malware binary behind the exploit-serving file, or preventing that binary from reaching the end user or company by blocking the relatively static exploit-serving file? It's all a matter of perspective.

Naturally, the comparative review and the methodology used are already drawing criticism from the vendors. Sunbelt Software's Alex Eckelberry comments on the report, and also includes the opinion of AV-Test.org's Andreas Marx, who emphasizes why it's important to prioritize:

"In most cases, it is simply not practical to scan all data files for possible exploits, as it would slow-down the scan speed dramatically. Instead of this, most companies focus on some widely used file-based exploits (like the ANI exploits) and some companies also remove the detection of such exploits after some time has passed by (as most users should have patched their systems in the meantime and in order to avoid more slow-downs). There are a lot more practical solutions built-in to security suites, like the URL filter (which checks and blocks known URLs which are hosting malware or phishing websites) and the exploit filter in the browser (which would also block access to many "bad" websites). Some tools also have virtualization and buffer/stack/heap overflow protection mechanisms included, too.

Then we have the traditional "scanner" -- and even if some exploit code gets executed, a HIPS, IDS or personal firewall system might be able to block the attack. For example, some security suites are knowing that Word, Excel or WinAmp won't write EXE files to disk -- so potentially dropped malware cannot get executed and the system is left in a "good" state."
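To make the last point in the quote concrete, here is a minimal sketch of that kind of behavior rule: a document or media application trying to write an executable to disk gets blocked. The process list, the extension list and the `should_block_write` helper are all hypothetical and chosen for illustration; this is not how any particular security suite implements it.

```python
from pathlib import PureWindowsPath

# Hypothetical policy: applications that handle documents or media
# and normally have no reason to drop executables on disk.
DOCUMENT_APPS = {"winword.exe", "excel.exe", "winamp.exe"}

# Hypothetical list of file extensions treated as executable content.
EXECUTABLE_EXTENSIONS = {".exe", ".dll", ".scr", ".com"}


def should_block_write(process_name: str, target_path: str) -> bool:
    """Return True when a document-handling app tries to write an executable."""
    process = process_name.lower()
    extension = PureWindowsPath(target_path).suffix.lower()
    return process in DOCUMENT_APPS and extension in EXECUTABLE_EXTENSIONS
```

A rule like this says nothing about which exploit was used, which is the whole point: even if the exploit itself goes undetected, the dropped binary never lands on disk.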

Emphasizing defense-in-depth, and prioritizing the most popular exploits for blocking, is a very good point, since it has the potential to protect as many customers as possible from the default set of exploits used in the majority of malware attacks. For instance, the massive SQL injection attacks that took place during the last couple of months all relied on a relatively static JavaScript file, whose generic detection is a good example of prioritizing. Moreover, given the evident template-ization of malware-serving sites and the commoditization of web malware exploitation kits, ensuring that your customers are protected from the default sets of exploits included in these kits means protecting them from a huge percentage of web-based malware attacks.
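As a rough illustration of what generic detection of a relatively static script could look like, the sketch below normalizes whitespace and matches the script against a pattern instead of an exact file hash. The signature name and the regular expression are hypothetical, made up for this example; they are not taken from any vendor's signature set or from the actual scripts used in the attacks.

```python
import re
from typing import List

# Hypothetical generic signature, for illustration only: a script that
# writes an iframe with a zero width or height into the page.
GENERIC_SIGNATURES = {
    "hidden-iframe-writer": re.compile(
        r"document\.write\(.{0,40}<iframe[^>]*(width|height)=[\"']?0",
        re.IGNORECASE,
    ),
}


def normalize(script: str) -> str:
    """Collapse whitespace runs so trivial reformatting does not evade a match."""
    return re.sub(r"\s+", " ", script).strip()


def match_signatures(script: str) -> List[str]:
    """Return the names of all generic signatures that match the script."""
    text = normalize(script)
    return [name for name, pattern in GENERIC_SIGNATURES.items() if pattern.search(text)]
```

Matching on a normalized pattern rather than a hash means that a kit's template can be re-hosted or lightly reformatted and still be caught, which is what makes prioritizing the default templates pay off.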

No Internet Security Suite can protect you from yourself, so do yourself and the Internet a favor - patch all your insecure applications - it's free.