Security considerations for brands using Twitter

There's been a lot of chatter about social media and security issues, from social engineering to the naivete of users. Twitter in particular has drawn attention, especially after last week's account hijackings. Much of this talk has focused on how individuals might better secure themselves. What about brands? And, more than that, how do brands take more responsibility for social media security to protect their users? Because like it or not, some of the onus is on them. Here are two examples.

URL Redirection

I've written in the past about how some social networks handle URL redirection better than others, but URL redirection is still a security consideration. Both consumer and business users are constantly hammered, either by IT departments or by security-savvy friends, never to click on untrusted or unrecognized URLs. Yet we still do it. Even the many Security Twits on Twitter have been known to shorten URLs for their blogs or other interesting info they want to share. My company even got called on it for promoting a blog post about malicious Web addresses using a redirected URL. The problem here? Most of the time the URL redirection is done against our will.

Twitter has a policy (which is enforced through TweetDeck and other third-party Twitter apps) that no matter how many characters a user has available, if a URL is greater than 40 characters, the service will automatically turn it into a TinyURL.
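For brands that want to know ahead of time which links would be rewritten, here is a minimal sketch in Python. It assumes the 40-character cutoff described above; the names `AUTO_SHORTEN_LIMIT`, `will_be_shortened` and `audit_links` are mine, for illustration only, and are not part of any Twitter API.

```python
# Assumption: the 40-character cutoff quoted in this post. Adjust if the
# service's actual behavior differs.
AUTO_SHORTEN_LIMIT = 40


def will_be_shortened(url: str, limit: int = AUTO_SHORTEN_LIMIT) -> bool:
    """Return True if the URL is longer than the limit and would be rewritten."""
    return len(url) > limit


def audit_links(urls):
    """Split candidate links into (kept as-is, would be shortened)."""
    kept, shortened = [], []
    for url in urls:
        if will_be_shortened(url):
            shortened.append(url)
        else:
            kept.append(url)
    return kept, shortened
```

Running `audit_links` over a batch of planned posts would at least tell a brand which of its links customers will see only in redirected form.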

Brands have a choice, of course. They can choose not to post URLs via Twitter. Or they can try to control the length of the URL they originally post (I did that with a blog post last week), but that's not exactly great for SEO. Nor can brands control the URLs of items they want to promote from media and other third-party sites. They can also encourage users who run Firefox to install Greasemonkey scripts that preview redirected URLs, but that's tough to do. If they do choose to use redirected URLs on Twitter, they have to consider that they are asking for a great deal of trust from their customers. And if for some reason their account gets compromised and a malicious URL is posted, their customers may not trust them moving forward. They also need to be aware of the security risks:

"The negative part of this 'shortification' comes from the obscuring the visibility to the text of the URL before it gets sent to your browser -- it's a possible injection vector for direct browser URL exploits, of which there have been lots of varieties, and a way to send them to people without having the URL be inspected or visible. Or possibly just a way to send people to sketchy domains with worse hosted documents," said Dragos Ruiu, organizer of the CanSecWest security conference.

Brands have another option: rather than relying on Twitter to automatically shorten URLs with TinyURL, they can use Bit.ly instead. It's slightly more secure, as Bit.ly is one of the few redirectors that checks Google's malware database before shortening. But should Twitter get rid of this feature in the first place and let brands decide whether a URL is shortened or not?

"My feeling is that Twitter should let users turn off this 'feature' if they desire, including all the auto-url-munging," Ruiu said.

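A brand that wants to review which links in its own feed hide their destination behind a redirector could start with something like the sketch below. The host list contains only the two services named in this post and is nowhere near complete; `KNOWN_SHORTENERS`, `is_shortened` and `links_needing_review` are hypothetical names of my own.

```python
from urllib.parse import urlparse

# Illustrative list only: the two redirectors mentioned in this post.
KNOWN_SHORTENERS = {"tinyurl.com", "bit.ly"}


def is_shortened(url: str) -> bool:
    """Return True if the URL's host is one of the known redirectors.

    URLs without a scheme (e.g. "bit.ly/abc") have no parsed hostname
    and are reported as not shortened.
    """
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return host in KNOWN_SHORTENERS


def links_needing_review(urls):
    """Return only the links whose real destination is hidden."""
    return [url for url in urls if is_shortened(url)]
```

This only flags the links; it does not follow the redirect or judge where it lands, so a person (or a separate tool) still has to inspect the destination.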

Twishing and Brand Monitoring

What does one have to do with the other? A lot. We saw examples of "twishing," or "Twitter phishing," back in January, when fake "direct message" notifications went out to some users, directed them to a fake Twitter-type URL and stole their credentials. It was the first time this kind of scam happened on such a wide scale, and it shook people up. However, as security expert Damon Cortesi wrote in a guest post for this blog, this is just the tip of the iceberg.

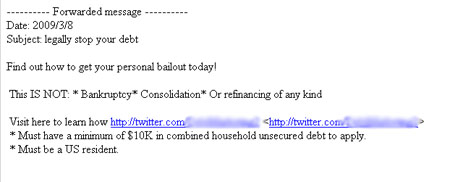

How does this apply to brands? Because spammers have gotten wind of the popularity of Twitter and are constantly figuring out new and creative ways to leverage it. Case in point, security consultant Mike Murray forwarded the following email to me the other day. It's the first time that either of us had seen a spammer direct users to a legitimate Twitter feed:

While I've blurred out the specific feed, it did direct users to a living, breathing Twitter feed. Fortunately, Twitter quickly disabled the profile, stating that it was suspended due to "strange activity." Because of that, we have no way of knowing for certain what type of scams the feed contained, though I imagine it linked off site to a phishing URL that requested personal details from people wanting to "manage their debt."

I know, how does this apply to brands? Stick with me. There's a huge list of brands on Twitter -- even some in the financial sector. What if, for instance, a spammer tried to gain users' trust by pairing a malicious URL with a legitimate Twitter feed for verification (i.e. the user checks out the brand's Twitter feed, sees that it is legit, then clicks through to the scam Web site)? Or what if a scammer decides to play off a legitimate brand's Twitter URL and create a deceptive offshoot (i.e. twitter.com/Bank of America = twitter.com/|3ank of America), as is traditionally done in spam emails?

Twitter has been pretty good at quickly spotting and eliminating these types of accounts based on suspicious activity, but the infrastructure is not set up in such a way that it can proactively monitor for these special cases of "brandjacking." That's up to the brand. With spam emails, brands have little control over what is sent out. They can ask users at large to report offenders and put up security measures on their own sites. But with parked sites -- Twitter, Facebook, FriendFeed, etc. -- brands have more chances to monitor how their names are being used. With scammers and spammers becoming increasingly clever with social networks, brand and community managers need to do the same. If you manage a brand that has already been widely bastardized by spammers, make sure you are searching social networks for these variations of your brand name or product names. If you think you might be at risk for such scams, come up with creative variations of your own and track them.
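Coming up with those variations by hand gets tedious, so here is a rough sketch of how a brand manager might generate a watch list. The substitution table is my own small, hypothetical sample of the character swaps spammers tend to use (like the "|3" for "B" above); it is not exhaustive, and `LOOKALIKES` and `brand_variants` are names invented for this example.

```python
from itertools import product

# Hypothetical sample of look-alike swaps; extend it with the tricks
# you actually see in spam aimed at your brand.
LOOKALIKES = {
    "a": ["a", "4", "@"],
    "b": ["b", "|3", "8"],
    "e": ["e", "3"],
    "i": ["i", "1", "l"],
    "l": ["l", "1"],
    "o": ["o", "0"],
    "s": ["s", "5"],
}


def brand_variants(name: str, max_variants: int = 200):
    """Return look-alike spellings of `name`, excluding the original.

    Output is lowercased for letters in the table; `max_variants` caps
    the list because combinations grow quickly for long names.
    """
    choices = [LOOKALIKES.get(ch.lower(), [ch]) for ch in name]
    variants = []
    for combo in product(*choices):
        candidate = "".join(combo)
        if candidate.lower() != name.lower():
            variants.append(candidate)
        if len(variants) >= max_variants:
            break
    return variants
```

For example, `brand_variants("Bank")` includes "|3ank" and "b4nk". The resulting list is meant to feed searches on each network, not to prove that any matching account is malicious.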

The imminent danger on both counts -- URL redirection and twishing -- is not necessarily to the brands. It's to their customers and their users. And in this age of "trust" and "transparency" and "accessibility" and other buzzwords, it's even more critical that brands manage their presence, and variations of it, and make a reporting structure for security issues part of their social media policies. Once you violate that customer trust -- inadvertently or not -- it's darn near impossible to get it back.

Some of the responsibility does lie with Twitter and other social networks, of course, as reactive security measures aren't the best measures. In a conversation on this topic yesterday, one of my colleagues, Guillaume Lovet, senior manager for Fortinet's FortiGuard Global Security Research Team, summed this thought up nicely (disclosure: Fortinet is my employer).

"A phishing operation does not require a 'long-term' brandjacking plan (should it be a Twitter account or a DNS domain) so the challenge is not so much to close these accounts upon receiving complaints, but rather to prevent their creation from starters," Lovet said.

If you've run into any other security / brand clashes let me know via the form below or in the TalkBacks.