To Catch a Fake: Machine learning sniffs out its own machine-written propaganda

The "era of neural disinformation" is upon us, a future in which machines can generate fake news articles in enormous volume that humans will get suckered into believing are real.

The only hope for society lies with ... another machine.

That's the contention of a new bit of work from researchers at the Allen Institute for Artificial Intelligence, who have developed a natural language processing system affectionately dubbed "Grover." Grover can generate news-style articles that are fake but that fool human readers. But Grover can also do a very good job of detecting that such articles are fake.

It's an "arms race," contend lead author Rowan Zellers and his colleagues at the Allen Institute and the Paul Allen School of Computer Science and Engineering at the University of Washington. It's like a battle of the bots, one malicious, one good, or perhaps like an old Western, where the former outlaw goes after the young train robber.

In reality, it's a fascinating meditation on what's happening with the most cutting-edge A.I. for language understanding, things such as OpenAI's "GPT-2."

The authors' elegantly simple observation is that machines that generate fake text leave a trace or signature in the way they predict word combinations — what Zellers and the team call "artifacts." A neural network that is constructed in the same way as the network that makes the fake text automatically spots those idiosyncratic artifacts. It takes one to know one, in a sense.

Grover is able to sample the likely combinations of words so well that it fools humans and also other neural networks, but it leaves traces that can be detected by another Grover acting to unmask it.

"Countering the spread of disinformation online presents an urgent technical and political issue," the authors write, given the increasing power of things like GPT-2. There is, happily, an "exciting opportunity for defense against neural fake news," they argue: "The best models for generating neural disinformation are also the best models at detecting it."

The research paper, "Defending Against Neural Fake News," is posted on the arXiv pre-print server and is authored by Zellers, Ari Holtzman, Hannah Rashkin, Yonatan Bisk, Ali Farhadi, Franziska Roesner, and Yejin Choi, with Farhadi and Choi having dual status at both the Allen Institute and the Paul Allen School. (There's also a Web page discussion you can check out.)

Grover is another in a line of hilarious Sesame Street neural network names, including "Bert," from Google, and "ELMo" from the Allen Institute. Grover is based on OpenAI's GPT-2, which itself was adapted from Google's "Transformer" neural network, which has become one of the most popular neural networks for language processing as well as for other tasks.

In prior research, Zellers and colleagues showed that a powerful neural network can be adversarial in its production of text, fooling some other neural networks by generating text that seems like it's written by humans.

Here, the symmetry is taken even further: Grover is both the thing generating "fake news," called the "generator," and the thing that detects the fake news produced by the generator, called the "discriminator."

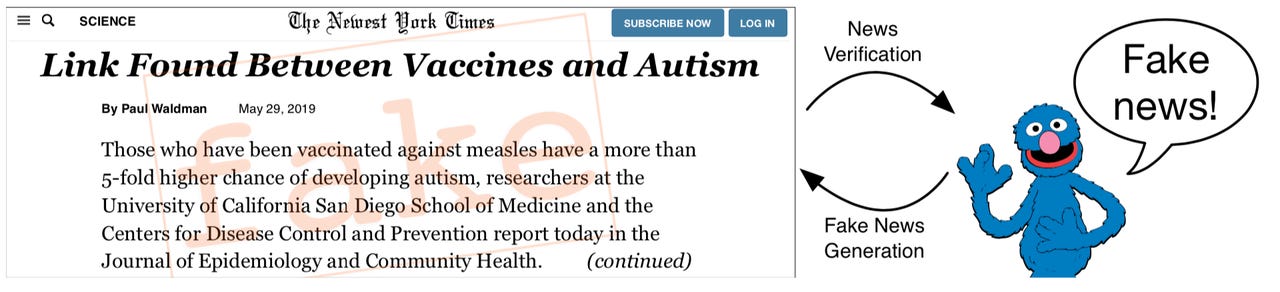

Grover, like GPT-2, can generate some impressive fake news, with headlines such as "Link Found Between Vaccines and Autism." It does so after being trained on a vast amount of human-written news texts from the Web, culled by the researchers, which they humorously christen "RealNews." The news articles produce a model of language in the neural network that it then uses to generate the texts.

Tests with people on Amazon's crowd-sourcing platform, Mechanical Turk, resulted in humans rating such examples from Grover as "more plausible" than examples actually written by humans.

An example of Grover propaganda beating human-written propaganda, as judged by the misguided assessment of human reviewers.

But the second Grover, the discriminator, knows the difference, and it knows it better than other neural networks such as Bert and the original GPT-2. Why is that? The secret is in how the first Grover, the generator, assembles sentences once it's developed its model of language. The part of the generator that picks out words to assemble, called the "decoder," has a particular pattern of choosing word combinations that are most likely.

Grover the discriminator responds to that telltale pattern of word combinations. In effect, it sees the architecture of how Grover is assembling fake text. More specifically, Grover the discriminator sees that the probable word combinations leave out a vast terrain of less common words, known as the "tail" of the vocabulary. Natural human language dips into that tail of uncommon words more than Grover the generator does, making Grover's fake texts more predictable to Grover the discriminator.

"Our analysis suggests that Grover might be the best at catching Grover because it is the best at knowing where the tail is, and thus whether it was truncated," the authors write.
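To make the "truncated tail" idea concrete, here is a toy sketch in Python. It is not the Grover discriminator, which is a trained neural network; it only illustrates the intuition. The vocabulary, its probabilities, the cutoff `p`, and the function names `tail_tokens` and `tail_rate` are all invented for illustration, and the sketch uses a single fixed word distribution where a real language model would compute a new one for every context.

```python
# Toy illustration only: a made-up word distribution, not Grover's model.
TOY_PROBS = {"the": 0.5, "a": 0.3, "this": 0.15, "yon": 0.04, "thilke": 0.01}


def tail_tokens(probs, p=0.9):
    """Return the words left over once the most likely words have
    accumulated at least probability p (the hypothetical cutoff)."""
    ranked = sorted(probs, key=probs.get, reverse=True)
    kept, total = set(), 0.0
    for token in ranked:
        kept.add(token)
        total += probs[token]
        if total >= p:
            break
    return set(probs) - kept


def tail_rate(tokens, probs, p=0.9):
    """Fraction of tokens in a text that come from the tail."""
    if not tokens:
        return 0.0
    tail = tail_tokens(probs, p)
    return sum(token in tail for token in tokens) / len(tokens)


# A text whose words never leave the high-probability set has no tail words;
# a text that dips into rare words does.
truncated_text = ["the", "a", "this", "the"]
natural_text = ["the", "yon", "a", "this", "thilke"]
print(tail_rate(truncated_text, TOY_PROBS), tail_rate(natural_text, TOY_PROBS))
```

The point of the sketch is that a detector needs to know where the generator's cutoff falls in order to notice that nothing beyond it ever appears, which is the advantage the authors attribute to a model built the same way as the generator.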

Technically, the decoder in Grover is based on a decoding method developed by Holtzman and Choi, along with Jan Buys and Maxwell Forbes of the Paul Allen School. That approach, called "nucleus sampling," introduced earlier this year, picks each word from the smallest set of most-likely candidates whose combined probability reaches a chosen threshold, cutting off the outer reaches of the tail of the vocabulary. It is that nucleus sampling that shows up as a pattern in Grover the discriminator, revealing bad Grover's fakes as different from human writing.
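For readers who want to see the mechanics, nucleus sampling (also known as top-p sampling) can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the authors' code: the toy distribution, the threshold `p=0.9`, and the names `nucleus_filter` and `nucleus_sample` are hypothetical, and a real decoder would apply the same step to a language model's next-word probabilities at every position.

```python
import random


def nucleus_filter(probs, p=0.9):
    """Keep the smallest set of most-likely words whose cumulative
    probability reaches p, then renormalize so the kept set sums to 1."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept, total = [], 0.0
    for token, prob in ranked:
        kept.append((token, prob))
        total += prob
        if total >= p:
            break
    return {token: prob / total for token, prob in kept}


def nucleus_sample(probs, p=0.9, rng=random):
    """Draw one word at random from the renormalized nucleus."""
    nucleus = nucleus_filter(probs, p)
    tokens = list(nucleus)
    weights = [nucleus[token] for token in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


# Made-up next-word probabilities, for illustration only.
toy_probs = {"the": 0.5, "a": 0.3, "this": 0.15, "yon": 0.04, "thilke": 0.01}
rng = random.Random(0)
samples = [nucleus_sample(toy_probs, p=0.9, rng=rng) for _ in range(1000)]
# With this distribution and p=0.9, "yon" and "thilke" fall outside the
# nucleus, so they can never be drawn.
print(sorted(set(samples)))
```

Because the rare words are dropped before sampling, text produced this way never contains them, which is the kind of statistical fingerprint the article describes the discriminator picking up on.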

The authors have chosen a different path from that of OpenAI, by releasing all their code. When OpenAI introduced GPT-2 in February, they caused a stir by deciding not to release code for the most sophisticated version, arguing it was too dangerous to provide would-be miscreants with Weapons of Mass Composition. But Zellers and team argue there is no safety in obscurity.

"At first, it would seem like keeping models like Grover private would make us safer," they observe. But, "If generators are kept private, then there will be little recourse against adversarial attacks."

The authors expect an arms race, but they argue that at some point, a deeper human knowledge of the world needs to come into machine learning in order to refute the fakes. "Our discriminators are effective, but they primarily leverage distributional features rather than evidence," they write, referring to the distribution of language in the tail.

"In contrast, humans assess whether an article is truthful by relying on a model of the world, assessing whether the evidence in the article matches that model.

"Future work should investigate integrating knowledge into the discriminator."