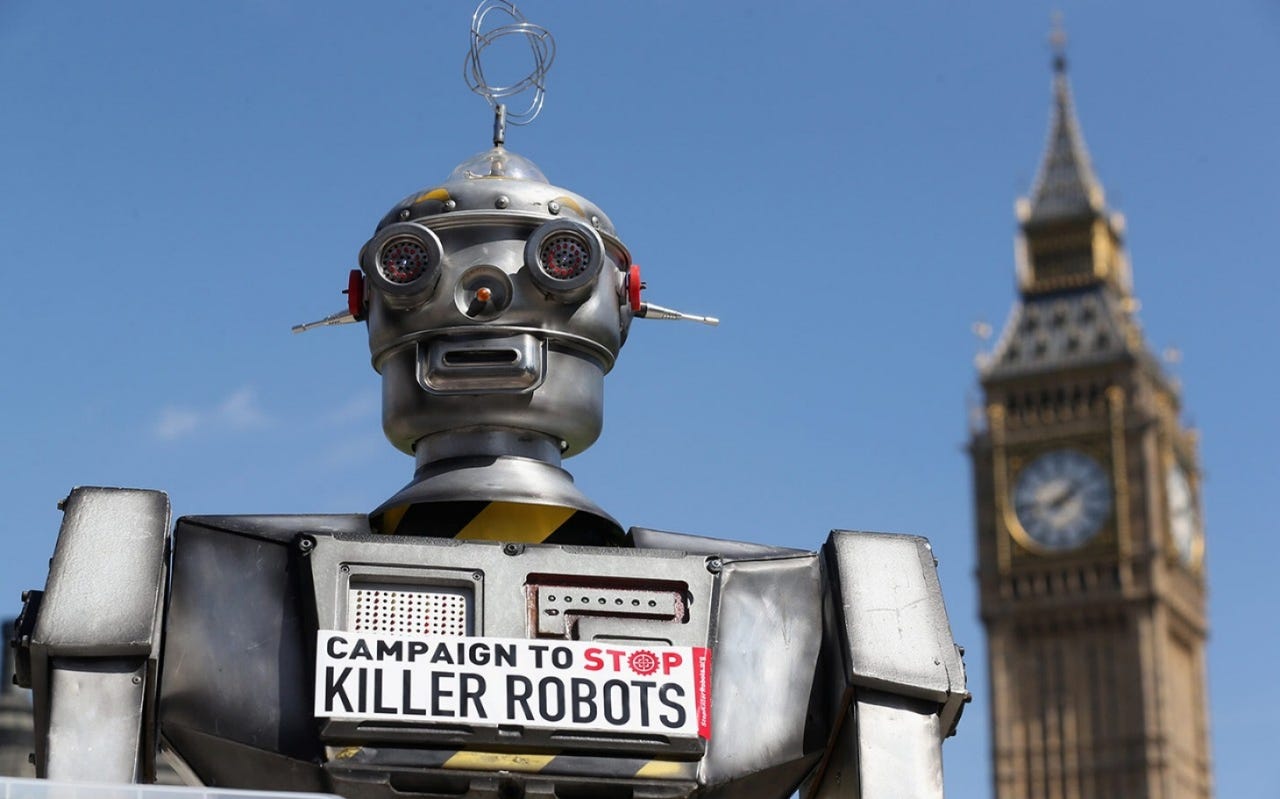

UK bans fully autonomous weapons after Elon Musk letter

The UK has sided with global robotics and AI experts in formally declaring that humans will always retain control over the country's robotic weapons systems.

In August, Elon Musk led 116 experts in robotics and AI in calling for a ban on autonomous weapons. The letter, with signatories from 26 countries, was sent to the UN with an ominous warning:

"We do not have long to act. Once this Pandora's box is opened, it will be hard to close."

The announcement from the UK Ministry of Defence coincides with the Defence and Security Equipment International show, one of the biggest arms exhibitions in the world.

Mark Lancaster, the minister for the armed forces, said: "It's absolutely right that our weapons are operated by real people capable of making incredibly important decisions, and we are guaranteeing that vital oversight."

The issue is drawing so much attention because autonomous weapons systems are on the cusp of becoming a reality. Russian arms maker Kalashnikov recently announced it was developing autonomous combat drones that could acquire targets and make decisions by themselves.

Yesterday I reported that Israeli autonomous drone maker Airobotics is entering the defense industry.

Some semi-autonomous weapons, which require some human oversight, are currently in use around the world. One example is South Korean gun turrets along the border with North Korea, which can lock onto human targets.

Missile defense systems are also largely autonomous.

The U.S. Department of Defense doctrine on so-called Lethal Autonomous Weapons Systems (LAWS) is somewhat vague. DoD Directive 3000.09 states that autonomous weapons systems "shall be designed to allow commanders and operators to exercise appropriate levels of human judgment over the use of force," wording that is strikingly non-committal.

Last year, then-Deputy Defense Secretary Robert Work told the Boston Globe, "We will not delegate lethal authority to a machine to make a decision."

But Work went on to complicate that stance, adding, "The only time we will . . . delegate a machine authority is in things that go faster than human reaction time, like cyber or electronic warfare."