Amazon's Alexa gets a new brain on Echo, becomes smarter via AI and aims for ambience

Meet Alexa and the new Echo brain.

Amazon is making Alexa smarter with natural turn-taking, conversations with multiple people, better natural language understanding, and the ability to be taught by customers. The first target is the smart home, but Alexa for Business is likely to follow.


The Alexa overhaul and artificial intelligence improvements were outlined as Amazon launched its latest batch of Echo devices.

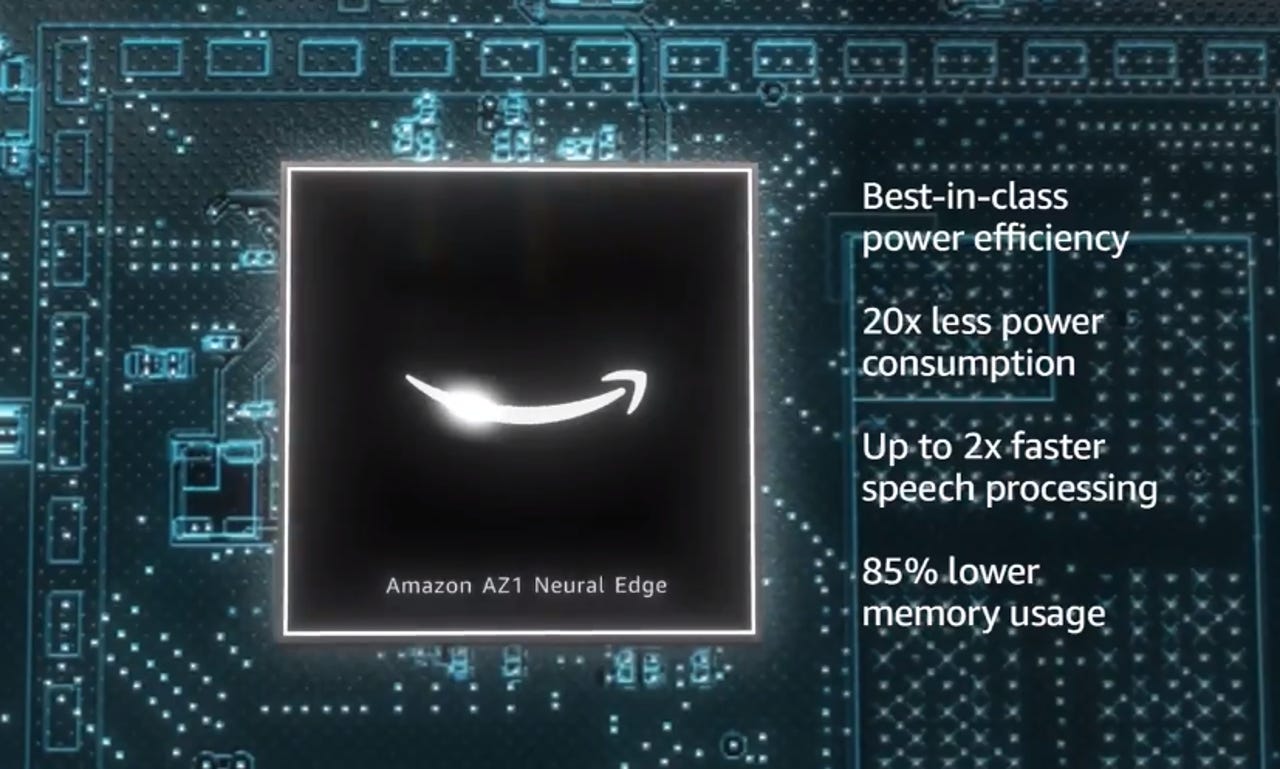

Amazon's new Echo devices are evolving into smart home edge computing devices. For instance, they use the company's AZ1 Neural Edge processor, which offers 20x less power consumption, double the speech processing, and 85% lower memory usage.


That processor building block, along with Amazon's artificial intelligence advances, is designed to make the Echo more ambient. Dave Limp, senior vice president of devices and services at Amazon, said the new Echo devices are designed to make "moments count."

Features such as Reading Sidekick, designed to help kids read, and conversational improvements are aimed at making Alexa more of a family member, one you can talk to without saying "Alexa" as often.

Rohit Prasad, vice president and head scientist for Alexa Artificial Intelligence at Amazon, outlined the following capabilities:

- Alexa can pick up on interaction cues, note errors, and then correct them.

- Alexa can learn from humans by asking follow-up questions when it has a gap in knowledge about returns and learned modes.

- Deep learning-based parsers to understand gaps and extract new concepts.

- More natural conversation and adaptation.

- Follow-up mode when interacting with humans.

Prasad noted that Alexa can use visual and acoustic cues to determine the best action to take. "This natural turn-taking allows people to interact with Alexa at their own pace," said Prasad.


In an interview, Prasad said the capabilities added to Alexa are advances in AI as much as in conversational technology. The new capabilities spent anywhere from months to years in the labs. For instance, the ability to teach Alexa instantaneously took three to four years to develop.

As for Alexa's ability to interpret context and adjust how to speak to you, Prasad said the foundational technology took years.

Alexa's ability to naturally take turns during conversation required multiple technologies. For instance, Alexa separates noise from speech and picks up linguistic cues as well as visual ones like poses when possible, said Prasad.

Aside from the AI models and software work, Alexa's new features are also enabled by Amazon's AZ1 Neural Edge processor, Prasad said. "The processor on the device is key with a fast-paced conversation," he said. "The neural accelerator on the device makes decisions much faster." There's also a privacy win, given that data is stored and deleted locally.


Rohit Prasad teaching Alexa.

Chasing the ambient dream starting with the home

Limp's talk outlined the new Echo devices and Echo Show 10, but the overall theme was that these devices can follow you around the room as a person would.

In addition, Alexa is going to be more interconnected with services.

Limp said the new neural processors run entirely locally but can still tell when there's motion. He added that Echo devices such as the Show can use smart motion as well as visual cues to keep you centered.

There's also a business use case, with Amazon Chime and Zoom integration and the ability to handle group calls.

Today, the Echo launch is all about a smarter Alexa and making her a part of your family. Rest assured, Alexa for Business is going to be a fast follow for next-gen working arrangements and hybrid offices.


Amazon rolled out Alexa for Business more than a year ago and has steadily added features via AWS. Skill Blueprints launched in April 2018 as a way to allow anyone to create skills, and a 2019 update let users publish them to the Alexa Skills Store.

Prasad wasn't going to speculate on the roadmap for Alexa for Business, but he did say Alexa's new Echo capabilities would apply to productivity as well as office settings. Alexa's new features could also apply to yet-to-be-determined business use cases. "There's the potential to be able to teach Alexa anything in principle," said Prasad.