A few errors could be key to super-efficient computer chips

"Everyone makes mistakes" might be a nice "words-to-live-by" phrase, but it hardly seems like the path to greater performance from computer chips. Yet a group of university researchers has determined that processors that can tolerate a couple of errors could yield huge gains in efficiency.

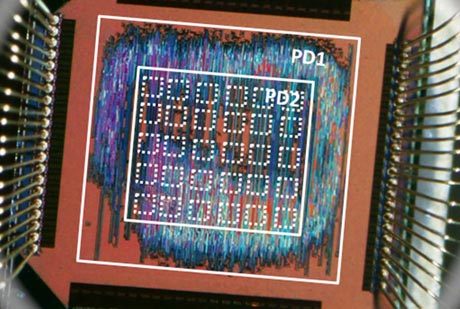

The so-called inexact computer chip would be a whopping 15 times more efficient than current CPUs that include error-correcting mechanisms, managing to be both faster and less power-hungry. The concept is based on the idea that certain applications, such as image-processing software, can tolerate some mistakes in processing.

The researchers "pruned" certain circuits out of their chip design to help provide the efficiency boost. Of course, the gains scale with the number of errors tolerated, so the biggest improvements are available only to applications that can live with the most mistakes, which could limit the real-world applications of the approach.
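To get a feel for that trade-off, here is a rough software analogy in Python. It is only a sketch, not the researchers' circuit design, which isn't detailed here: the function names `inexact_add` and `max_error` are made up for illustration, and "pruning" is simulated by simply ignoring the lowest bits of each number before adding.

```python
def inexact_add(a: int, b: int, pruned_bits: int) -> int:
    """Add two non-negative integers while ignoring their lowest `pruned_bits` bits.

    Hypothetical stand-in for an adder whose low-order circuitry was removed.
    """
    mask = ~((1 << pruned_bits) - 1)  # clears the lowest `pruned_bits` bits
    return (a & mask) + (b & mask)


def max_error(pruned_bits: int) -> int:
    """Largest possible gap between the exact sum and the inexact sum."""
    return 2 * ((1 << pruned_bits) - 1)


if __name__ == "__main__":
    a, b = 200, 55  # e.g. two 8-bit pixel brightness values
    exact = a + b
    for k in range(4):
        approx = inexact_add(a, b, k)
        print(f"pruned bits={k}  inexact sum={approx}  error={exact - approx}")
```

Each extra pruned bit stands for circuitry that no longer has to be built or powered, but it also roughly doubles the worst-case error. Being off by a few brightness levels in one pixel is hard to notice; the same slip in a bank balance would not be.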

The inexact chip is initially being promoted for embedded applications, such as hearing aids. It's also being used for a low-cost tablet in India, where classrooms often lack electricity. It will be interesting to see if there's ever a way to apply this approach to mainstream computer chips, where significant performance gains are getting a bit harder to come by. Would there be a way to prune circuits and still deliver the computing experience expected by the typical user? After all, a few errors in the millions of pixels of a digital image is one thing, but you don't want them showing up in your Word document.

[Via The Verge]