Datacentre energy use: there's good news and bad

Datacentres are powering down. This may sound a bit odd given that world + dog seem to be opening new facilities every other week -- though bear in mind that we rarely hear about old, outdated facilities being mothballed or re-purposed. No, the point I'm making is that datacentres are now using less power than predicted.

Let's backtrack. If you're interested in such things, you may have read in 2007 about a ground-breaking, widely reported study which found that datacentres were consuming 1.2 percent of the USA's total electricity. That's a lot of grid-sucking -- worth $2.7 billion annually.

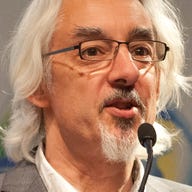

It came as quite a shock. The report's author, Jonathan G Koomey, a professor at Stanford University and a scientist at Lawrence Berkeley National Laboratory, had researched the amount of electricity that servers were using both in the USA and around the world.

He said in the report: "Aggregate electricity use for data centers doubled worldwide over the period 2000–2005. Almost all of this growth was the result of growth in the number of volume servers, with only a small part of that growth being attributable to growth in the power use per unit."

He found that at least half of the consumption was being used for power and cooling and that, if current trends continued, by 2010 energy usage by servers including all the gubbins they need to keep working would increase by 76 percent. Most of this growth was attributed to the growth in the numbers of servers.

Koomey concluded that: "The total power demand in 2005 (including associated infrastructure) is equivalent (in capacity terms) to about five 1000 MW power plants for the U.S. and 14 such plants for the world. The total electricity bill for operating those servers and associated infrastructure in 2005 was about $2.7 B and $7.2 B for the U.S. and the world, respectively." Ouch.

A year later, Koomey produced a follow-up study with similar findings. But since then, there's been a recession, and we've seen huge growth in the use of virtualisation and increased attention being paid to power usage by servers -- much of it, I suspect, driven at least in part by Koomey's findings. The combination should have reduced that power consumption -- but has it?

Koomey's latest report, released last month and entitled 'Growth in data center electricity use 2005 to 2010', finds that electricity use increased between 2005 and 2010, but not by as much as predicted. It doubled between 2000 and 2005 but increased by only 56 percent from 2005 to 2010.
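To put those two five-year figures on the same footing, here's a back-of-envelope sketch that converts each into a compound annual growth rate. The function name is mine rather than anything from the report, and it assumes smooth compounding across each period, which Koomey doesn't claim.

```python
def annual_growth_rate(total_growth, years):
    """Compound annual growth rate implied by total growth over a period.

    total_growth is fractional: 1.0 means +100 percent (a doubling).
    Assumes growth compounds evenly every year (an illustrative assumption).
    """
    return (1.0 + total_growth) ** (1.0 / years) - 1.0


# Figures as quoted in the article: a doubling in 2000-2005,
# then a 56 percent rise in 2005-2010.
rate_2000_2005 = annual_growth_rate(1.00, 5)
rate_2005_2010 = annual_growth_rate(0.56, 5)

print(f"2000-2005: {rate_2000_2005:.1%} a year")
print(f"2005-2010: {rate_2005_2010:.1%} a year")
```

On those assumptions, growth slows from roughly 15 percent a year to roughly 9 percent a year.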

Koomey attributes this more to lower growth rates in the numbers of servers than to greater efficiencies.

Google, often held up as the bad boy in class because of its large numbers of huge datacentres, is pulled out as a special case. It builds its own servers, which don't show up in sales figures, so it's not included in the main figures, which derive from sources such as IDC. The report says: "While Google is a high profile user of computer servers, less than 1% of electricity used by data centers worldwide was attributable to that company’s data center operations."

Cloud computing has had an impact, albeit a small one: cloud providers' datacentres tend to be much more efficient than the PUE of 2.0 that underpins most of Koomey's assumptions, and which tends to be more the norm in in-house datacentres.
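PUE (power usage effectiveness) is total facility energy divided by the energy that reaches the IT kit, so a PUE of 2.0 means every unit of computing energy costs another unit in power and cooling overhead. A minimal sketch of that arithmetic follows; the function names are hypothetical, and the 1.2 figure for an efficient facility is an illustrative assumption of mine, not a number from the report.

```python
def facility_energy(it_energy_kwh, pue):
    """Total facility energy for a given IT load, using PUE = total / IT."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_energy_kwh * pue


def overhead_share(pue):
    """Fraction of total facility energy spent on non-IT overhead."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - 1.0 / pue


it_load_kwh = 1000.0  # arbitrary IT load, for comparison only

in_house = facility_energy(it_load_kwh, 2.0)   # PUE assumed in the report
efficient = facility_energy(it_load_kwh, 1.2)  # hypothetical efficient facility

print(f"PUE 2.0: {in_house:.0f} kWh total, {overhead_share(2.0):.0%} overhead")
print(f"PUE 1.2: {efficient:.0f} kWh total, {overhead_share(1.2):.0%} overhead")
```

At a PUE of 2.0, half of everything drawn from the grid goes on overhead rather than computing, which is why shifting the same workload to a lower-PUE facility cuts the total.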

Lower usage also stems from improved power management by newer servers.

Koomey notes that the rate of growth of volume and mid-range servers has slowed but that the number of high-end servers -- those that presumably get used as virtualisation hosts -- is growing "rapidly", and that storage is growing rapidly, as is its power consumption.

Koomey also notes that there's a lot of uncertainty when predicting -- especially about the future. He remarks: "Anecdotal evidence indicates that 10-30% of servers in many data centers are using electricity but no longer delivering computing services. These servers have not yet been decommissioned and are probably not counted in installed base statistics. In many facilities nobody even knows these servers exist, so it is likely at least some of them are not included in the installed base statistics. Actual server electricity use could therefore be higher than estimated here."

Koomey concludes that: "the rapid rates of growth in data center electricity use that prevailed from 2000 to 2005 slowed significantly from 2005 to 2010, yielding total electricity use by data centers in 2010 of about 1.3% of all electricity use for the world, and 2% of all electricity use for the US."

So that's kind of good news, and a feather in the cap for engineers beavering away on technology that can save money and energy. It's working: keep it up!