High Dynamic Range: The quest for greater image realism

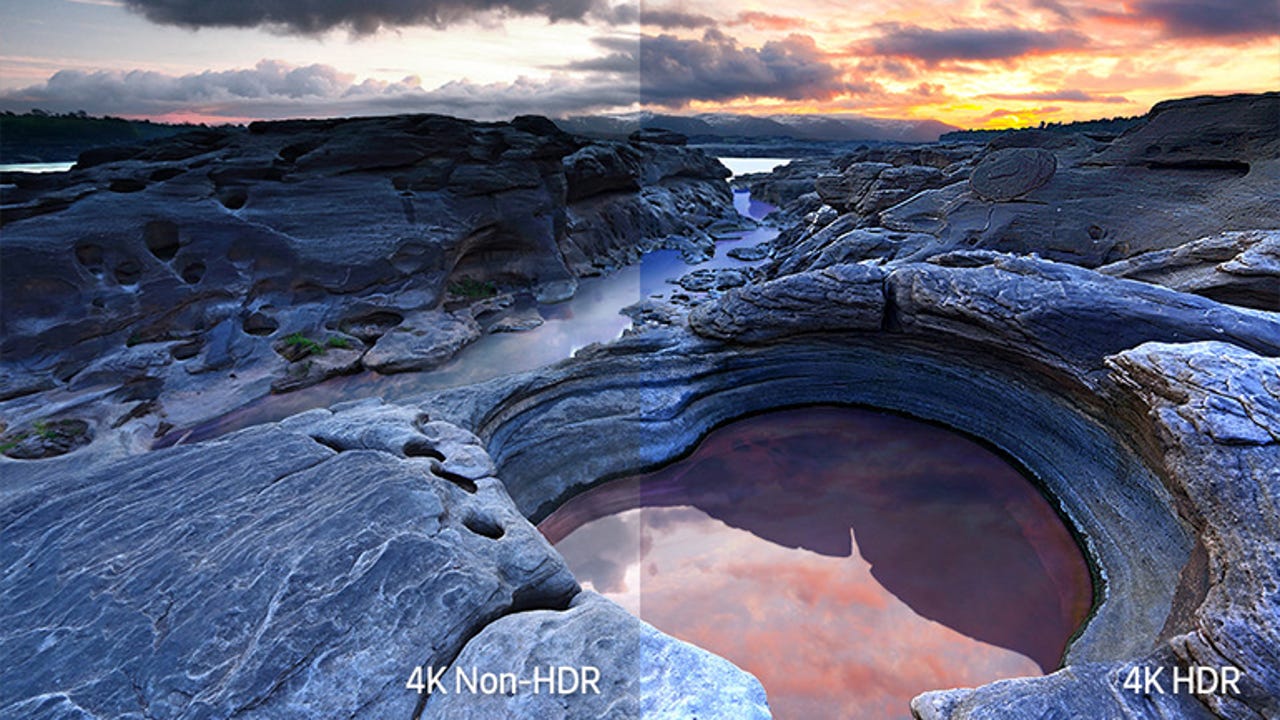

This illustration attempts to represent the difference between a 4K standard dynamic range (SDR) image and an HDR image.

In 1934 Marconi-EMI demonstrated the monochrome 405-line electronic television system that was eventually adopted by the BBC and became the basis of public television in the UK.

Since then, television has developed at an ever-accelerating pace and is the main driver of computer display technology. Today we have digital colour televisions with 4K resolution, with 8K on the horizon. With image resolution now arguably as high as it ever needs to go, attention has shifted to other aspects of image quality such as colour range (gamut), refresh rates and contrast range or High Dynamic Range (HDR).

HDR for displays should not be confused with HDR photography. Photographers have been working with HDR images for years, but in that case multiple exposures are used to capture a high dynamic range, which is then compressed by HDR editing software into a standard dynamic range image. Many of the most recent smartphones have an option to do this trick automatically.

What's so important about High Dynamic Range?

HDR can potentially deliver a bigger impact on image quality than higher resolutions. HDR extends the range of displayed contrast much closer to reality -- far beyond the range we're used to for TV and computer displays. HDR images have deeper blacks, brighter highlights and more detailed shadows and highlights. When coupled with improvements in colour gamut, the result is much more realistic images.

In addition, image quality will be more closely controlled. Until now, image quality control for broadcast television, streamed video and movies on optical disk has been open ended. The image creators output the highest-quality images they can, based on how their content appears on reference displays. How faithfully those images are reproduced on consumer displays relies on how closely display manufacturers adhere to display standards and on the individual settings, characteristics and age of consumers' display devices. HDR signal formats introduce end-to-end image management by including information (metadata) on how the original images were captured or created. On playback, this is matched to the characteristics of each display and the image is adjusted for the best possible match to the original intentions of the content creator.

For years it was accepted that 8 bits per colour channel, giving a range of 16,777,216 shades, was enough, and that human vision could not discriminate any finer differences. This was true, but only for the limited range of contrast and colour gamut that the displays of the time were capable of. The HDR displays now coming onto the market not only feature HDR, but also a larger colour gamut, due to improvements in the purity of the red, green and blue elements of each pixel. HDR uses at least 10 bits per colour channel for a palette of 1,073,741,824 shades.
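The two palette sizes quoted above follow directly from the bit depth. A minimal sketch of the arithmetic, assuming three colour channels (red, green and blue) per pixel; the function name `colour_count` is purely illustrative:

```python
def colour_count(bits_per_channel: int, channels: int = 3) -> int:
    """Number of distinct colours for a given bit depth per channel."""
    # Each channel has 2**bits levels; the palette is every combination.
    return 2 ** (bits_per_channel * channels)


for bits in (8, 10):
    levels = 2 ** bits
    print(f"{bits} bits per channel: {levels} levels, "
          f"{colour_count(bits):,} colours")
```

Going from 8 to 10 bits quadruples the number of levels per channel, which is what allows a much wider brightness range to be covered without visible banding between adjacent steps.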

Image accuracy has also relied on the transfer characteristics (gamma) of CRT displays, which happened to be a reasonable match for the brightness response curve of the human visual system. When LCDs replaced CRTs, manufacturers simply replicated the gamma curve of the typical CRT in the electronics of the LCD. As part of Dolby Vision, Dolby Laboratories have developed a new transfer curve they refer to as PQ (for Perceptual Quantizer). There are other schemes such as Hybrid Log Gamma developed by the BBC and NHK.
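For readers who want to see what the PQ curve looks like numerically, the sketch below uses the constants published when PQ was standardised as SMPTE ST 2084. It is an illustration of the transfer function only, not of Dolby Vision as a whole, and the function names are our own:

```python
# PQ constants as published in SMPTE ST 2084.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PEAK = 10000.0  # cd/m2 represented by a full-scale PQ signal


def pq_eotf(signal: float) -> float:
    """Convert a normalised PQ signal (0..1) to luminance in cd/m2."""
    e = max(signal, 0.0) ** (1 / M2)
    return PEAK * (max(e - C1, 0.0) / (C2 - C3 * e)) ** (1 / M1)


def pq_inverse_eotf(luminance: float) -> float:
    """Convert luminance in cd/m2 to a normalised PQ signal (0..1)."""
    y = (max(luminance, 0.0) / PEAK) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2


for nits in (0.1, 100.0, 1000.0, 10000.0):
    print(f"{nits:>8} cd/m2 -> PQ signal {pq_inverse_eotf(nits):.4f}")
```

Note how roughly half of the signal range is spent on luminance below about 100cd/m2: the curve allocates code values according to where the eye is most sensitive, rather than mimicking the behaviour of a CRT.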

Until quite recently the standard for HDTV has been ITU-R Rec. 709, which includes a legacy gamut standard based on the colour phosphors of the old CRT displays (shown by the dotted line in the above CIE xy colour space diagram). For HDR a new standard, Rec. 2020, is to be used which specifies a much larger gamut (shown by the solid line above).
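One rough way to put a number on "much larger" is to compare the areas of the two gamut triangles on the CIE xy diagram. The sketch below uses the red, green and blue primary coordinates published in Rec. 709 and Rec. 2020. Bear in mind that the xy diagram is not perceptually uniform, so the area ratio is only an indication, not a measure of how many more colours a viewer will actually see:

```python
# CIE xy chromaticity coordinates of the red, green and blue primaries.
REC_709 = [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)]
REC_2020 = [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)]


def triangle_area(primaries):
    """Area of the gamut triangle, using the shoelace formula."""
    (x1, y1), (x2, y2), (x3, y3) = primaries
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2


area_709 = triangle_area(REC_709)
area_2020 = triangle_area(REC_2020)
ratio = area_2020 / area_709
print(f"Rec. 709 area:  {area_709:.4f}")
print(f"Rec. 2020 area: {area_2020:.4f}")
print(f"Rec. 2020 covers about {ratio:.2f}x the xy area of Rec. 709")
```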

Experiments performed by Dolby Laboratories have shown that a dynamic range of 22 stops would be a suitable range for HDR images (see Dolby White Papers 'A Unified Approach to High Dynamic range' and 'The Art of Better Pixels'). Dolby established that a display with a black level of 0.001cd/m2 and highlights of up to 10,000cd/m2 would meet 90 percent of viewer preferences. LG is claiming 21 stops for its current HDR OLED TVs.

Comparing this to current LCD technology without HDR enhancement, a VA LCD panel might have a contrast range of 2,000 -- perhaps even 5,000 -- to 1, or roughly 11 to 12 stops, while a TN or IPS panel might manage between 800 and 1,200 to 1, or roughly 10 stops (in practice these display contrast ratios are optimistic).
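A stop is a doubling of luminance, so a contrast ratio converts to stops by taking its base-2 logarithm. The short sketch below applies that conversion to the figures quoted above; the helper names are illustrative:

```python
import math


def stops(contrast_ratio: float) -> float:
    """Dynamic range in stops for a contrast ratio of N to 1."""
    return math.log2(contrast_ratio)


def stops_between(black: float, white: float) -> float:
    """Dynamic range in stops between two luminance levels in cd/m2."""
    return math.log2(white / black)


print(f"VA panel, 2,000:1     {stops(2000):.1f} stops")
print(f"VA panel, 5,000:1     {stops(5000):.1f} stops")
print(f"TN/IPS panel, 800:1   {stops(800):.1f} stops")
print(f"TN/IPS panel, 1,200:1 {stops(1200):.1f} stops")
print(f"0.001 to 10,000 cd/m2 {stops_between(0.001, 10000):.1f} stops")
```

By this arithmetic the 0.001cd/m2 to 10,000cd/m2 target works out at a little over 23 stops, in the same region as the 22 stops Dolby quotes, and more than twice the figure for a conventional LCD panel.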

HDR-capable displays

As Dolby established, a practical realisation of HDR requires displays capable of a large contrast range and expanded gamut. Right now there are two competing technologies in the display market: the relatively recent Organic Light Emitting Diode (OLED) display and the mature LCD. Sony debuted the tiny 11-inch XEL-1 OLED TV in 2007, while the first commercial LCD came on to the market in the 1990s. Both LCD and OLED require augmentation to meet the needs of HDR.

In principle, an OLED display is far simpler than an LCD, each pixel consisting of a red, a green and a blue OLED, which directly emit light. In practice, the market leader in OLED, LG Display, uses an architecture called WRGB or WOLED-CF (White Organic Light Emitting Diodes with Colour Filters), in which each pixel has four white OLED elements, each created using OLEDs with blue and yellow emitters. As with LCD, these have colour filters on top -- red, green and blue, with no filter for the fourth, white element. The WRGB technology, which was developed by Kodak and purchased by LG Display, was found to be easier to scale up for large-scale OLED production, although it suffers from lower efficiency than a panel with red, green and blue pixel elements (pels). The added white pel per pixel helps LG panels reach the peak white brightness needed for HDR.

OLED pixels can achieve an infinite contrast ratio because they emit no light when turned off. In practice, contrast is limited by ambient light reflected from the display surface. However, OLED is a new technology, and current OLED displays can suffer from limited lifetime, permanent image burn-in, short-term image retention and limited maximum brightness. The technology behind large OLED displays is owned by LG, and LG Display is the only company making large-format OLED displays in any volume. Other high-profile TV manufacturers offering OLED TVs are buying the panels from LG Display.

Augmented LCDs

In contrast with OLED, LCDs are a well-developed and stable technology with high manufacturing yields. LCDs use the electrically variable polarisation of liquid crystal cells to modulate light from a backlight. Most current backlights use 'white' LEDs, or WLEDs (typically these LEDs have a Yttrium-Aluminum-Garnet (YAG) yellow phosphor pumped by a Gallium Nitride (GaN) blue source). The problem with WLEDs as a light source is that they don't produce a spectrally smooth white light -- or more importantly, equal levels of light centred at the red, green and blue peaks.

An LCD pixel has three elements. Each element has either a red, a green or a blue filter in front of it, and ideally the backlight should have a strong output at frequencies matching the transmission peaks of each of these filters, to produce the largest possible colour gamut and brightness. As our spectral plot shows, WLED backlights fall short in this regard:

The output of a WLED (a blue GaN LED with a YAG yellow phosphor) is neither a 'pure' white, nor a particularly good match for the transmission characteristics of the LCD colour filters as shown by the dotted lines.

Spectrum for a 'white' screen captured from an LCD with a quantum dot backlight. The difference in the R,G and B peaks is due to the colour temperature settings of the display. The close match between the backlight spectrum and the RGB colour filters is shown by the dotted lines which represent the transmission characteristics of the filters.

This image shows a range of sizes of quantum dots, manufactured by PlasmaChem GmbH, in colloidal suspension and illuminated by ultraviolet light.

The brightness and gamut of the latest LCDs are being greatly enhanced by quantum dot backlights. For a quantum dot LCD, high-brightness blue LEDs provide the backlight illumination. The light passes through a thin plastic sheet doped with quantum dot nano-crystals, fine-tuned to fluoresce at the lower frequencies of green and red when illuminated by the higher-frequency blue light. The green and red light is narrow band and can be closely matched to the characteristics of the red and green filters in each LCD pixel. Augmenting current LCDs in this way is relatively simple and doesn't require huge changes to the manufacturing line. All that's needed is to change the backlight LEDs from WLEDs to high-intensity blue LEDs and to insert a thin plastic sheet carrying embedded quantum dots in front of the backlight. Colour gamut and backlight efficiency are improved because the red, green and blue light from the backlight closely matches the transmission characteristics of the RGB colour filters.

Unfortunately, different display manufacturers use different names for their use of nanotechnology. For example, Samsung refers to its quantum dot backlight as 'QLED', while LG calls its technology 'Nano Cell'. Due to high levels of secrecy it's hard to tell if these are similar technologies or not. LG's Nano Cell has been reported as a use of nanotechnology to absorb the wavelengths of light between the red and green colour filters. This may be an accurate description, or it may be a misunderstanding of the use of re-emissive red and green nano dots to improve the backlight.

In the future, quantum dot technology offers the possibility of further improvements in display quality. For example, quantum dots may be used to replace the passive colour filters in an LCD or even be used as directly emissive pixels, since as well as being photo-luminescent (light activated) quantum dot nanocrystals are also electroluminescent, so they can be driven by an electrical field. WOLED-CF panels may also benefit from quantum dot technology, improving their gamut.

Backlight modulation

The on-to-off light transmission ratio of the liquid crystal cells in an LCD sets a limit on contrast ratio. The simplest way to extend the contrast range is to modulate the brightness of the backlight. Early attempts at this -- Dynamic Contrast Ratio (DCR) -- modulated the brightness of the entire backlight and didn't prove to be very effective, although this approach is still branded as HDR-capable by VESA DisplayHDR 400 (see below).

For HDR, an LCD backlight is divided into small areas that can be individually controlled. The most effective system, known as Full Array Local Dimming (FALD), is to use an array of backlight LEDs across the entire back of the display. The drawback is that a FALD display is not as slim as an edge-backlit display.

A representation of Full Array Local Dimming (FALD) LED backlighting.

Of course, with any backlight that offers zoned local brightness control, the backlight blurs across the display due to the necessary presence of an optical diffuser. In this respect any display that uses emissive pixels, such as OLED, is superior because the full range of brightness control is available for each and every pixel.

Unfortunately, edge-lit backlights, which use optical light guides and diffusers to even the light out across the display, are significantly cheaper, and edge-lit backlighting is used on the majority of displays. With edge lighting, backlight control can only be by zones, divided into rows and columns. With backlight arrays along the left and right edges this naturally results in two columns, and for rows a division of four is not uncommon. Two columns of four rows results in only eight brightness-controlled zones, and because of diffusion these zones aren't clearly defined. This lack of precision in backlight control means that these displays cannot truly produce HDR contrast over small areas of the screen. As a result, small image highlights against dark backgrounds are surrounded by halos. Such shortcomings of edge lighting become more apparent as manufacturers attempt to push peak brightness.

Manufacturers of HDR-capable LCDs are generally coy about the number of local dimming zones their products employ. For example, Samsung's top-of-the-range TVs for 2018 do feature FALD (Samsung calls it Direct Full Array Elite and has trademarked that phrase), but the company doesn't reveal how many zones are employed.
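The halo problem with coarse zones can be illustrated with a toy model of the eight-zone arrangement described above. The sketch assumes each zone is simply driven to the level of the brightest pixel it contains. That rule is our assumption for illustration only: real TVs use proprietary dimming algorithms, and the blurring effect of the diffuser is ignored here.

```python
def zone_levels(frame, zone_rows=4, zone_cols=2):
    """Return a zone_rows x zone_cols grid of backlight levels (0..1).

    `frame` is a list of rows of pixel brightness values (0..1).
    Assumption: each zone is lit to match its brightest pixel.
    """
    height, width = len(frame), len(frame[0])
    levels = [[0.0] * zone_cols for _ in range(zone_rows)]
    for y, row in enumerate(frame):
        zr = y * zone_rows // height      # zone row this pixel falls in
        for x, value in enumerate(row):
            zc = x * zone_cols // width   # zone column this pixel falls in
            levels[zr][zc] = max(levels[zr][zc], value)
    return levels


# A black 8x8 'frame' containing a single full-brightness highlight.
frame = [[0.0] * 8 for _ in range(8)]
frame[1][6] = 1.0

levels = zone_levels(frame)
lit_zones = sum(1 for row in levels for level in row if level > 0)
lit_fraction = lit_zones / (len(levels) * len(levels[0]))
print(levels)
print(f"1 pixel in 64 lights {lit_fraction:.1%} of the backlight")
```

In this model a highlight occupying under 2 percent of the picture forces an eighth of the backlight to full power, and the liquid crystal cells in the rest of that zone cannot block all of the light. A FALD backlight with hundreds of zones confines the same spill to a far smaller area.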

A representation of the zoned backlight control for an edge-lit, eight-zone HDR LCD display. Although indicated here for clarity, in reality the backlight LEDs are concealed behind the bezel.

HDR standards: Dolby Vision

HDR requires new standards to specify such things as video signal formats and colour management. Streaming, broadcast and other program sources will have to support both SDR (Standard Dynamic Range) and HDR displays. At this early stage there are a number of competing standards or systems. Some commentators on HDR have said that consumers should not be concerned about formats as it's likely that HDR-capable televisions and other displays will include support for all the major competing systems. However, at present, it seems the majority of display manufacturers are electing to use the royalty-free 'open' standard HDR10 rather than pay royalties on a proprietary system.

The leading proprietary system -- Dolby Vision, shown at the International Broadcasting Convention in 2014 -- comes from Dolby Laboratories. Much of the buzz about HDR has been generated by Dolby, and Dolby Laboratories made early efforts to get major media companies and display manufacturers to sign up for Dolby Vision licensing.

Dolby Vision support initially required the use of dedicated hardware in the form of a decoding chip, but Dolby now provides it as software, so it's possible to retro-fit as a firmware upgrade to some recent televisions.

Alternative HDR standards

An alternative open -- that is, royalty-free -- standard, HDR10, was formulated by the Consumer Technology Association in August 2015. A number of companies, including Samsung, Dell, LG, Sharp, Sony, Vizio, Microsoft and Sony Interactive Entertainment, have adopted HDR10, which has recently been extended to HDR10+.

Metadata

Traditional colour management uses colour profiles of every device in the capture and reproduction chain. At every step in the process these profiles are used to translate colour from one device to the next, so that the end result, given the limitations of every device in the chain, is as true to the original as possible. Until now, colour management has never been applied to television.

Because there is considerable variation in the capability of each HDR display in the precision of brightness control, in peak brightness, in black level and in colour gamut, HDR video includes metadata describing the colour gamut and brightness range used during the mastering of the source material. On reception/playback this is compared with the capabilities of the individual display and translated so the original material is reproduced as faithfully as possible within the limitations of the display. Dolby calls this process 'display management'.

Dolby Vision incorporates dynamic metadata that encodes the image parameters for every frame, while the open standard HDR10 uses static metadata, where the imaging parameters are set at the start of playback and the same translation is applied to all frames. The more recent HDR10+ standard matches Dolby Vision by also using dynamic, frame-specific, metadata.
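The practical difference between static and dynamic metadata can be shown with a deliberately simplified model. In the sketch below, each 'frame' is represented only by its peak brightness, and the display scales content down linearly when it exceeds the display's own peak. Both the linear scaling and the function names are hypothetical stand-ins: real display management uses non-linear tone curves, and this is not the actual algorithm of Dolby Vision, HDR10 or HDR10+.

```python
def scale_for(content_peak: float, display_peak: float) -> float:
    """Scale factor needed to fit content within the display's peak."""
    return min(1.0, display_peak / content_peak)


def static_mapping(frame_peaks, display_peak):
    """One scale factor for the whole title, set at the start of playback."""
    scale = scale_for(max(frame_peaks), display_peak)
    return [peak * scale for peak in frame_peaks]


def dynamic_mapping(frame_peaks, display_peak):
    """A separate scale factor for each frame."""
    return [peak * scale_for(peak, display_peak) for peak in frame_peaks]


# Peak brightness (cd/m2) of three frames: a dim interior, a bright
# highlight and a moderately bright exterior. Values are made up.
frame_peaks = [100.0, 4000.0, 500.0]
display_peak = 1000.0

static_result = static_mapping(frame_peaks, display_peak)
dynamic_result = dynamic_mapping(frame_peaks, display_peak)
print("static: ", static_result)
print("dynamic:", dynamic_result)
```

With static metadata the single bright frame dictates the treatment of the whole programme, so the dim interior is shown at a quarter of its intended brightness. With dynamic metadata only the frame that actually exceeds the display's capability is compressed.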

HDR media sources

YouTube launched HDR video support on 7 November 2016 and there are YouTube channels that specialize in 4K HDR content such as The HDR Channel.

The specification for Ultra HD (UHD) Blu-Ray includes HDR, although again the range of formats supported by actual products is flexible. Netflix has announced support for HDR in both Dolby Vision and other HDR formats. Amazon announced in July 2015 that HDR was available through its Prime video service.

HDR Standards for TV, mobile devices and computer displays

The Ultra HD Premium logo.

Ultra HD Premium is a branding scheme created by the UHD Alliance (UHDA). It indicates to the consumer that a TV carrying the brand will provide "...the best possible UHD with HDR experience. Home entertainment products, mobile devices and content meeting these certification requirements bear the UHDA's Premium Logo marks, making them easy for consumers to identify and purchase with confidence."

Unfortunately as far as HDR is concerned, all the Ultra HD Premium brand appears to guarantee is that a TV will accept a 10-bit HDR signal and attempt to do something with it. Ultra HD Premium does not define the number of backlight zones there are behind the screen. A similar branding scheme and standard, Mobile HDR Premium, exists for mobile devices.

The VESA DisplayHDR logo.

HDR obviously isn't necessary for general 'office' tasks on a PC, but it will eventually be essential for graphic design, photography, video editing and playback -- and of course, for gaming. The Video Electronics Standards Association (VESA) has formulated the DisplayHDR standard for computer displays.

DisplayHDR initially focuses on LCDs and is divided into three tiers, each of which guarantees a certain peak brightness level (400, 600 or 1000 cd/m2) and an associated black level and gamut performance. This set of standards says little about the number of backlight zones employed, though. Global dimming is mandated for DisplayHDR 400 and local dimming for DisplayHDR 600, while DisplayHDR 1000 has local dimming with twice the contrast of 600. The DisplayHDR website lists certified products that meet DisplayHDR standards.

DisplayHDR test software

VESA recently announced the availability of test software for performance verification of HDR displays, available for free download. At present this tool -- DisplayHDR Test -- only runs on Windows and does not distinguish the number of zones in a backlight.

LCD versus OLED: HDR pros and cons

Dolby's experimental work has set a very high bar and HDR may gain a poor reputation due to the limitations of current HDR-branded products that offer a wide range of actual performance. High dynamic range and colour gamut, although related, are two different things and an HDR display with expanded colour gamut, but poor HDR performance, will still look better than an older SDR display.

Both LCDs with a brightness-modulated backlight and OLED displays are capable of high contrast ratios. OLED displays are limited on maximum brightness, while LCD can have high brightness, but poorer black-level performance than OLED.

The full contrast range for OLED is available down to the pixel level, while for LCD it's constrained to the resolution of the backlight. So OLED is capable of pinpoint highlights, which LCD cannot manage.

Current OLED TVs are WOLED-CF -- white OLED with colour filters -- which suffer the same gamut problems as traditional LCD. OLED displays may come closer to the ideal contrast range for HDR, but as a mature technology LCD HDR displays are likely to be cheaper, provide better reliability, wider gamut and a longer operating life.

HDR is still developing, and it's yet to be determined which systems and standards will be widely adopted. Unfortunately display manufacturers are concealing features -- particularly the number of backlight zones for HDR LCDs -- that have a big effect on HDR performance. Consumers are faced with elastic 'standards' that cater for the different capabilities of LCD and OLED technology, and that allow affordable displays to be marketed that claim some HDR capability, even if it isn't very effective.
