Amazon S3 Access Points, Redshift updates as AWS aims to change the data lake game

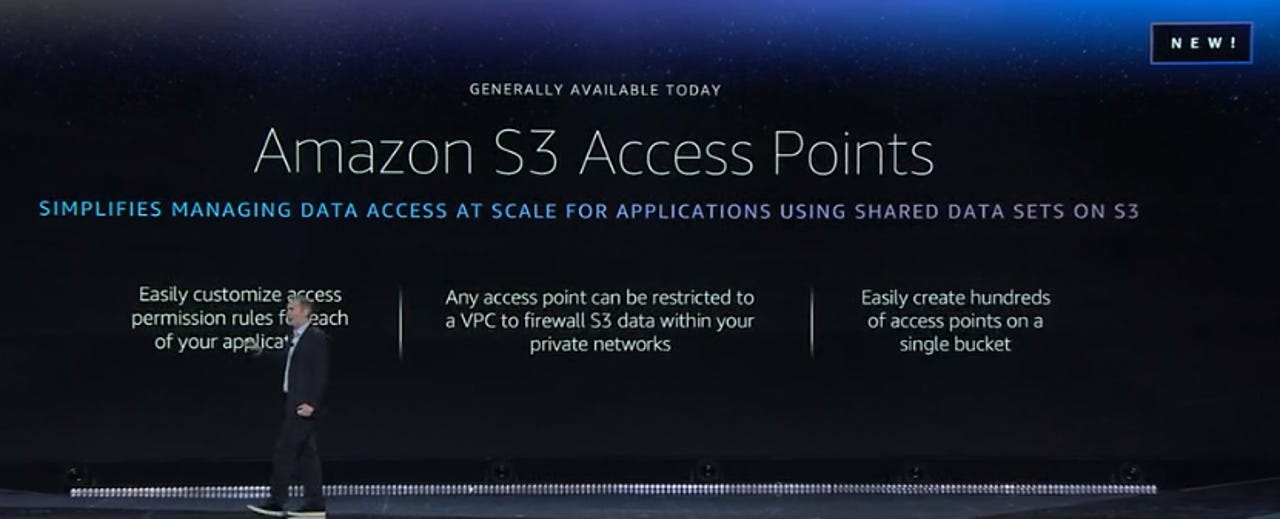

Amazon Web Services (AWS) has announced a way to manage shared data sets with Amazon S3 Access Points.

AWS re:Invent

Unveiled at AWS re:Invent on Tuesday in Las Vegas, AWS chief Andy Jassy said S3 Access Points allows users to customise access permission rules for each application.

He said the new service simplifies managing data access at scale for applications using shared datasets on S3.

"Access Points give you a customised path into a bucket," he said.

Any access point can be restricted to a Virtual Private Cloud to firewall S3 data within a private network, and Jassy said users can create hundreds of access points on a single bucket.

"S3 Access Points are unique hostnames with dedicated access policies that describe how data can be accessed using that endpoint," the company details in a blog post.

"Before S3 Access Points, shared access to data meant managing a single policy document on a bucket. These policies could represent hundreds of applications with many differing permissions, making audits, and updates a potential bottleneck affecting many systems."

"The amount of data we're trying to store and analyse is like nothing we've seen before," Jassy said. "This is a very different era."

Jassy said in a world where petabytes of data is being consumed, the new advantage is how much faster work can get done with the cloud.

"There are a few reasons why people are choosing S3 as their data lake base ... it's more reliable and scalable than anything else," Jassy said.

Redshift updated, announces AQUA

Paired with the S3 enhancements, Amazon announced updates to Redshift, its data warehouse. First, AQUA (advanced query accelerator) is a hardware-accelerated cache that runs on S3, bringing compute to the storage layer. It's completely compatible with the current version of Redshift, but it won't be available until mid-2020, Jassy said.

With AQUA, Redshift delivers up to 10X better query performance than any other cloud data warehouse solution, he said.

Jassy also announced the next generation of Nitro-powered compute instances for Redshift, RA3 instances, which enable customers to separately optimize compute power and storage. The ra3.16xlarge instances have 48 vCPUs, 384 GiB of Memory, and up to 64 TB of storage.

With this new managed storage model, if a customer has a workload that exceeds local SSD, the less-accessed data will be intelligently and automatically moved to S3. Meanwhile, if a customer isn't using all of their SSDs, they don't pay for it.

It's a "pretty significant enhancement for customers using Redshift," Jassy said.

UltraWarm (Preview) for Amazon Elasticsearch Service

Jassy also announced UltraWarm, which is being touted by the CEO as a fully managed, low-cost, warm storage tier for Amazon Elasticsearch Service.

UltraWarm is now available in preview and as Jassy said, it takes a new approach to providing hot-warm tiering in Amazon Elasticsearch Service. It offers up to 900TB of storage, at almost a 90% cost reduction over existing options, AWS claims.

With the launch of UltraWarm, Amazon Elasticsearch Service supports two storage tiers, hot and UltraWarm. The hot tier is used for indexing, updating, and providing the fastest access to data. UltraWarm complements the hot tier to add support for high volumes of older, less-frequently accessed, data to enable you to take advantage of a lower storage cost.

The UltraWarm preview is now available to all customers in the US East (N. Virginia) and US West (Oregon) Regions.

Amazon Managed Apache Cassandra Service

Jassy also launched in preview Amazon Managed Apache Cassandra Service (MCS), an Apache Cassandra-compatible database service.

Amazon MCS is serverless and the service automatically scales tables up and down in response to application traffic.

"You can build applications that serve thousands of requests per second with virtually unlimited throughput and storage," AWS said.

Amazon MCS implements the Apache Cassandra version 3.11 CQL API, allowing users to use the code and drivers already utilised within existing applications.

Asha Barbaschow travelled to re:Invent as a guest of AWS.