AMD launches MI100 GPU accelerator for high performance computing

AMD has launched what it claims is the fastest GPU accelerator for high performance computing, one able to top 10 teraflops of peak FP64 performance.

The AMD Instinct MI100 accelerator is supported in platforms from Dell Technologies, Gigabyte, HPE and Supermicro, and is paired with AMD's EPYC CPUs and the ROCm 4.0 open software platform. The MI100 is built on the chip maker's CDNA architecture.

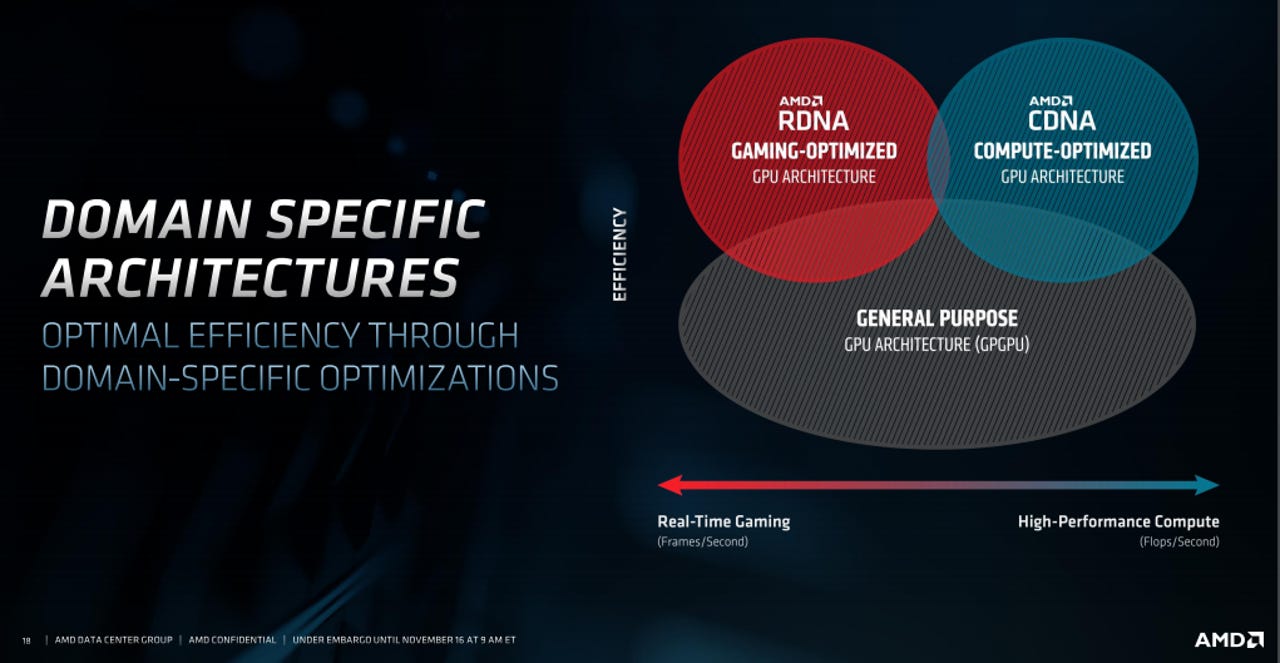

CDNA is AMD's compute-optimized GPU architecture, while its RDNA architecture is optimized for gaming.

For AMD, the GPU accelerator is another way to build on its data center and computing momentum. AMD's second-generation EPYC is performing well and gaining share against Intel's Xeon franchise, with the third generation on tap for the first quarter. AMD has been on a roll:

- AMD's $35 billion Xilinx purchase: Everything you need to know

- IBM, AMD to collaborate on HPC, AI, hybrid cloud, confidential computing

- AMD launches new series of Epyc processors for the enterprise

According to AMD, the combination of MI100 with EPYC produces up to 11.5 teraflops of peak FP64 performance for HPC and up to 46.1 teraflops peak FP32 Matrix performance for AI and machine learning workloads. What AMD is gunning for is a new class of workloads.
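For readers curious how peak figures like these come about, here is a rough sketch of the arithmetic. The article does not give the compute unit count (120), the stream processors per unit (64), the 1,502 MHz peak engine clock or the per-clock throughput factors. They are assumptions taken from AMD's published MI100 specifications. The results are theoretical peaks, not measured performance.

```python
# Rough peak-throughput arithmetic for the MI100.
# All hardware parameters below are assumptions from AMD's published
# specifications; none of them appear in the article itself.
COMPUTE_UNITS = 120            # assumed CU count
STREAM_PROCESSORS_PER_CU = 64  # assumed lanes per CU
PEAK_CLOCK_HZ = 1.502e9        # assumed peak engine clock (1,502 MHz)


def peak_vector_tflops(flops_per_lane_per_clock: float) -> float:
    """Peak vector throughput in teraflops for a given per-lane rate."""
    lanes = COMPUTE_UNITS * STREAM_PROCESSORS_PER_CU
    return lanes * flops_per_lane_per_clock * PEAK_CLOCK_HZ / 1e12


def peak_matrix_tflops(flops_per_cu_per_clock: float) -> float:
    """Peak matrix throughput in teraflops for a given per-CU rate."""
    return COMPUTE_UNITS * flops_per_cu_per_clock * PEAK_CLOCK_HZ / 1e12


# A fused multiply-add counts as 2 FLOPs; FP64 is assumed to run at
# half the FP32 vector rate, so 1 FLOP per lane per clock.
fp64_vector = peak_vector_tflops(1.0)
# FP32 matrix operations are assumed to deliver 256 FLOPs per CU per clock.
fp32_matrix = peak_matrix_tflops(256.0)

print(f"Peak FP64 vector: {fp64_vector:.1f} TFLOPS")
print(f"Peak FP32 matrix: {fp32_matrix:.1f} TFLOPS")
```

Under those assumptions the two results round to the 11.5 and 46.1 teraflops AMD quotes.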

Brad McCredie, vice president of AMD's Data Center GPU and Accelerated Processing business, said the Instinct MI100 effort is aimed at "the workloads that matter in scientific computing."

The AMD Instinct MI100 is also backed by a set of software tools. AMD's ROCm developer platform features compilers, APIs and libraries, and ROCm 4.0 is optimized for the MI100.

ROCm 4.0 supports OpenMP 5.0 and HIP, as well as the PyTorch and TensorFlow frameworks.

Key points:

- AMD's MI100 accelerator is aimed at scientific computing in life sciences, energy, finance, academia, government and defense.

- The MI100 provides about twice the peer-to-peer (P2P) peak I/O bandwidth of PCIe 4.0, with up to 340 GB/s of aggregate bandwidth per card.

- In a server, MI100 GPUs can be configured with up to two fully connected quad-GPU hives, each providing up to 552 GB/s of P2P I/O bandwidth for fast data sharing.

- The accelerator features 32 GB of high-bandwidth HBM2 memory at a clock rate of 1.2 GHz and delivers 1.23 TB/s of memory bandwidth to support large data sets.

- AMD's Instinct MI100 will be available in Dell Technologies, Gigabyte, HPE and Supermicro systems by the end of the year.
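The memory bandwidth figure in the key points can be roughly reproduced from the 1.2 GHz clock rate. This sketch assumes a 4,096-bit memory interface and double-data-rate transfers. Neither is stated in the article; both come from AMD's published MI100 specifications. The function name is purely illustrative.

```python
def hbm2_peak_bandwidth_gbs(clock_ghz: float,
                            bus_width_bits: int = 4096,
                            transfers_per_clock: int = 2) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    bus_width_bits and transfers_per_clock are assumptions (a 4,096-bit
    interface with double-data-rate signaling), not figures from the article.
    """
    bytes_per_transfer = bus_width_bits / 8
    return clock_ghz * transfers_per_clock * bytes_per_transfer


bandwidth_gbs = hbm2_peak_bandwidth_gbs(1.2)
print(f"{bandwidth_gbs:.1f} GB/s, or about {bandwidth_gbs / 1000:.2f} TB/s")
```

With those assumptions, 1.2 GHz works out to roughly 1,228.8 GB/s, in line with the 1.23 TB/s AMD cites.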