BI in 2013, the year the big boys contract terminal dis-ease?

The siren call of 'Big Data' (whatever the heck that means) has got a lot of people excited. Last year, SAP fanfared Business Warehouse on HANA as a way of massively compressing the run time for complex reporting. Vishal Sikka, executive board member at SAP, went so far as to create the 10,000 Club - an elite group of companies that have achieved a 10,000x performance improvement by using HANA. For BI specialists, the combination of in-memory and big data represents the confluence of two mega trends that play into a sweet spot they believe provides fertile ground for new projects.

I see things differently.

When I first started studying BI in 1997, it was with a view to arguing how business could rid itself of the spreadsheet. My thesis was simple: spreadsheets are prone to serious error, and specialised BI systems could eradicate that problem. Fifteen plus years on and we're still seeing massive spreadsheet use. BI, while valuable, has proven expensive and slow, and it often requires the services of an already stretched IT department to really make it sing.

I'd go so far as to say that there has been almost no innovation in this field since I first started reviewing Cognos, Hyperion, BOBJ, FX and a myriad of other players. Sure, we have speeds and feeds but duh?

As a side note, I would exclude SAS Institute from this band of rogues because they have been steadily but relentlessly concentrating on important use cases where the value is obvious - if expensive. Advanced fraud detection and prediction springs to mind.

Then I came across this post from Mico Yuk, a self-proclaimed SAP BI expert, who identifies five things that BI people need to do in 2013:

#1 Superior Communication Skills... no longer a NTH (nice to have)

#2 Think ‘Mobile First’ approach... or Don’t Build

#3 Deployments are either Rapid... or a Waste of Time

#4 Make ‘User Adoption’ your ONLY KPI to Measure Success

#5 Adopt Mobile, Cloud, and Big Data... they’re here to stay!

I'm not going to ding Mico for her enthusiasm; BI is her bread and butter and, from everything I hear, she is very good at advocating for customers. Instead, I wonder whether those who are deep in the trenches of BI -- whether SAP, Oracle or others -- have really thought through why BI has been such a Cinderella, despite its obvious allure? It's a question I have wrestled with many times, wondering why the spreadsheet remains so popular. The answer is always the same: the spreadsheet is accessible, readily understood and easy to manipulate.

And then I saw a solution that made me sit up.

The other day Amit Bendov, CEO of SiSense, and I had a long conversation about what his company is doing. SiSense is another of the many wannabe new generation BI companies that hope to displace the incumbents. Normally I avoid these pitches because they tend to be very similar: we do stuff faster, we have great visuals, we put business problem resolution into the hands of users...etc. I've heard it many times.

On this occasion I was happy to speak with Amit because I know his previous track record at Panaya and his solution came recommended. (A hint to those who would use this piece as a pitch launch: read this part very carefully before applying digits to keyboard.)

SiSense technology is interesting. Like many others, the company is leveraging the benefits of an in-memory column store database. They are also using disk because, in their experience with customers, some 80 percent of data is at rest and so doesn't need to be held in expensive memory. I'll sort of buy that. Amit then explained that they are seeing bottlenecks between CPU and memory, so they are optimising for that particular physical constraint on high speed operations.

But the proof has to be in the eating and this is where it gets interesting.

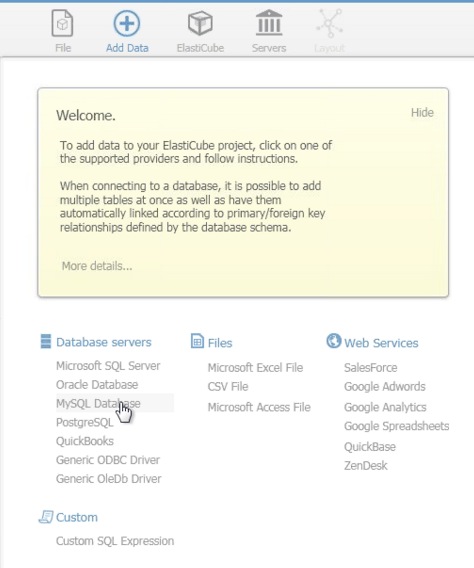

Amit ran a live aggregation of around 3.5 million HR records, from scratch, on a laptop with 8GB of RAM, streamed back to me over a GoToMeeting session. We went through data source selection, manipulation of what many will know as the star schema (all drag and drop/relate), and then ran the aggregation. The entire process took under four minutes. That's impressive, but in this day and age, two minutes for the processing bit is hardly exceptional. It turns out that their longest run time so far is around 50 minutes on a multi-gigabyte record store. Again, quick but not exceptional.

He then showed me a pivot table and pie chart, all created on the fly through drag and drop. The results were instant. Changes were near instant, as you would expect of something held in memory. They are not the most beautiful visuals I've ever seen but they were instantly consumable and capable of being shared with whoever is in your network on any device. That got my attention. See the image above.

From what I can discern, Amit's four-minute demo provides a solid response to all of Mico's assertions. But there is more.

Today, SiSense, along with many of the other new gen BI vendors, is playing at the lower end of the enterprise. They all have scale issues. However, as we walked through the customer list, which includes pieces of Target and Merck, it quickly became apparent that usage is shaking out this way:

- Strategic for small and medium sized businesses

- Tactical for large enterprise

The reason SiSense, along with many others, can appeal to so much of the market is:

- The price points are radically different to the incumbent solutions.

- The solution vendors are largely but not completely removing the ETL headache.

- Multiple data sources are not a problem - it's just more metadata.

However, this won't be the case forever. Amit acknowledged ETL and clean data remain important topics but he asserts it is only a matter of time before the new vendors figure out how best to overcome current limitations. He believes SiSense will be capable of taking on all but the very biggest workloads in less than three years. I'll park that for progress updates.

I would argue that what we will see in the next two to three years is something like this as it relates to the new gen vendors:

SMBs will use BI to help:

- Grow their markets through blended intelligence derived from public and private sources, surfaced via vendor-provided integrations

- Attack cost problems associated with small scale through better understanding of buy patterns

- Pro-actively manage flexible workforces to achieve optimal business performance based upon predictive analytics

- Do basic reporting

Large enterprise will use BI to:

- Work on business critical problems which today are considered tactical but take on increasing importance as value is proven

- Collapse time to decisions through the application of ubiquitous information sharing via in-memory based solutions that front end with HTML5

- Continue to see the incumbent players as providers of reporting for systems of record but de-emphasize their importance

- Actively look for lower cost but more flexible solutions of the kind offered by the new gen players

The impact on the incumbents playing to large business is obvious but worth stating:

- Compliance-based reporting will remain a critical activity but become commoditized. Buyers will consolidate their investments in systems upon which they have to report, growing their landscapes but using that growth as a lever to obtain better terms from vendors.

- High speed reporting will become commonplace, so acceleration, today's inherent advantage, will rapidly lose its value as something that can be sold as innovation.

- The need for new classes of analytics with the emphasis on predictive modelling across vertical markets will force vendors to either invest directly or acquire the necessary expertise to remain competitive.

- Even though the proliferation of data, aka big data, will be hyped as a reason to invest, customers will increasingly question why they need to pay more when application volumes rise. The vendors in turn will need to prove value in order to obtain the big bucks. This will be much harder than they think as the smaller vendors catch up, and possibly overtake, on capability and on scaling up and out.

- There will be a need for solutions that are able to sift through mountains of data very quickly, reducing the problems and cost associated with hefting petabytes of data.

- Saying you can shift zettabytes (or whatever new word is invented) will not be a badge of honor. It will be a signpost to huge cost -- one that customers will avoid in all but the most extreme use cases.

Concluding comments

My concern with the new gen players is that they won't have the market scale to avoid being crushed by the incumbents. Amit has a stretch goal of reaching $100 million in revenue in a few years' time. That is a fraction of what Oracle, SAP and others earn from this source today. However, we could see the collapsing of prices as the new gen players bite into the Fortune One Million and become market leaders by volume if not revenue.

As far as I can tell, vendor-specific BI specialists are so fixated on their vendor that they are failing to see the new gen threat. It is all very well talking about adoption as job no.1, as Mico does; but if I can be sold in a five to ten minute demo then what chance do the big boys have with all the complexity they bring to the table? This is a massive risk for big players and their fans.

In short: many of them need to get out more often and see what's happening in the wider world. Studying the customer lists and use cases of the SiSenses of this world might be a good start.