Cloud security: Why these solutions aren't ready for prime time

Most technology news is about the production of some device, program, or platform that someone considers a success. The exceptions typically come from the security department, where we often hear about the threat, the attack surface, the soft perimeter, the malicious actors, and the unheeded warnings.

Rarely in our field do we get the opportunity or good fortune to cover the story of a technology in its embryonic state -- one that its promoter has yet to officially deem a "solution." Until recently, there hasn't been a proper format for these stories.

The search for the perimeter in cloud security, and the debate over whether there should be such a thing at all, leaves several open questions, each of which suggests that these solutions are not ready for prime time. Here are just three:

Must identity be universal to be effective? With respect to ease of implementation, it would be much simpler for engineers to limit fully sourced, certificate-bound identity, with guaranteed uniqueness plus the identity provider's signature, to human-based accounts only. And that would just about wrap up the whole discussion of identity for entities, were it not for the possibility that a malicious user would "impersonate" (except that wouldn't be the right word) a non-human account to avoid all the trouble of hacking a human one. Conversely, if all entity identities were bound tightly to personal entries from a company directory, such as one accessed through LDAP, then what would stop an administrator of a system full of microservices from attributing everything in her platform to herself personally? Everything, all over the platform, all belonging to one person. It would be a workable system, but like a Mac that you can commandeer without a password, what would be the point?

To whom or to what should a cloud-based "serverless" function belong? You may be familiar with Amazon's Lambda, Microsoft's Azure Functions, and Google Cloud Functions -- public cloud-based staging grounds for small programs designed to be contacted through API calls only. By design, they're supposed to be "decoupled" from the system that calls them. So on this perfectly auditable platform that architects are envisioning, when a function is effectively unplugged from the server and infrastructure that supports it, how should it signal its identity? And if, for the sheer convenience of the developer, it does not signal an identity at all, then are we not creating at least the target area for a new attack vector?

"Machine identity" means what in a multicloud scenario, exactly? If, as CoreOS CTO Brandon Philips suggests, the orchestrator of microservices should take account of the identities of the servers and processors hosting workloads, then what happens -- or rather, what should happen -- when a portable workload is actually ported? Do the identities of those workloads get altered or revised when their roots of trust (which is how a TPM-based platform would perceive them) are uprooted and transplanted?

Why do these open questions matter? Because the security of systems is irrevocably tied to the sources of the programs they run. It's impossible for developers or operators to track down a bug whose source they cannot locate. And if the identity of an account can be duplicated, or if its authenticity may expire suddenly and blink back to life like a microservice, then it isn't truly an identity but just a tag or an attribute.
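The difference between an identity and a mere tag can be made concrete with a small sketch. The Python below is illustrative only: the names `issue_identity`, `verify_identity`, and `IDP_KEY` are hypothetical, and an HMAC from the standard library stands in for the asymmetric signature a real identity provider would apply to a certificate. It assumes the properties named earlier, guaranteed uniqueness plus the provider's signature, and shows that a copied or unsigned label fails verification.

```python
import hashlib
import hmac
import json
import uuid

# Hypothetical secret held only by the identity provider. A real provider
# would sign with an asymmetric private key; HMAC is a stdlib stand-in.
IDP_KEY = b"demo-only-secret"


def _sign(claims: dict) -> str:
    # Canonical JSON so the same claims always yield the same signature.
    message = json.dumps(claims, sort_keys=True).encode()
    return hmac.new(IDP_KEY, message, hashlib.sha256).hexdigest()


def issue_identity(name: str, kind: str) -> dict:
    """Issue a unique, signed identity for a human or non-human entity."""
    claims = {"id": str(uuid.uuid4()), "name": name, "kind": kind}
    return {"claims": claims, "signature": _sign(claims)}


def verify_identity(identity: dict) -> bool:
    """Return True only if the provider's signature matches the claims."""
    expected = _sign(identity.get("claims", {}))
    return hmac.compare_digest(expected, identity.get("signature", ""))


# A non-human entity receives the same kind of identity a person would.
service = issue_identity("billing-function", kind="service")

# Reusing the signature under a different name does not verify.
forged = {
    "claims": dict(service["claims"], name="payroll-function"),
    "signature": service["signature"],
}

# A bare label with no signature is just a tag.
bare_tag = {"claims": {"name": "billing-function"}}

print(verify_identity(service))   # True
print(verify_identity(forged))    # False
print(verify_identity(bare_tag))  # False
```

Nothing in this sketch answers the harder questions above, such as what should happen to a workload's identity when it moves between clouds; it only shows the minimum an attribute needs before it can be audited as an identity.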

But there's also this: A system is not just the product of the people who develop and maintain it, but a symbol of their efforts and ingenuity. Too often we discuss security in the context of preventing wrongdoing and resolving error. In any organization that deserves the moniker "enterprise," a system is a concerted effort of human beings. All technology is the culmination of human effort. To the extent that we cleanse a technology of any evidence that its creators and maintainers are indeed people, we do more than just render it non-auditable. We eliminate any feeling we should have that it belongs to us, rather than the other way around.

Most movies and novels that speculate on the evolving nature of artificial intelligence and (though they don't call it by this name) distributed systems hypothesize that a system that gets the bright idea of conquering humanity must first attain the sentience of a human being. That's the wrong direction entirely. Any A.I. smart enough to deduce a workable strategy for the defeat of humankind will have thoroughly digested the history of every minute of the year 2017, and will conclude that the secret ingredient is indeed inhumanity. The less like people it acts, the better chance it has.

Put another way: The more of ourselves we see in the great things that we build and accomplish, the more secure we will be in how we use them.

PREVIOUS AND RELATED COVERAGE

Searching for the perimeter in cloud security: From microservices to chaos

Where we encounter the problem of applying the most modern security model we have to the most capable data centers we run and discover the two may come from different eras.

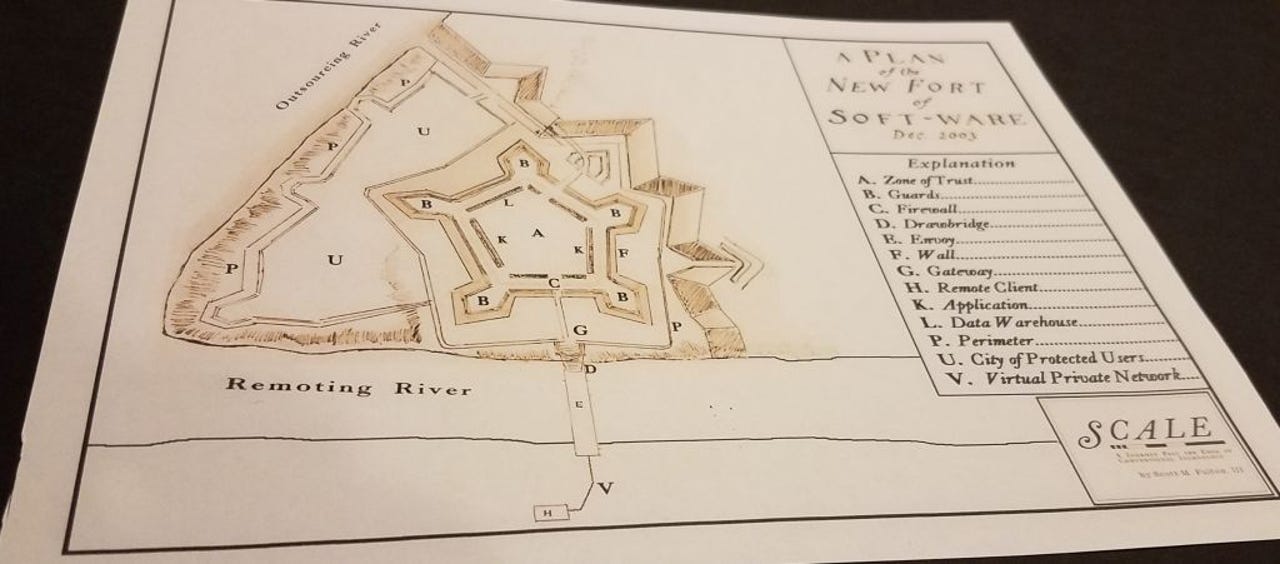

Micro-fortresses everywhere: The cloud security model and the software-defined perimeter

Microservices and the invasion of the identity entities

Where we apply the solution of unimpeachable identity to the new realm of microservices, and grapple with the strange philosophical dichotomies we create in the process.

Machine learning and the spectre of the wrong solution

Where a beautiful relic of history stares us in the face to remind us that if we take all the time in the world to render our most perfect ideas, reality will leave us in the dust.