Data in AWS cross hairs: How the cloud giant is gunning for more enterprise workloads

Amazon Web Services (AWS) made it clear this week that for companies large or small, the biggest asset is data. It's something AWS says it will focus on heading into 2021.

The cloud giant's CEO Andy Jassy spent his re:Invent keynote running through announcement after announcement centred on what an organisation could achieve with data. While clean data is good for customers, AWS is betting that data movement between storage, database, and analytics workflows will be the secret sauce for gaining more workloads from legacy players.

"To have the ability to move data from data store to data store is transformational," Jassy said.

Jassy said that in the last few years alone, more than 350,000 databases have migrated to AWS using its database migration service or schema conversion tool. What has been missing until now, he said, is a way to deal with the application code that is tied to a proprietary database.

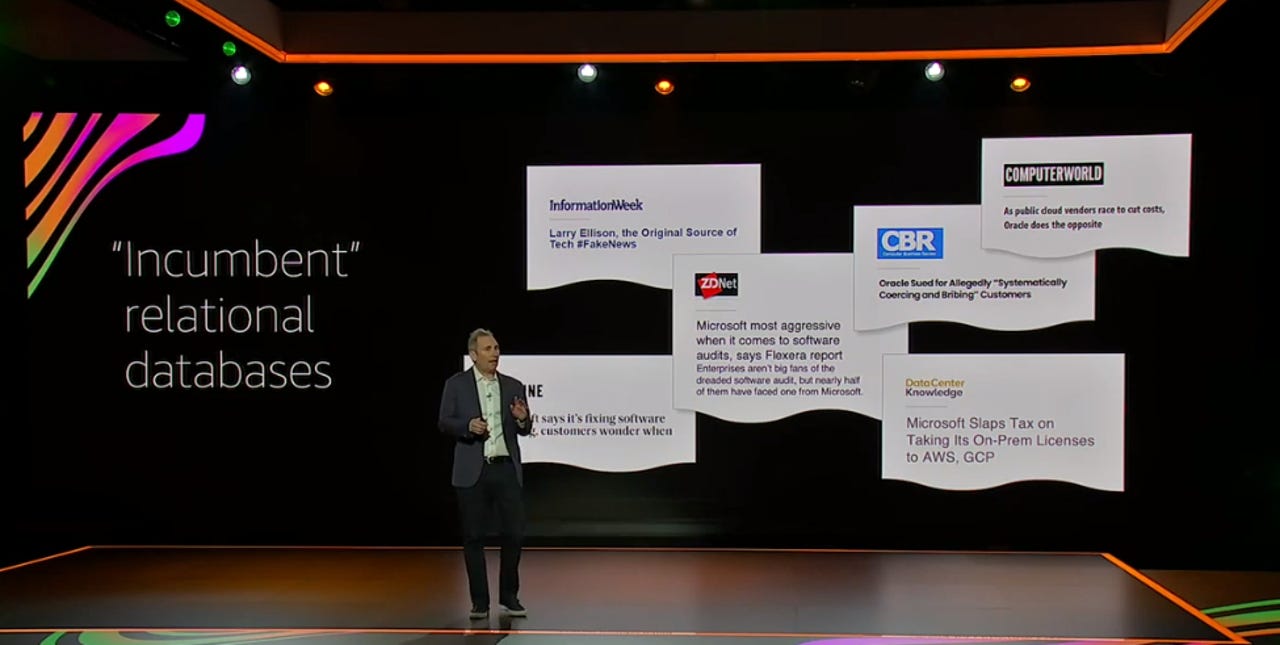

"Especially as they've watched Microsoft get more punitive, more constrained, and more aggressive with their licensing," Jassy said.

AWS is open-sourcing Babelfish for PostgreSQL, an Apache-licensed project that acts as a new translation layer for PostgreSQL. This means that PostgreSQL can now understand commands from applications written for Microsoft SQL Server without the developer having to change database schema, libraries, or SQL statements.
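To give a sense of the dialect gap such a translation layer has to bridge, here is a toy sketch in Python. It is not how Babelfish is implemented, and the `tsql_to_postgres` helper is hypothetical; it simply rewrites three well-known SQL Server idioms (`SELECT TOP n`, `GETDATE()`, and bracket-quoted identifiers) into their PostgreSQL equivalents, the kind of change developers would otherwise have to make by hand.

```python
import re

# Toy illustration only: this is NOT Babelfish's mechanism, just a demonstration
# of a few syntax differences between T-SQL (SQL Server) and PostgreSQL.


def tsql_to_postgres(query: str) -> str:
    """Rewrite a few simple T-SQL idioms into PostgreSQL syntax (hypothetical helper)."""
    q = query.strip().rstrip(";")

    # [identifier] -> "identifier" (naive: would also touch brackets inside string literals)
    q = re.sub(r"\[([^\]]+)\]", r'"\1"', q)

    # GETDATE() -> NOW()
    q = re.sub(r"\bGETDATE\(\)", "NOW()", q, flags=re.IGNORECASE)

    # SELECT TOP n <rest> -> SELECT <rest> LIMIT n
    m = re.match(r"SELECT\s+TOP\s+(\d+)\s+(.*)", q, flags=re.IGNORECASE | re.DOTALL)
    if m:
        q = f"SELECT {m.group(2)} LIMIT {m.group(1)}"

    return q + ";"


if __name__ == "__main__":
    print(tsql_to_postgres("SELECT TOP 5 [name], GETDATE() FROM [orders] ORDER BY [id];"))
```

Run against the sample query, the sketch moves the row limit to the end of the statement, swaps the date function, and converts the identifier quoting. A real migration involves far more than this, including data types, stored procedures, and the wire protocol, which is the work the article says developers would no longer need to do themselves.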


AWS expects the new translation capability to bring more workloads onto its cloud.

"Customers that we've spoken to privately about this, to say that they're excited would be one of the more glorious understatements I could make," he said. "People really want the freedom to move away from these proprietary databases."

Jassy said his company has a different way of thinking about its business when compared to more traditional database companies, which is that it's "trying to build a set of relationships" and "listening to what customers care about".

"If we help you build more, more efficiently, more effectively, change your experience for the long term, even if it means short term pain for us, or less revenue for us, we're willing to do it because we're in this with you for the long haul and I think that's one of the reasons why customers trust AWS in general and trust us in the database space over the last number of years," the CEO said.

"This is a huge enabler for customers to move away from these frustrating, old guard proprietary databases."

With a trove of new services added to the AWS portfolio this year, there seems to be no other option than for an organisation to bring its data across if it wants to access what AWS can offer.

AWS hosts a lot of the world's data and many new companies founded over the last decade have taken advantage of AWS' free storage offerings and low-cost additions, building their business from day one on the AWS cloud. But as more and more enterprise customers jump on board, more and more enterprise workloads have found themselves hosted on AWS.

The strategy, AWS said, is centred on value to the customer. The company's official proposition is to help enterprises with migration and modernisation, data, and customer experience.

In Australia, AWS customers include four of the five largest banks, mining and resources firms, and retail players. This means it services many of the country's largest companies by market cap.

"That's all about moving the applications up and modernising either the software that they're running or using the API platform to help those applications interact with more modern mobile apps," AWS Australia and New Zealand managing director Adam Beavis told ZDNet, explaining how many large local enterprises use AWS.

"2021, definitely the year of data," he said. "And what's driving that is customers really wanting to be able to make more informed decisions to get a bit of insight from their data, those disparate systems -- you've got ERP and CRM and IoT data and operational data coming in, having customers start to centralise that to be able to then make more insightful decisions for their customers."

Then finally, once the data is up there, the trend of these larger enterprises is to think about how to drive a better customer experience, Beavis said.

The pitch to customers is to first talk about efficiency and how they can use data to become more efficient for their customers. The second is experimentation and the third is expanding and continuing to learn.

"We're starting to see organisations better understand the importance of being able to use data and make those decisions quicker … getting the data up to help them to start experiment because trying to do some of these things on premise years ago was much more difficult, trying to do these things on traditional data warehouses is much more difficult," he said.

"One, the ability to be able to scale it, but two, even the cost of experimenting. A lot of these experiments can be quite small, and if they work, fantastic, they've become innovation within the business. If they fail, you turn it off -- try something different without that big sunk heavy investment."