Fixing IT in the cloud computing era

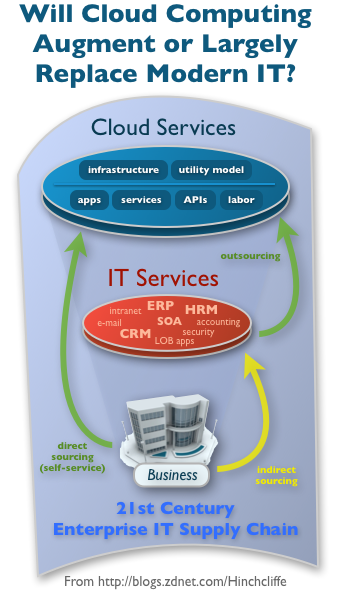

Both businesses and workers are now faced with real alternatives to how they acquire IT solutions. The reality of cloud computing as it exists today already offers significant potential to IT departments that want to cut costs, lighten their infrastructure footprint, and adopt agile new technologies. Whether it's private clouds or public ones, all signs point towards cloud computing being one of the top new approaches for enterprise IT for 2010.

It's also not inconvenient that this is coming right at a time when traditional enterprise models for IT have come under increasingly sharp criticism for failure to perform, including most recently SOA and just about any kind of "cathedral-style" enterprise project these days.

Unfortunately, actual change isn't necessarily in the air yet; it's mostly still on the edge at the moment as actual disruption just starts to build up. I'm still seeing little inclination on the ground to change today's enterprise IT habits despite mounting evidence that they need to. But that may be about to change, one way or another. Cloud computing is increasingly in a position to be a game-changer both as a key departure point and a line of demarcation between the old and potentially new worlds of information technology in the 21st century.

At this point there's little mistaking that cloud computing itself is a truly potent convergence of SaaS, virtualization, and outsourcing, combined with a pragmatic new utility mindset. Thus it has rapidly emerged on the short lists of businesses looking for ways to improve how they operate their IT infrastructure and applications.

However, as we'll see shortly with some of the latest industry discussions, we seem to be at a major fork in the road: Will cloud computing "merely" help organizations achieve economic, efficiency, and waste avoidance goals, or can it successfully drive even more strategic objectives like effective IT/business alignment, genuine business agility, and the achievement of meaningful new levels of innovation?

Strategic astronautics or hard-headed business?

Some might say that there's an even more fundamental question to be answered in this discussion:

Will cloud computing help businesses acquire fundamentally better IT solutions? Ones that solve their real problems in a timely fashion and without budget-breaking and career-impacting delays and risks? There is some urgency to this question not just because businesses are trying particularly hard to perform better or even just recover in the current economic climate. For the first time (or second time if you count the original personal computer revolution), both businesses and workers are now faced with real alternatives to how they acquire IT solutions.

One emerging force at the moment is so-called shadow IT. This is an increasingly common phenomenon in today's workplace and represents up to 15% of IT already in some organizations according to Forrester. This often nearly free and surprisingly high-quality IT has become available from a vast number of sources lately, particularly through online and mobile channels. These tools frequently can't be dealt with adequately using current compliance strategies and are becoming a significant presence in many organizations.

Related: The cloud computing battleground shapes up. Will it be winner-take-all?

In this way, the discrete fork in the road between cost/efficiency and strategic opportunity might now only exist as a concept in centralized IT planning as cloud apps do an end-run. In truth, one of the cloud's biggest implications is that it is enabling (and "empowering" if you like) anyone to self-service with the software, business solutions, infrastructure, and other computing services that they need, more easily and readily than any other source, including their own IT department.

This poses a dire quandary for IT planners and strategists: Join 'em or fight 'em? At the same time it can be truly enabling for business users even if, at least according to some, it also puts them on a slippery slope towards potential technology anarchy. The point: Businesses are often the ones reaching out for IT solutions that are increasingly formulated for their direct consumption as a service.

Is it about people or the cloud?

Fellow ZDNet blogger Phil Wainewright made some important points on this topic, which he is starting to call People-Oriented Architecture, earlier this week in "People-centric IT for a new decade":

I believe we’re at a breakthrough point precisely because technology has matured to the point that it’s flexible enough to be adapted to what the people who use it are trying to achieve — to empower them to refine the automation and processes that help them fulfill their roles as effectively as possible. We no longer have to ask people to change their processes to fit in with the demands of punch card runs or application stovepipes or implementation and upgrade cycles. We now have information technology that’s sophisticated enough to fit in with how human beings work and behave, and that’s why people-centric IT is a defining theme for the new decade, requiring information technologists to develop new skills and ways of working that deliver results the people demand.

I've been bullish on people-guided IT for several years now with concepts like end-user mashups and other self-service software models such as today's highly configurable and easily customizable SaaS applications, especially the new business-focused peer production models like Social CRM in which people are the app. So Phil's point is important here because all of these trends are embodied in today's self-onboarding, "instant-on", open, data-portable, viral, social, and disruptively unblockable cloud services. Not all cloud computing systems have these attributes, of course, but they tend to be based on often hard-to-govern Web (and increasingly mobile) technologies and design models.

You can broadly call this the consumerization of IT or the democratization of the enterprise, but the on-demand, utility model of computing combined with the seamless presence of today's Internet within most organizations poses a clear challenge to traditional IT. It also points clearly to a -- likely irreversible -- slide into decentralized, lightweight, Web-oriented IT and what some are now calling emergent architecture.

Tim Bray: We're doing it wrong

As I mentioned above, the discussion of how to get enterprise systems to meet business needs is heating up. Tim Bray, a highly respected industry thinker and co-inventor of XML, wrote a widely discussed post this week about "Doing it Wrong", which paints a fairly bleak picture of how well the traditional, top-down model of creating IT systems (doesn't) actually work:

Enterprise Systems, I mean. And not just a little bit, either. Orders of magnitude wrong. Billions and billions of dollars worth of wrong. Hang-our-heads-in-shame wrong. It’s time to stop the madness.

These last five years at Sun, I’ve been lucky: I live in the Open-Source and “Web 2.0” communities, and at the same time I’ve been given significant quality time with senior IT people among our Enterprise customers.

What I’m writing here is the single most important take-away from my Sun years, and it fits in a sentence: The community of developers whose work you see on the Web, who probably don’t know what ADO or UML or JPA even stand for, deploy better systems at less cost in less time at lower risk than we see in the Enterprise. This is true even when you factor in the greater flexibility and velocity of startups.

This is unacceptable. The Fortune 1,000 are bleeding money and missing huge opportunities to excel and compete. I’m not going to say that these are low-hanging fruit, because if it were easy to bridge this gap, it’d have been bridged. But the gap is so big, the rewards are so huge, that it’s time for some serious bridge-building investment. I don’t know what my future is right now, but this seems by far the most important thing for my profession to be working on.

It's a pretty damning portrait of the state of the profession and one that CEOs, CIOs, and other executive leaders will not-so-eventually take note of as they look for better ways to run their businesses. But Tim's point is right on: "the best thing, of course, is to simply not build your own systems." Just about any numbers you consult will show that businesses just aren't very good at solving problems with their own software. My point here is Tim's observation that the cloud is a key direction in which the industry is (rightly) headed, and one that will help address all this.

ZDNet's own Michael Krigsman, a world-renowned expert in IT project failures, covered this topic today as well in the "Enterprise 2.0 Conundrum":

Technology is not the problem. These problems are not technical matters, but arise from preconceived notions, deeply embedded work processes, and heavily invested expectation mismatches between IT and the lines of business they serve.

Michael goes on to ask whether we are hitting upon "seemingly-intractable problems that run deep into an organization’s technology, culture, and economics" that have kept the status quo of IT and business the way it has been for decades now. I think the question has begun to answer itself, with the Web having become the most effective "proving ground" for new approaches to software as well as the world's largest R&D laboratory. As I've pointed out a number of times with SOA in the past, models emerging from the Web are proving more effective. This has been the case with software development (open source), Web development (productivity-oriented platforms), Web 2.0, open business models, and other areas.

The big issue of course is that the Web is not the enterprise. While cloud computing offers a landscape in which Web-sourcing of just about anything is not only possible but often a much more compelling alternative, the reality is that there is a major divergence between the way many enterprises work and the way that the Web works best. It's not going to work to directly transplant many of the effective processes of Web development and architecture to the enterprise.

So I fully agree with Phil that lack of people-orientation is a significant cause of many IT failures and I concur with Michael that cultural issues often form a surmountable roadblock. Tim is also right on the mark that we're doing enterprise systems wrong. But these statements, as useful as they are, are diagnoses, not solutions. And, like so many things in software these days, the Web is stepping into the vacuum.

Can the cloud really fix IT?

The cultural and organizational institutions in most large enterprises are so embedded that change is not likely to happen from within, at least in the short term. Much more likely, as in any competitive environment (which, by the way, internal IT has not operated in up until now), it will be external disruption that most often forces change. This is the most likely manner in which the cloud, which is itself decentralized, will act as a collective force to drive change: by introducing direct competition for IT within most businesses (and, just as interestingly, government as well). This will take place both through Shadow IT coming in the back door and through departments and divisions voting with their budgets for official cloud solutions that are much more easily acquired, consumed, and customized than classical enterprise IT solutions.

But it won't be as simple as a straightforward transition from build to buy-from-the-cloud. As I said in "How the Web OS has begun to reshape IT and business", organizations will have to change their DNA as all businesses get more digital. But the buy model in the form of PaaS and SaaS will let them acquire some new 21st century-ready DNA quickly, at least below the waterline. And while aggressive adoption of agile enterprise processes, an enlightened revamping of enterprise architecture, or other stop-gap measures might offer some short term hope, the reality is that what Salesforce, Amazon, Google, and others are increasingly offering is the most compelling IT/business destination.

Related: Eight ways that cloud computing will change business.

This is a future in which whatever business solution you need is already in the cloud running with best practices, always up-to-date, always backed up, always pliable, and (usually) interchangeable, not to mention cheap and feature-rich due to intense competition. In this future, enterprise architects truly become business architects and business people become their own IT experts. Some of this is already here, though much of it is not, and there are certainly many issues to be worked out. But the writing is increasingly on the wall that this is the future of IT in the cloud computing era.

Will cloud computing transform your IT department or get rid of it? Your feedback is encouraged below in Talkback.