Google adds the last piece to its cloud database stack

Google takes the wraps off, announcing a public beta for the new Cloud Spanner database service. (Image: Google/Usenix.org)

Over the past 18 months, Google has transformed itself from sleeping giant to long-term challenger to the likes of AWS and Azure in the cloud. And last summer, Google rolled out key pieces of its data platform, including several SQL and NoSQL databases.

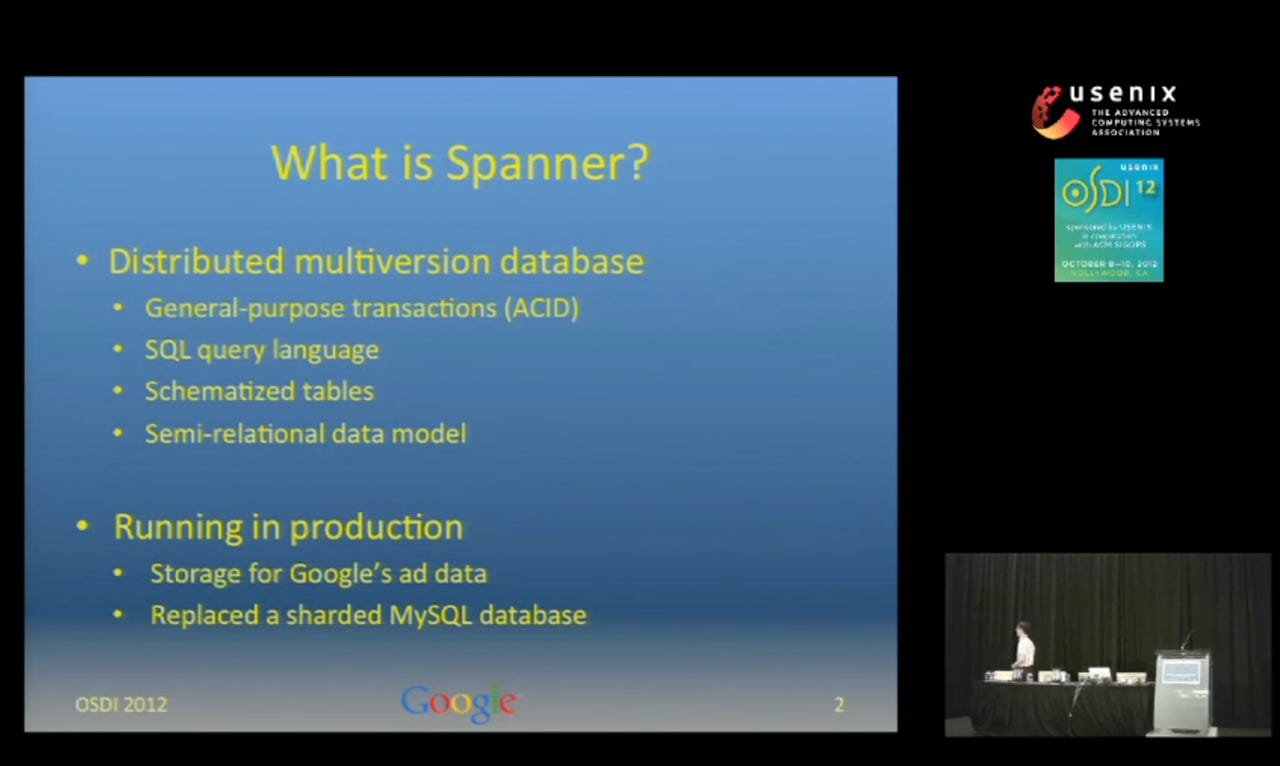

Now, Google has added the last piece of the puzzle. Significantly, it is one that is not (yet) duplicated by AWS or Azure: Google Cloud Spanner. It's one of those legendary Google platforms that the public has so far known only through published research papers. It provides the underlying distributed transaction processing platform for the F1 relational database that powers the core of Google's revenue machine: AdWords.

It's been rumored for a while. This week, Google takes the wraps off, announcing a public beta for the new Cloud Spanner database service. It will provide a globally distributed cloud-based transactional database with SQL support and strong consistency, which blows out in scale anything else currently on the market. It is the same Spanner database that Google has described in research papers, but now with an API accessible through the Google Cloud Platform (GCP). While Google is pitching it for use cases such as global database consolidation, our take is that in the short run it's best suited for new generation use cases that are being disrupted by data that lives in the cloud, such as global supply chain management.

Cloud Spanner takes its place alongside Cloud SQL for more modest ecommerce deployments; Cloud Bigtable as the key/value NoSQL database for large scale reads and writes; Cloud Datastore as the document database for user profiles and online gaming; BigQuery for data warehousing; and Cloud Dataproc for Hadoop and Spark. For the most part, Google's database portfolio lines up with those of AWS and Microsoft Azure, but Cloud Spanner is, literally, the big exception. Of course, the key question for Cloud Spanner is whether it makes sense for companies that do not operate at Google's scale.

A hard nut to crack

The trick with distributed transaction databases is balancing the conflicting needs for keeping records consistent (the "C" of "ACID") while also keeping the database available. Normally when you update a record, you must lock it to avoid two (or more) conflicting updates coming in at once, cancelling each other out and/or corrupting the data.
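The locking idea above can be illustrated on a single machine. The following is a minimal sketch, not a database implementation: `Account` and `run_deposits` are hypothetical names invented for this example, and an in-process `threading.Lock` stands in for the record lock a transactional database would take.

```python
import threading


class Account:
    """Hypothetical in-memory record; the lock plays the role of a row lock."""

    def __init__(self, balance):
        self.balance = balance
        self._lock = threading.Lock()

    def deposit(self, amount):
        # Hold the lock for the entire read-modify-write so that two
        # concurrent updates cannot read the same old value and overwrite
        # each other's result.
        with self._lock:
            current = self.balance
            self.balance = current + amount


def run_deposits(account, workers=8, per_worker=1000):
    """Apply many one-unit deposits from several threads at once."""

    def work():
        for _ in range(per_worker):
            account.deposit(1)

    threads = [threading.Thread(target=work) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return account.balance
```

With the lock in place, every deposit is counted. The cost is that writers queue up behind one another, which is the availability side of the trade-off, and that cost grows once the lock has to be coordinated across machines rather than threads.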

That means several things. First, the most common use cases for distributed databases have involved either operational scenarios (e.g., updating user profiles, managing live gaming interactions), where ACID requirements are not so steep, or analytics, which won't have the issue of transaction updates.

Secondly, transactional database platforms have emerged to deal with the distributed challenge. There was Oracle RAC (Real Application Clusters), which relied on a multi-tiered architecture involving a cluster manager. Then came a wave of NewSQL databases that provided varying approaches to maintaining consistency. More recently, sharding became popular with NoSQL data stores such as MongoDB, and SQL transactional databases such as Amazon Aurora and Oracle. With sharding, you're not necessarily distributing the database per se, but partitioning tables across storage arrays to improve access and performance across one or more instances.
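To make the partitioning idea concrete, here is a minimal sketch of hash-based sharding. It is a generic illustration and is not claimed to match the scheme used by any of the products named above; `shard_for`, `partition`, and the `id` field are assumptions made for this example.

```python
import hashlib


def shard_for(key: str, num_shards: int) -> int:
    """Map a row key to a shard number using a stable hash."""
    # hashlib gives the same result in every process, unlike the built-in
    # hash(), which is randomized per interpreter run for strings.
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def partition(rows, num_shards):
    """Split rows (dicts with a hypothetical 'id' key) into per-shard lists."""
    shards = [[] for _ in range(num_shards)]
    for row in rows:
        shards[shard_for(row["id"], num_shards)].append(row)
    return shards
```

A lookup by key only has to touch the one shard that `shard_for` names. The weakness of this naive modulo scheme is that changing `num_shards` remaps most keys, which is one reason manual resharding is so painful.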

Big and Consistent

Spanner changes our assumptions about scale, such as that transaction databases can't grow as large as analytic ones. While we may have become jaded to the idea of Hadoop clusters running thousands of nodes, Spanner's global scale is mind-boggling: It can run on up to millions of nodes across hundreds of data centers, handling up to trillions of rows. And, by the way, it's designed to run 24/7.

Google is positioning Spanner as a fully managed, mission-critical relational database service with five-nines (99.999%) availability that will deliver strong consistency and global scale. While Google in its research paper described Spanner as a semi-relational database with a SQL-like query language, the commercial offering will be mostly ANSI SQL 2011 compliant. There will also be access through client libraries in languages such as Java, Python, Go, Node.js, and Ruby, and there will be a JDBC driver for integrating with third-party BI and data integration tools.

The origins of Spanner should sound familiar: Google developed it when its implementation of MySQL ran out of gas. When it reached tens of terabytes, Google engineers had to manually shard the database -- the last such effort took a couple of years. While Google also had Bigtable, a NoSQL database from which HBase was later derived, it wasn't designed for global ACID compliance.

Spanner got over that hump with automated sharding and replication that would balance storage of data globally. While Spanner automates placement of data, applications can control where the data physically goes. That is critical for global databases where data sovereignty laws require data to be stored inside national borders or for scenarios where you want to control data locality to optimize performance.

Like many distributed transaction databases, it uses a form of the Paxos consensus protocol: replicas reach consensus on which node becomes the leader (the one that has the authority to commit the change), and commits go through that leader. Google claims that its Paxos implementation is faster than most open source implementations available in the wild.
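For readers who have not seen Paxos, the sketch below shows the textbook single-decree form of the protocol applied to picking a leader. It is an in-memory teaching example with no networking, failures, or leases, and it says nothing about how Google's implementation works; `Acceptor`, `propose`, and the node names are assumptions made for illustration.

```python
class Acceptor:
    """One replica's voting state in single-decree Paxos."""

    def __init__(self):
        self.promised = -1          # highest proposal number promised so far
        self.accepted_n = -1        # number of the proposal last accepted
        self.accepted_value = None  # value of the proposal last accepted

    def prepare(self, n):
        # Phase 1: promise to ignore proposals numbered below n, and report
        # any value this acceptor has already accepted.
        if n > self.promised:
            self.promised = n
            return True, self.accepted_n, self.accepted_value
        return False, -1, None

    def accept(self, n, value):
        # Phase 2: accept unless a higher-numbered promise has been made.
        if n >= self.promised:
            self.promised = n
            self.accepted_n = n
            self.accepted_value = value
            return True
        return False


def propose(acceptors, n, value):
    """Try to get `value` chosen; return the chosen value, or None on failure."""
    majority = len(acceptors) // 2 + 1
    replies = [a.prepare(n) for a in acceptors]
    granted = [(acc_n, acc_v) for ok, acc_n, acc_v in replies if ok]
    if len(granted) < majority:
        return None
    # If any acceptor already accepted a value, the proposer must adopt the
    # one with the highest proposal number instead of its own.
    prior_n, prior_value = max(granted, key=lambda reply: reply[0])
    if prior_n >= 0:
        value = prior_value
    acks = sum(1 for a in acceptors if a.accept(n, value))
    return value if acks >= majority else None
```

With five acceptors, a first proposal for `"node-A"` is chosen. A later, higher-numbered proposal for `"node-B"` also returns `"node-A"`, because a value that a majority has accepted can never be replaced. That property is what lets all replicas agree on a single leader.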

The secret sauce of Spanner is Google's TrueTime API, the global wall clock with operations that are too detailed to review here (the Spanner research paper will tell you plenty). This is what keeps Spanner in sync. Suffice it to say that all commits are time stamped, and time stamps are applied once the system has verified the actual time. Or, to quote the Spanner research paper, "The API directly exposes clock uncertainty... If the uncertainty is large, Spanner slows down to wait out that uncertainty." Well, slowing down in this case means latencies of maybe 10 milliseconds or so.
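The "wait out the uncertainty" idea can be sketched in a few lines. The code below is a simulation under stated assumptions, not the real TrueTime API: `SimulatedTrueTime` fakes the clock uncertainty with a fixed `epsilon_s`, and `commit_with_wait` is a hypothetical helper. Only the shape of the interface (a `now()` that returns an earliest/latest interval and an `after()` check) follows the description in the Spanner research paper.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class TTInterval:
    earliest: float  # the true time is no earlier than this
    latest: float    # the true time is no later than this


class SimulatedTrueTime:
    """Hypothetical stand-in: a local clock plus a fixed uncertainty bound."""

    def __init__(self, epsilon_s, clock=time.monotonic):
        self.epsilon_s = epsilon_s
        self._clock = clock

    def now(self):
        t = self._clock()
        return TTInterval(t - self.epsilon_s, t + self.epsilon_s)

    def after(self, timestamp):
        # True only once `timestamp` has definitely passed.
        return self.now().earliest > timestamp


def commit_with_wait(tt, sleep=time.sleep):
    """Pick a commit timestamp, then wait until it is safely in the past."""
    commit_ts = tt.now().latest
    # Commit wait: do not report the commit until no clock anywhere could
    # still read a time earlier than commit_ts.
    while not tt.after(commit_ts):
        sleep(max(tt.epsilon_s / 10, 1e-4))
    return commit_ts
```

In this model the wait is roughly twice the uncertainty bound, so `SimulatedTrueTime(0.005)` waits on the order of 10 milliseconds. The tighter the clocks, the shorter the pause before a commit can be acknowledged.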

So, who needs all this scalability?

The push for distributed transaction databases has been driven by the internet -- where interaction is global and the expectation of customers is for continuous uptime. The classic examples include online commerce or gaming, where customers will not tolerate delays because the database needs to update their record. Google is promoting Cloud Spanner for use cases such as database consolidation or for NoSQL applications that need more robust transactional consistency.

The obvious niche is globally distributed applications that require a single consistent database. Global trading systems come to mind; today, these systems are typically divided into multiple regional instances with wide area, near real-time replication. There is also a potential play for database consolidation there.

But keep in mind that there is plenty of inertia from enterprises when it comes to switching transaction systems -- and for very good reasons. These systems run the core of the business, which is the last thing you want to disrupt. Unlike analytics or operational use cases, transaction systems typically don't create new revenue opportunities. Transaction processing is highly complex, with household names like Oracle, SQL Server, or DB2 having decades of development behind their robust engines. Challengers to the old order will be considered guilty until proven innocent. For instance, under the covers, Spanner's architecture differs a bit from SQL databases. The onus will be on Google to prove that Spanner has the full SQL compatibility and support that enterprises expect.

As the challenger, Google will compete on price. But established providers like Oracle won't shrink from pricing wars. The real challenge from Google won't come overnight. But Google is well positioned to play the long game, with the architecture of Spanner being sufficiently distinctive that it could redefine globalized transaction processing over the long haul.
