Google: Here's how we're going to crack down on terrorist propaganda

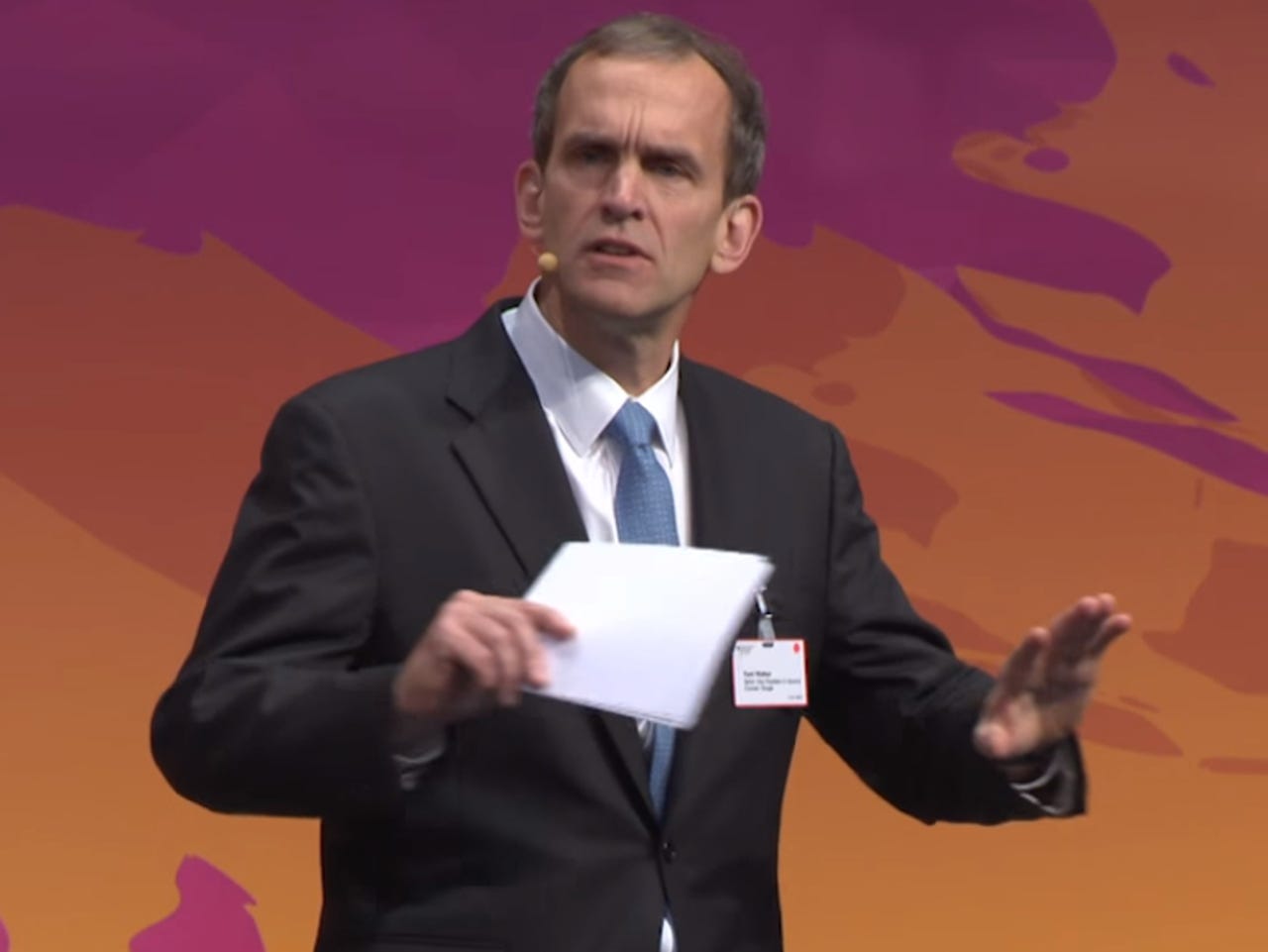

Google general counsel Kent Walker: "The uncomfortable truth is that we, as an industry, must acknowledge that more needs to be done."

Google has vowed to do more to prevent groups from using YouTube to spread terrorist propaganda and entice recruits.

In an op-ed in the Financial Times on Sunday, Google general counsel Kent Walker admitted that the internet industry should be doing more to combat terrorist propaganda online.

"Terrorism is an attack on open societies, and addressing the threat posed by violence and hate is a critical challenge for us all. Google and YouTube are committed to being part of the solution. We are working with government, law enforcement and civil society groups to tackle the problem of violent extremism online. There should be no place for terrorist content on our services," he said.

"While we and others have worked for years to identify and remove content that violates our policies, the uncomfortable truth is that we, as an industry, must acknowledge that more needs to be done."

The op-ed follows UK prime minister Theresa May's recent call for an end to "safe spaces" provided by internet-based services in the wake of this month's attack in London that left seven dead and dozens of others injured. May has also touted fines for tech firms that don't remove terrorist content. Facebook last week laid out its strategy to tackle this type of content.

The UK Home Affairs Committee last year asked representatives from Google, Facebook, and Twitter why they weren't using technology to combat terrorist propaganda when, for example, Facebook was using it to censor nudity.

Walker said Google will dedicate more technology resources to the problem and expand YouTube's Trusted Flagger program, which relies on expert human reviewers to flag content that violates Google's policies.

"We will now devote more engineering resources to apply our most advanced machine-learning research to train new 'content classifiers' to help us more quickly identify and remove extremist and terrorism-related content," he said.

Google is already using image-matching technology to block reuploads of known terrorist content, he noted.

Google will also add 50 new expert NGOs to the 63 organizations that already participate in its Trusted Flagger program. Google's "operational grants" will support their work. Google argues that these Trusted Flagger reports are accurate 90 percent of the time.

"This allows us to benefit from the expertise of specialized organizations working on issues like hate speech, self-harm, and terrorism. We will also expand our work with counter-extremist groups to help identify content that may be being used to radicalize and recruit extremists," Walker explained.

Videos that don't clearly violate Google's policies, such as ones "that contain inflammatory religious or supremacist content", will soon be placed behind a full-page interstitial warning. The content won't be monetized or available for commenting or up-voting by YouTube users.

A fourth approach is to use targeted advertising aimed at counter-radicalization. Alphabet's Jigsaw group has previously run experiments on YouTube with what it calls the Redirect Method.

The pilot program worked with former violent extremists, researchers and online ad specialists to create counter-radicalization ads and keywords to target potential ISIS recruits and lead them to existing online content that undermined ISIS propaganda. The ads were clicked on by 320,000 unique users over eight weeks.

"We are working with Jigsaw to implement the Redirect Method more broadly across Europe," Walker said.

Google will also work with Facebook, Microsoft, and Twitter to "establish an international forum to share and develop technology and support smaller companies and accelerate our joint efforts to tackle terrorism online".
