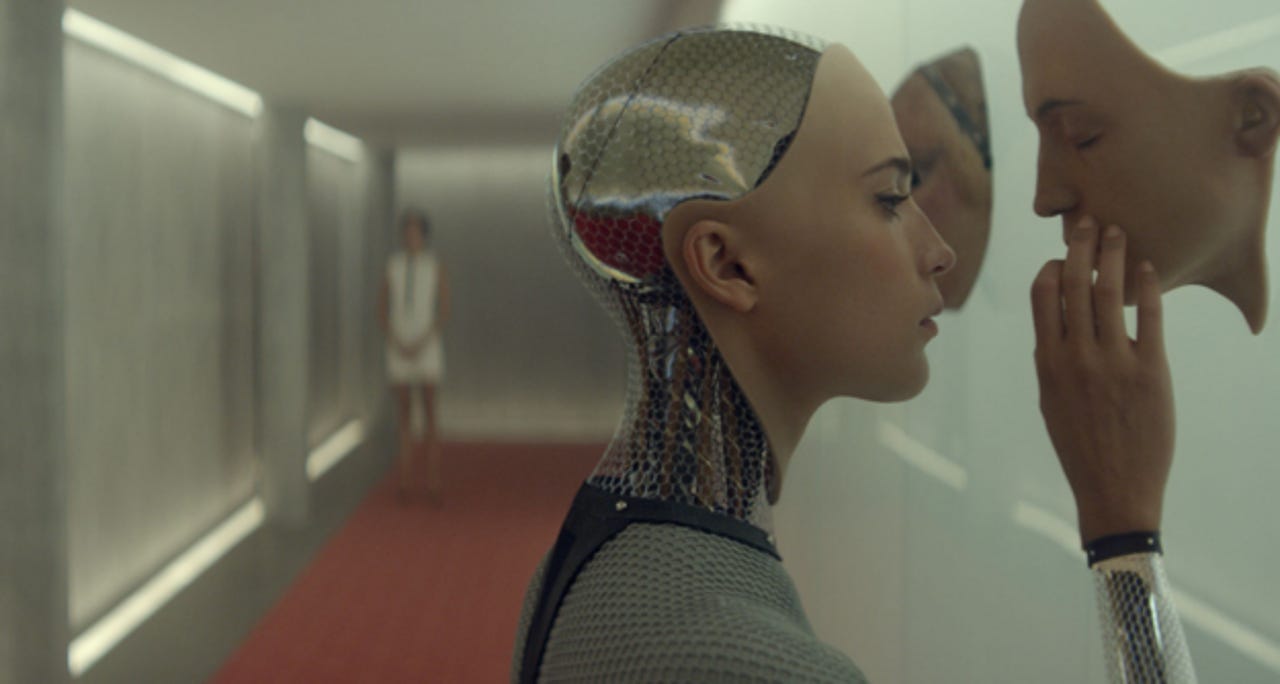

Hollywood movies vs. the real future of AI

Hollywood has had a long love affair with the future.

The film industry's affinity for prognostication dates back almost 100 years to the 1927 sci-fi classic Metropolis, which gave moviegoers their first taste of the terrifying technology that may await mankind.

Like many movies that would follow, Metropolis gave a dystopian depiction of the distant future, raising the question of whether advances in technology could evolve beyond our control.

For the most part that question has remained very much alive, especially as it relates to the evolution of artificial intelligence (AI).

But AI is a supremely complex technology to understand, let alone create, and oftentimes Hollywood blockbusters stretch the technology's limitations to fit some desired scenario. In other words, the AI popularized and propagated by Hollywood seldom reflects the direction the technology is actually headed.

"AI is nowhere near able to take over the world in the next few years," said Charlie Ortiz, senior principal manager of the Artificial Intelligence and Reasoning Group within Nuance's Natural Language and AI Laboratory. "And given the distance to that point, there are lots of other futures that could evolve. It could very well evolve into something that is more helpful and collaborative and could teach us if necessary."

So where does AI development stand right now? Overwhelmingly, the AI of today is focused on personal assistant experiences powered by voice and touch input, and is in no way self-aware.

"We are not close to creating anything remotely humanlike," said Tim Tuttle, CEO and founder of AI firm Expect Labs. "What we are close to is designing systems that are good at very specific tasks."

Systems such as Apple's Siri, Microsoft's Cortana, and Nuance's Dragon Mobile Assistant present a more accurate portrait of the AI that is available for mass consumption. More complex systems like IBM's Watson are pushing AI further with a focus on deep learning and cognitive computing.

And while AI is expected to progress rapidly over the next couple of years, the progression will remain controlled and in line with the technology's original purpose -- to assist humans in their interaction with technology.

According to Ortiz, AI products will become more conversational and dialogue-based in the near future, allowing humans to have extended conversations with AI systems as they would with another person. There will be a heightened level of self-awareness, but in a way that's directed more toward a system's relationship with its human counterpart.

"That is important because when systems can't help you or are confused with what you are saying, the system needs to have a sub-dialogue with you about that problem in order to give more informative responses," explained Ortiz. "We want the systems to know more about your personal preferences based on how you have interacted with them before. They don't need to keep asking questions they have already asked."

On the backend side of AI development, Ortiz said the research community is working rigorously to build bigger knowledge systems for use within AI, which he explained are necessary for AI systems to interact with humans in more than one domain. While IBM's Watson is leading the charge in this area, the industry as a whole has not caught up.

"Trying to get more general intelligence into systems has been a major stumbling block," Ortiz said.

But as stumbling blocks turn into building blocks, AI technology will continue to evolve and eventually seep into every computing system humans interact with.

"In the next 10 to 15 years you will be able to walk up to a device and speak to it to make it accomplish a task that you want," said Tuttle. "That will change the way we interact with computing devices. It's going to be one of the most exciting transformations."

With all that said, there's another aspect worth keeping in mind. At this point, researchers have no idea how AI could evolve in an unpredictable way to the point of hyper-awareness. But that doesn't mean the possibility of that happening is entirely ruled out.

As Tuttle explained, that line of thinking is driven by two very simple observations. First, the rate of change in the AI space is accelerating and the computing systems we use every day are getting smarter more quickly than ever before.

The second is all about human nature. For instance, when humans interact with something that is less intelligent, they often get bored and frustrated. Based on that, if AI is both similar to and smarter than humans, then the same could happen to AI systems as well.

"Both of those assumptions have so much variability and conjecture that any debate around this topic is more philosophical than scientific," Tuttle said.

The view inside the AI community, Tuttle continued, is that the concerns and fears being raised within pop culture are doing more harm than good.

"The technology we have today compared to what could be is like making a stick figure drawing of Michelangelo's David," Tuttle said. "So preventing an AI apocalypse is like trying to solve the problem of overpopulation on Mars -- it theoretically could happen in the future, but scientists now have no idea where to start."