Humans actually think like computers, a Johns Hopkins study says

Looks like a kitchen floor to me.

Artificial Intelligence

Do you realize what you've become?

Yes, you think you're a beautifully human, sentient being. You think you're a unique example of the species, fascinating in every aspect.

Or have all those gadgets you've been using turned you into something of a predictable machine?

I only ask because of a new study from Johns Hopkins University, in which researchers tested whether humans actually see things in a very similar way to computers.

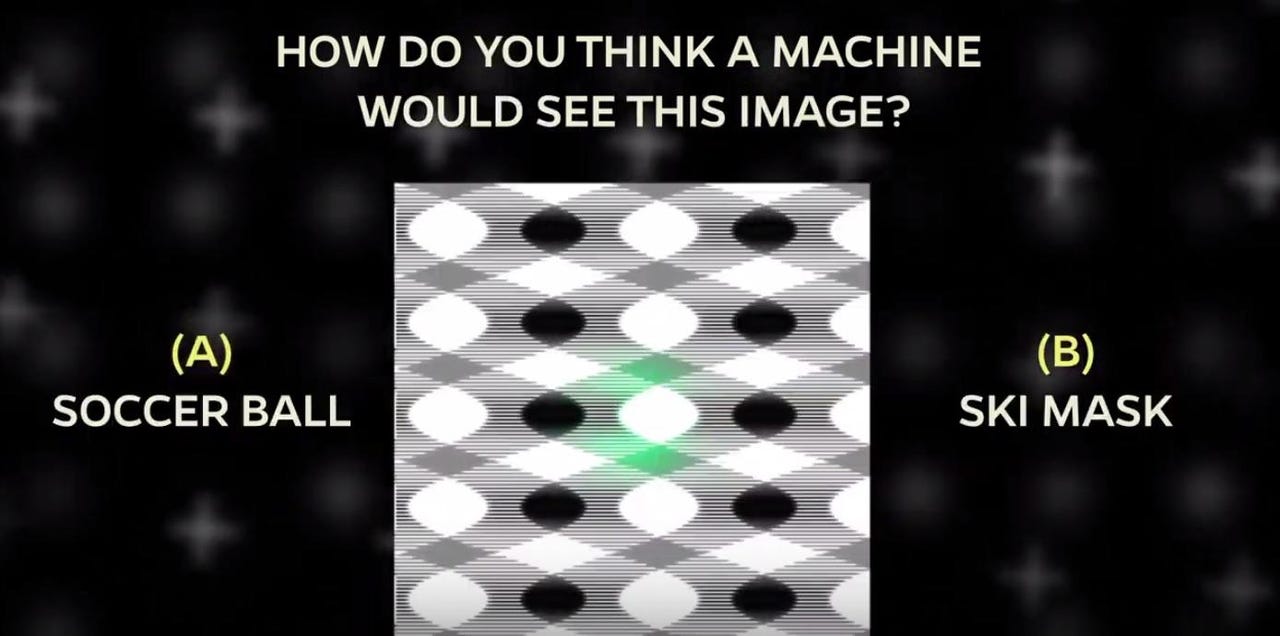

Chaz Firestone, an assistant professor in Johns Hopkins' Department of Psychological and Brain Sciences, and Zhenglong Zhou, a Johns Hopkins senior majoring in cognitive science, decided to show humans the sorts of images that still fool computers' AI.

You may not know this, but computers aren't really all that clever. They have a limited vocabulary, perhaps a mere 1,000 words. The researchers therefore gave humans the choice of two descriptive options: one was the computer's answer and the other a random answer.

In a thoroughly depressing conclusion for humanity, the humans made the same choices as the computer 75 percent of the time.

Perhaps elated at these results, the researchers then gave the humans a choice between the computer's answer and the computer's next-best guess.

The humans went with the computer's answer 91 percent of the time.

Oh, humans. You're almost past your sell-by date.

There's more evidence, you see. As a final attempt to hammer an additional nail into humanity's self-regarding coffin, the researchers gave humans a choice of 48 answers to various trippy images.

Even when they were shown television static, a greater-than-random number of humans agreed with the computer's description.

Please now see for yourself. See how you do with this video test.

Did you see a soccer ball there? I didn't. How about the tile roof? I merely saw drapes.

As for the freight car vs. school bus, I had no idea what it was. Both choices seem ludicrous.

Yet the researchers promise that they tested such images on 1,800 people.

Firestone told me that one important aspect of our future lives is whether we'll be able to anticipate when a machine will get things wrong.

"Whether we like it or not, AI technologies are going to be entering our lives," he said. "You have to learn a bunch of things about how it works -- well and poorly."

He explained that we're already doing it by knowing, for example, not to use slang when we're talking to Siri.

"There are very roughly two ways to make machines," he told me. "One, let's make machines people-smart. Two, let's forget everything we know about human intelligence. Let's get the machine to work things out as best as it can. The second type are the sort of machines we used in this research."

The next step, then, is to see whether humans can become machine psychologists -- experts in knowing how a machine will think and when it will fail. Another question: How long will it take humans to become experts at machine thinking?

I asked Firestone whether these results made him feel optimistic or pessimistic about humanity.

He lurched toward the optimistic. Yes, but I only gave him two choices, didn't I?

I wonder what a machine would think.