Making the public cloud your own

In my last post, I talked about SaaS applications that are operated by enterprises for employees, partners or customers. Some of these instances operate in a mode that people have been describing as 'private SaaS', which means that the application is only visible to users who have logged on to a private network. While these applications may behave like SaaS, they lack an important dimension by not being exposed to the challenges and pressures of the public Internet. In other words, they are not built to perform at what I call cloud scale.

In many cases, though, enterprises are fooling themselves if they believe that these 'private SaaS' instances are safer than third-party public SaaS alternatives. If they're being delivered to laptop users logging in from public Wi-Fi hotspots and hotel LANs, or if there are mobile clients being used on smartphones, they're still exposed to numerous security threats. Customer-facing applications — especially if they're part of a customer acquisition strategy — are even more exposed, because they have to be open to the Internet to fulfil their mission.

The riposte I often hear in such circumstances is that, well, at least the infrastructure is behind our own firewalls, so we know the back-end is safe. But this is a somewhat naive point of view, as I recently discussed in a post [disclosure: sponsored by IBM] on the Future of Enterprise Computing site, 'In the Cloud, Governance Trumps Ownership'. The truth is that our faith in the potency of direct ownership often masks a lack of proper processes:

"The real reason we like ownership is that, whenever we need to, we know we can just walk in and make a hands-on assessment of the situation on the ground. If we're honest with ourselves, that sense of direct, actionable accountability is probably covering a multitude of sins. We know there are times when our own people or our contractors, whether through lack of training, process flaws or sheer carelessness, get things wrong. We probably tolerate errors within our own organization that we would never accept from a third-party provider because we know we have the power to put things right to our own satisfaction if we ever need to."

I went on to argue that the quality of real-time instrumentation that's available today means that we no longer have to rely on walk-in inspections to enforce proper process. Instead, we can automate governance — and if we do that, we can have just as much confidence in a provider's infrastructure as in our own; perhaps more, because the provider can offer us an all-new, state-of-the-art implementation that would be beyond our own purchasing power.

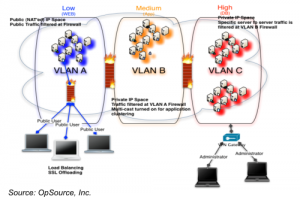

Yesterday, cloud infrastructure provider OpSource announced a new offering that perfectly illustrates my point [disclosure: OpSource is a past client]. The attention-grabbing part of the announcement is free bundling of a virtual private network that secures the customer's cloud instances. By doing away with the 20c per hour charge for the VLAN (which provides firewalls, load balancing, VPN and other access controls), OpSource effectively cuts the price of a single server by almost two thirds. But of course the point is that most customers who are interested in the platform will end up with at least half a dozen servers, often many more, so by 'loss-leading' on the first server OpSource is onboarding customers who will become more profitable as they scale up to production levels.
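To put rough numbers on that loss-leader argument, here is a back-of-envelope sketch in Python. Only the 20c hourly VLAN fee comes from the announcement. The per-server hourly rate below is a hypothetical figure, chosen purely so that the saving on a single server comes out at "almost two thirds"; it should not be read as OpSource's actual pricing.

```python
# Back-of-envelope illustration only.
VLAN_FEE = 0.20     # per hour: the network charge being dropped (from the post)
SERVER_RATE = 0.11  # per hour per server: HYPOTHETICAL, not a published price


def hourly_cost(servers: int, vlan_fee: float) -> float:
    """Hourly bill for one network plus `servers` server instances."""
    return vlan_fee + servers * SERVER_RATE


def saving_fraction(servers: int) -> float:
    """Share of the old hourly bill removed by making the VLAN free."""
    before = hourly_cost(servers, VLAN_FEE)
    after = hourly_cost(servers, 0.0)
    return (before - after) / before


if __name__ == "__main__":
    for n in (1, 6, 12):
        print(f"{n:>2} servers: saving {saving_fraction(n):.0%}")
```

Under that assumed rate, the waived fee is roughly 65% of the bill for one server but only about 23% for six and 13% for twelve, which is the shape of the argument: the giveaway shrinks as a share of spend while the customer's total bill grows.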

What caught my interest was the infrastructure underlying this offering. It's an all-Cisco hardware platform, part of the vendor's Data Center Business Advantage architecture. So all the firewall, VPN and load balancing surrounding the customer's server instances, as well as the instances themselves, are running on the same, shared, physical internal bus. The principal advantage of this is that it removes the latency inherent in other cloud platforms where servers are connected at LAN or WAN speeds. "We offer sub-millisecond latency speeds," OpSource's CMO Keao Caindec told me in a briefing last week.

Whether tying a cloud infrastructure to a specific hardware architecture is truly in the spirit of cloud computing is a discussion I'd like to defer to a separate blog post; there are a number of angles to consider, and it's not as clear-cut as it might seem. One argument in favor is the other advantage that OpSource exploits, which is the ability to offer customized configuration and governance of a customer's cloud instances within the shared infrastructure.

"In this case the customization of the environment from a security viewpoint is included in the cost and it scales economically," Caindec explained. "The customers gain the benefits of scale without having to pay additional fees for customization. That's right at the heart of why we're doing this. Right now, to customize the public cloud or to secure it costs you extra money."

"That is almost impossible to do in a normal public cloud," Caindec told me. "But because we've deployed actual networking gear that creates that same networking environment, it is exactly the same as what you would do in your own data center.

"There really is no difference between a public and a private cloud," he continued. "The level of security has more to do with the architecture than whether it's in a public cloud provider or a private customer data center."

This ability to privately "own" a slice of the public cloud is especially attractive to SaaS ISVs, who form an important segment of OpSource's customer base. After all, a SaaS ISV is merely an enterprise whose business model is based on making customer-facing applications available on the public Web. Hosting their application on a virtual private LAN within a public cloud provider's infrastructure gives them the right combination of internal control and outward-facing cloud scale, provisioned with cloud economics. Whether they then go on to architect the internals of their application to really exploit the cloud environment is another matter, but having a highly customizable, high-performance infrastructure that's tuned for cloud scale seems like a great starting point.