OpenAI is training GPT-4's successor. Here are 3 big upgrades to expect from GPT-5

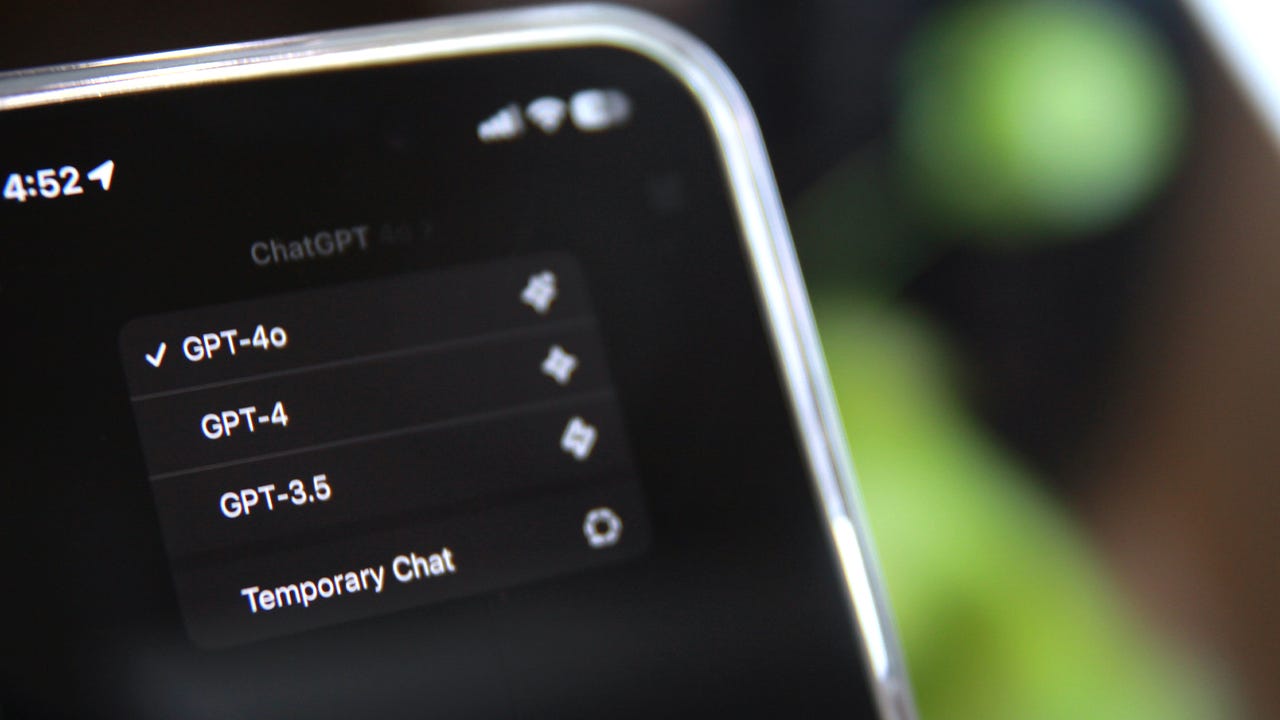

Even though OpenAI's most recently launched model, GPT-4o, significantly raised the large language model (LLM) ante, the startup is already working on its next flagship model, GPT-5.

Leading up to the spring event that featured GPT-4o's announcement, many hoped the company would launch the highly anticipated GPT-5. To curtail the speculation, CEO Sam Altman posted on X, "not gpt-5, not a search engine."

not gpt-5, not a search engine, but we’ve been hard at work on some new stuff we think people will love! feels like magic to me.

monday 10am PT. https://t.co/nqftf6lRL1

-- Sam Altman (@sama), May 10, 2024

Now, just two weeks later, in a blog post unveiling a new Safety and Security Committee formed by OpenAI's board to make recommendations on safety and security decisions, the startup confirmed that it is training its next flagship model, most likely referring to GPT-4's successor, GPT-5.

"OpenAI has recently begun training its next frontier model, and we anticipate the resulting systems to bring us to the next level of capabilities on our path to AGI [artificial general intelligence]," the company said in a blog post.

Although it may be months if not longer before GPT-5 is available for customers -- LLMs can take a long time to be trained -- here are some expectations of what OpenAI's next-gen model will be able to do, ranked from least exciting to most exciting.

Better accuracy

Following past trends, we can expect GPT-5 to become more accurate in its responses, as it will be trained on more data. Generative AI models depend on training data to fuel the answers they provide. Therefore, the more data a model is trained on, the better the model's ability to generate coherent content, leading to better performance.

With each model released thus far, scale has increased. For example, reports have suggested that GPT-3.5 has 175 billion parameters, while GPT-4 has about 1 trillion. Parameters measure a model's size rather than the amount of data it was trained on, but larger models have generally been trained on more data. We will likely see an even bigger jump for GPT-5.

Increased multimodality

When predicting GPT-5's capabilities, we can look at the differences between each major flagship model since GPT-3.5, including GPT-4 and GPT-4o. With each jump, the model became more intelligent and boasted improvements in price, speed, context length, and modality.

GPT-3.5 can only accept and produce text. With GPT-4 Turbo, users can provide text and image inputs to get text outputs. With GPT-4o, users can input any combination of text, audio, image, and video and receive any combination of text, audio, and image outputs.

Following this trend, the next step for GPT-5 could be the ability to output video. In February, OpenAI unveiled its text-to-video model Sora, which may be incorporated into GPT-5 to output video.

The ability to act autonomously (preview of AGI)

There is no denying that chatbots are impressive AI tools capable of helping people with many tasks, including generating code, Excel formulas, essays, resumes, apps, charts, tables, and more. However, there is a growing desire for AI that knows what you want done and can do it with minimal instruction -- a tenet of artificial general intelligence, or AGI.

GPT-5 is unlikely to amount to full AGI, but it could be capable of using autonomous agents to accomplish an end goal by reasoning about what needs to be done, planning how to do it, and carrying out the task.

In an ideal scenario, you would be able to ask GPT-5 to, for example, "order a burger from McDonald's for me." The AI model could then use agents to complete a series of tasks that include opening the McDonald's website and inputting your order, address, and payment method. All you'd have to worry about is eating the burger.

Rabbit is trying to accomplish something similar by creating a gadget that can use agents to create a frictionless experience with tasks in the real world, such as booking an Uber or ordering food. Rabbit's R1 has sold out multiple times, despite being unable to carry out the more advanced tasks mentioned above.

As the next frontier of AI, AGI could completely upgrade the type of assistance we get from AI and change how we think of assistants altogether. Instead of merely telling us, say, what the weather is, AI assistants could accomplish tasks for us from start to finish. Even though GPT-5 may not be there yet, it will give us a glimpse, and if you ask me, that's something to look forward to.