Please, Facebook, give these chatbots a subtext!

Imagine yourself in a restaurant, waiting for a table. A stranger approaches and asks, "Do you come here often?" Do you

- Reply, "Yes, and how come I've never seen your type here before?" because you agree to pick up on the hint and flirt?

- Glower and say nothing because you want to be left alone?

- Say, "Excuse me," because the maître d' is signaling your table is ready and you want to get out of this exchange and get to your table?

People do and say things because of a subtext, what they're really, ultimately after. The subtext underlies all human interaction.

Machines, at least in the form of current machine learning, lack a subtext. Or, rather, their subtext is as dull as dishwater.

That much is clear from the latest work by Facebook's AI Research scientists. They trained a neural network model to make utterances, select actions, and choose ways to emote, based on the structure of a text-based role-playing game. The results suggest interactions with chatbots and other artificial agents won't be compelling anytime soon.

Also: Why chatbots still leave us cold

The underlying problem, as seen in another recent work by the team, last year's Conversational Intelligence Challenge, is that these bots don't have much of a subtext. What subtext they do have is merely forming context-appropriate outputs. As such, there's no driving force, no real reason for speaking or acting, and the results aren't pretty.

The research, "Learning to Speak and Act in a Fantasy Text Adventure Game," is posted on the arXiv pre-print server. It is authored by several of the Facebook researchers who helped organize last year's Challenge, including Jack Urbanek, Angela Fan, Siddharth Karamcheti, Saachi Jain, Emily Dinan, Tim Rocktächel, Douwe Kiela, Arthur Szlam, Samuel Humeau, and Jason Weston.

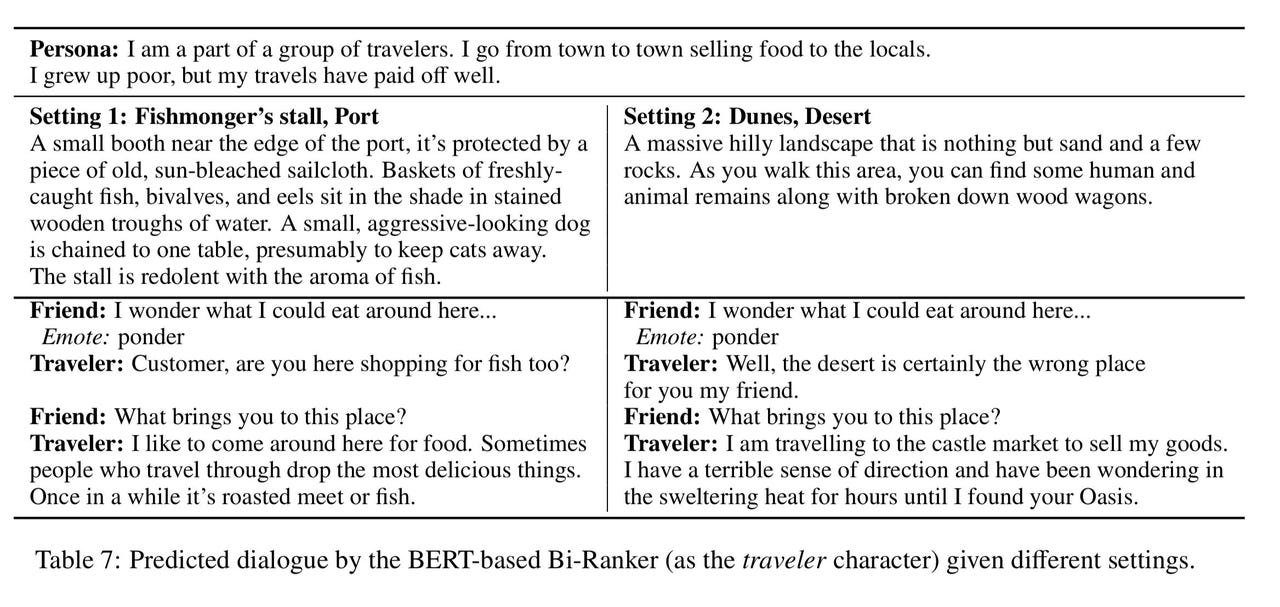

Snippets of text generated from Facebook's "LIGHT" artificial agent system. The machine plays the role of "Traveler" in response to the human-generated "Friend" exclamations.

The authors offer a new setting for language tasks, called "LIGHT," which stands for "Learning in Interactive Games with Humans and Text." LIGHT is constructed via a crowd-sourcing approach, getting people to invent descriptions of made-up locations, including countryside, bazaar, desert, and castle, totaling 663 in all. The human volunteers then populated those settings with characters, from humans to animals to orcs, and objects that could be found in them, including weapons and clothing and food and drink. They also had the humans create almost 11,000 example dialogues as they interacted in pairs in the given environment.

The challenge, then, was to train the machine to pick out things to do, say, and "emote" by learning from the human examples. As the authors put it, to "learn and evaluate agents that can act and speak within" the created environments.

For that task, the authors trained four different kinds of machine learning models. Each one is attempting to learn various "embeddings," representations of the places and things and utterances and actions and emotions that are appropriate in combination.

Also: Fear not deep fakes: OpenAI's machine writes as senselessly as a chatbot speaks

The first model is a "baseline" model based on StarSpace, a 2017 model, also crafted by Facebook, that can perform a wide variety of tasks such as applying labels to things and conducting information retrieval.

The second model is an adaptation by Dinan and colleagues of the "transformer" neural network developed in 2017 at Google, whose use has exploded in the last two years, especially for language tasks. The third is "BERT," an adaptation by Google of the transformer that makes associations between elements in a "bi-directional" fashion (think left to right, in the case of strings of words.)

The fourth neural network approach tried is known as a "generative" network, using the transformer not only to pay attention to information but to output utterances and actions.

The test for all of this is how the different approaches perform in producing dialogue and actions and emoting, once given a human prompt. The short answer is that the transformer and the BERT models did better than baseline results, while the generative approach didn't do so well.

Most important, "Human performance is still above all these models, leaving space for future improvements in these tasks," the authors write.

Indeed, even though only a few examples are provided of the machine's output, it seems the same overall problem crops up again as appeared in last year's Challenge.

In that chatbot competition, the over-arching goal, for human and machine, could be described as "make friends." The subtext was to show interest in one's interlocutor, to learn about them, and to get the other side to know a little bit about oneself. On that task, the neural networks in Challenge failed hard. Chatbots repeatedly spewed out streams of information that were repetitive and that seemed poorly attuned to the cues and clues coming from the human interlocutor.

Must read

- 'AI is very, very stupid,' says Google's AI leader (CNET)

- How to get all of Google Assistant's new voices right now (CNET)

- Unified Google AI division a clear signal of AI's future (TechRepublic)

- Top 5: Things to know about AI (TechRepublic)

Similarly, in the snippets of computer-generated dialogue shown in the LIGHT system, the machine generates utterances and actions appropriate to the setting and to the utterance of the human interlocutor, but it is strictly a prediction of linguistic structure, absent of much purpose. When the authors performed an ablation, meaning, tried removing different pieces of information, such as actions and emotions, they found the most significant thing the machine could be supplied was the history of dialogue for a given scene. Their work remains essentially a task of word prediction, in other words.

Artificial agents of this sort may have an expanded vocabulary, but it's unlikely they have any greater sense of why they're interacting. They still lack a subtext like their human counterparts.

All is not lost. The Facebook authors have shown how they can take word and sentence prediction models and experiment with their text abilities by combining more factors, such as a sense of place and a sense of action.

Until, however, their bots have a sense of why they're interacting, it's quite likely artificial-human interactions will remain a fairly dull affair.

Best of MWC 2019: Cool tech you can buy or pre-order this year

Previous and related coverage:

What is AI? Everything you need to know

An executive guide to artificial intelligence, from machine learning and general AI to neural networks.

What is deep learning? Everything you need to know

The lowdown on deep learning: from how it relates to the wider field of machine learning through to how to get started with it.

What is machine learning? Everything you need to know

This guide explains what machine learning is, how it is related to artificial intelligence, how it works and why it matters.

What is cloud computing? Everything you need to know about

An introduction to cloud computing right from the basics up to IaaS and PaaS, hybrid, public, and private cloud.