Review: SQL Server 2017 adds Python, graph processing and runs on Linux

Today, at its Ignite conference in Orlando, Microsoft is announcing the general availability of a new version of its flagship operational database, SQL Server 2017.

SQL Server is a product I have worked with for nearly my entire career. I started with version 4.2 for Windows in 1993 and have worked with and carefully reviewed each subsequent release, including this one. The thing I've taken away from all this work and evaluation is that while SQL Server has constantly improved, while it has modernized and added numerous features and bundled technologies, in many ways, it still feels like the same database I started working with almost 25 years ago.

At times, I think developers and database administrators need that familiarity. At other times they need the innovation and confidence that the product team has more new stuff in the pipeline. Customers need SQL Server to stay the same and yet they need it to change and improve. That really is SQL Server's unique challenge: it must be true to its legacy, and yet avoid stasis, staying competitive with the seemingly endless array of new data technologies, both commercial and open source.

Summary of SQL Server 2017 new features

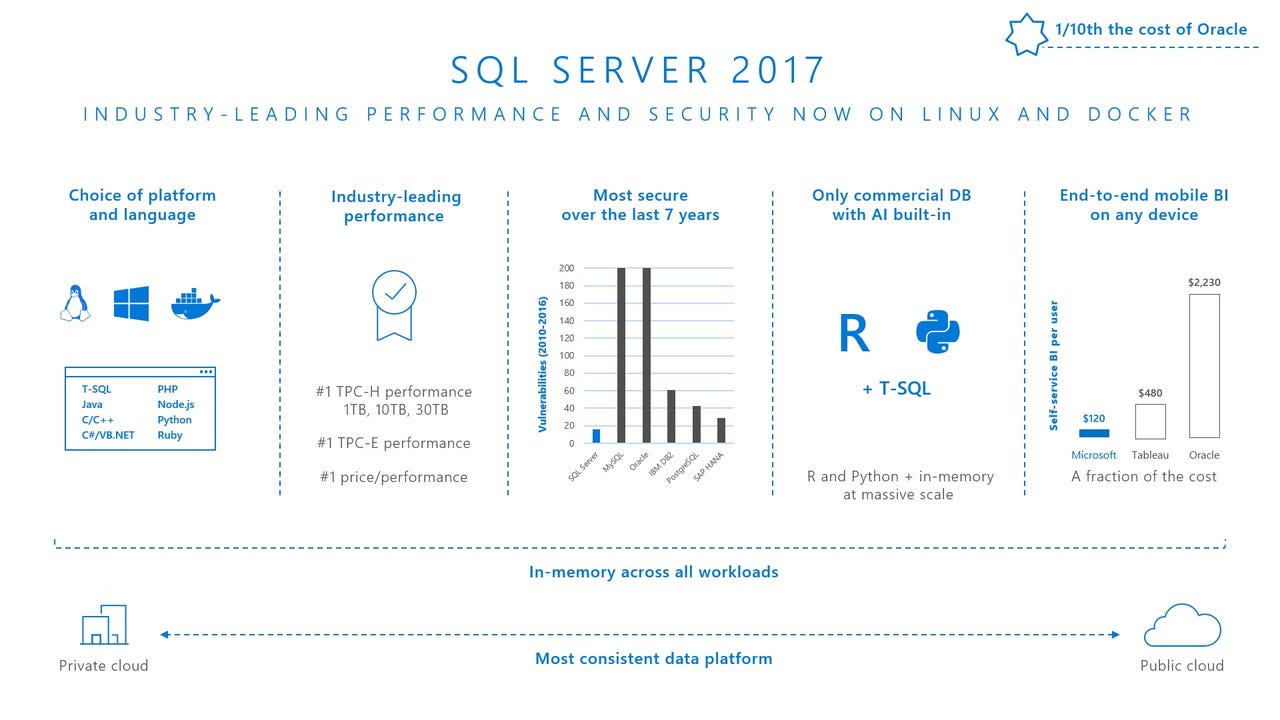

This new version of SQL Server continues to meet these twin demands. It adds new features from the worlds of data science and NoSQL. It offers cross-platform capabilities and Docker container compatibility. But it also reinforces its investment in core database engine performance, ease of index maintenance, high availability and data warehouse performance. That's a difficult balance and one that other database vendors don't have to meet. While this may be Microsoft's cross to bear, the company does pretty well with it, turning a formidable challenge into a positive market differentiator.

Neo-retro: SQL Server for Linux

In a move that may seem ironic to the market and is yet quite consistent with SQL Server's legacy, the release of SQL Server 2017 heralds SQL Server's return to the *NIX platform, with a new Linux version of the product. And despite this development being legacy-consistent (early versions of SQL Server, co-developed with Sybase, also ran on UNIX), it is still a very big deal.

Also read: Microsoft's SQL Server 2017 for Linux and Windows moves ahead with first Release Candidate

SQL Server has grown quite significantly in popularity over the years. But the popularity of server-side Linux has grown too, and Macs have grown in popularity with developers. This has meant some customers have run a number of Windows servers in their data center just for SQL Server. It's also meant that Mac and Linux development machines have needed to connect to SQL Server over the network, and haven't been able to run it locally unless on a Windows virtual machine. Both of these issues have been blockers to adoption.

Also read: Why Microsoft needs SQL Server on Linux

With Linux compatibility, Microsoft begins the work of eliminating these adoption blockers. SQL Server can now run on Windows servers, Linux-based servers, or some combination of the two. The Linux version can also run in Docker containers (which can also be Windows- or Linux-based) making it almost trivial to run on a Mac. In fact, if a developer's machine already has Docker installed, running and has allocated at least 4GB of memory to it, then two commands, entered in a Mac or Linux machine's Terminal window, will download, install and start the product running. This same technique works on Windows machines, despite the fact the Windows version can be installed on the metal.

Windows-based SQL Server tools can connect to Windows or Linux instances of SQL Server, so Windows developers needn't change their toolsets. Meanwhile, these tools aren't required: Microsoft provides Linux command line tools for SQL Server, and the most important ones are installed into the Docker image by default. For people who want something more graphical, but still lightweight, Microsoft offers the mssql extension for its Visual Studio Code development tool, which runs on both Linux and Mac. It also runs Windows, allowing even Microsoft platform developers the option to use light-weight tools.

Moving from tools to companion technologies, many of them, including SQL Server Analysis Services and SQL Server Reporting Services, are not available in Linux versions, at least not yet. But while packages created for SQL Server Integration Services (SSIS), Microsoft's stalwart ETL (extract, transform and load) tool, must be authored on Windows machines, the service itself can run on Linux and SSIS packages can be run at the Linux command line, using the command dtexec.

Unifying architecture

While not all SQL Server companion technologies are available on Linux, all features within the SQL Server relational database engine are available there. Even SQL Server's Always On high availability feature works - and Windows and Linux instances can be mixed together in the same Availability Group. Performance is also comparable on the two platforms.

The reason for feature parity between the Windows and Linux versions of the product is the SQL Platform Abstraction Layer (PAL), which is implemented on each OS. As the full name might suggest, the PALs abstract away the differences between the two operating systems, providing a common interface on which a single codebase can run.

With this architecture, any feature created for the SQL Server database engine on one platform is available on the other. And the PALs are implemented very efficiently. Microsoft attained record setting non-clustered TPC-H and 2-socket TPC-E benchmark results with SQL Server 2017, and one of the TPC-H results was achieved with the Linux version of the product.

She blinded me with (data) science!

While SQL Server for Linux is the 2017 release's banner feature, there are two additional major enhancements, as well as a slew of smaller ones which, together, make SQL Server much more robust.

SQL Server Machine Learning Services

On the Data Science side, Microsoft has taken SQL Server R Services, introduced in the 2016 release, and added support for the Python programming language. The enhanced feature, now dubbed SQL Server Machine Learning Services (ML Services), allows code in either language to execute natively on the SQL Server itself. Another option is to write and execute the R/Python code on a workstation but have it delegate the heavy work to run on the SQL Server.

Also read: Microsoft Data Amp heralds Python in SQL Server 2017, and lots more

Also read: Microsoft's R Strategy

R and Python already had access SQL Server, of course, but only if running externally, and thus transferring data queried from the SQL Server to the machine running the code. Running the code natively on SQL Server avoids such data movement, allowing for the creation and training of predictive ML models on the server itself, over large volumes of data, analyzed in-place.

Keeping score

Those models can then be serialized and stored in SQL Server databases. Later, the models can be retrieved and de-serialized, and predictions can be run against them, using SQL Server data as the input. This process, called scoring, can be implemented in code written either in R or Python, via ad hoc scripts or stored procedures. And since stored procedure calls can run on a scheduled basis using a component called the SQL Server Agent, the entire scoring process can be server-based and thoroughly operationalized. That's a big deal, given that scoring has customarily been run on a more ad hoc basis, on data scientists' own machines.

The scoring story gets even better in SQL Server 2017. Beyond the addition of Python as a language that can used for the scoring process, T-SQL code itself can now perform "native scoring" as well, using the language's new PREDICT function. As long as the models being scored against have been trained and are stored in the database in the prescribed RevoScaleR or RevoScalePy format, T-SQL can be used to score against them, whether or not R or Python is even installed on the server.

This allows for a very important separation of concerns, whereby Data Scientists can build and train ML models in their own environment, then store them in the database, where any developer can write T-SQL queries to score against them. And if the SQL Server host has Graphical Processing Units (GPUs) on board, data scientists can see even bigger gains, beyond elimination of data movement, by training their models there.

Enterprise, ready or not

Another T-SQL/Data Science integration in SQL Server 2017 is the ability to add R packages for (modules that act as language extensions) via T-SQL's new CREATE EXTERNAL LIBRARY command, and stored in specific databases, rather than added through R's install.packages() function and made available globally. This gives database administrators greater and more granular control over resources and capabilities that are turned on or off.

This combination of features makes substantial progress toward bringing Data Science forward into mainstream enterprise computing. The ubiquity of SQL Server in enterprise technology environments brings operationalized Data Science onto a bigger stage. Now it's up to Microsoft to drive adoption of these features, rather than just making them available.

Graphic language

The other major addition to SQL Server 2017 is new support for graph processing.

So-called NoSQL databases, of which graph databases comprise one major category, have been a thorn in Microsoft's side for at least six years. And despite the fact that they can be a bit esoteric and pale in adoption levels compared to database products based on conventional relational technology (like SQL Server), they can be very useful for particular database scenarios.

Graph processing is all about nodes and edges

Instead of rows and columns, tables in graph databases contain "nodes" (sometimes called "vertices") and "edges." Nodes represent entities while edges represent relationships between them. With relationships codified at the entity level, rather than the table level, it becomes much easier to do things like get all the entities related to another one in particular.

That type of query is common when working with any domain where networks are involved, including social networks. Want to get all the comments for a given post? Easy peasy. Want to know all the friends two people have in common? Piece of cake.

SQL Server developers can simply specify the AS NODE or AS EDGE keywords in their CREATE TABLE commands when building tables that will store graph entities. When querying any such table, they can then use the MATCH keyword in their SELECT statements to answer the network-related questions, like the social media one we just discussed.

SQL Server can query across a heterogeneous mix of graph tables and conventional ones. It can also enlist columnstore indexes in accelerating queries that benefit from them.

In other news

Yes, Linux, Python and graph processing is a lot, but there's more. Here's a final roundup of what's new in the relational database engine.

SQL Server's vector processing-based batch execution mode is now available to the entire execution of R or Python code. Since much of the work that tends to be done in R and Python involves aggregation, batch mode - which processes rows of data several at a time, can be very helpful.

Two other new batch mode features, memory grant feedback and adaptive joins, will enhance SQL Server's performance and efficiency as well.

In the operational analytics department, the non-clustered variety of Columnstore indexes (NCCIs), introduced in SQL Server 2012, can now be built and rebuilt without taking the tables they index offline, making operational analytics more feasible to implement.

BLOBs (binary large objects, such as whole files, stored in a table) in Columnstore indexes can now be compressed, to save storage space, and the Columnstore index can process them in the compressed format, which also speeds backups.

SQL 2017's new resumable index operations feature allows index rebuilds to be resumed after a database failure or manual abort of the operation, rather than needing to be restarted from scratch, once the database is back online.

SQL Server's query processor can now automatically detect and remediate so-called plan regressions, which occur when it reformulates the best way to query a table but "forgets" certain optimizations it made to the prior plan that may still apply.

SQL Server's In-Memory OLTP facility (a.k.a. "Hekaton"), which processes data in memory and compiles T-SQL stored procedures down to native code, has shed many of its restrictions, allowing the feature to be used in many more scenarios.

Beyond relational

Although the bulk of the new features in SQL Server 2017 are delivered in the core relational database management system (RDBMS) engine, other components of the SQL Server suite of technologies are seeing some improvement as well.

Let's start with SQL Server Analysis Services (SSAS), whose Tabular Mode engine is the Enterprise version of the technology behind Excel's data model and Power Pivot; Power BI Desktop; the Power BI Cloud Service and the new Azure Analysis Services preview service. SSAS Tabular continues to see investment that brings its feature set into near-parity with SSAS' classic Multidimensional model.

First off, Tabular mode is now the default installation option for Analysis Services. A new 1400 compatibility level allows SSAS Tabular's tooling (part of the Visual Studio-based SQL Server Data Tools - SSDT) to provide the same data acquisition dialogs and much of the data source connectivity that's available in the Excel 2016 for Windows and Power BI Desktop. This includes an equivalent data preparation interface and use of the same underlying M programming language that's available in Power BI and Excel. The SSDT tooling for SSAS also now includes an editor for the Tabular engine's DAX (Data Analysis eXpressions) language.

Encoding hints speed up data refresh; a Hide Members property finally allows ragged hierarchies in dimensions; show details is now supported for drillthrough actions from clients like Excel; object-level security has been added; dynamic management views have been improved; and an IN operator and a CONTAINSROW function have been added to DAX.

SQL Server Reporting Services (SSRS) has added a comments feature, for collaborative review of reports, and a DAX editor has been added to the query designer, both in SSDT and the standalone Report Builder application. SSRS is also available in an enhanced incarnation, as Power BI Report Server. More details on that appear below.

SQL Server Integration Services has added a new scale-out feature and, as already discussed, can run on SQL Server for Linux. It also offers enhanced connectivity to Microsoft Dynamics AX Online and Microsoft Dynamics CRM Online.

On the business side

There's more to SQL Server than product features, of course; the business side is important too.

Microsoft is not introducing any price increases with this version of the product. In fact, for the Linux version, Microsoft is offering a promotional (limited time) Software Assurance (SA)-only subscription for the Standard and Enterprise editions of the product. This is a bit like getting a free license as long as you sign up for maintenance and, according to Microsoft, represents a 30% discount in all-in fees. When you combine this promotion with the fact that SQL Server Developer Edition is free (a policy Microsoft instituted in early last year), customers get open source-like economics with SQL Server.

And speaking of SA, SQL Server Enterprise Edition (EE) customers who have it will be entitled to a new on-premises analytics product: Power BI Report Server (PBIRS). PBIRS allows for Enterprise-wide on-premises delivery of Microsoft Power BI reports, previously available only through a cloud subscription (or, for those so determined, email attachment-based file sharing).

As it turns out, PBIRS is a full superset of SQL Server Reporting Services (SSRS), thus consolidating all of Microsoft's reporting and data visualization products (SSRS, SSRS Mobile Reports and Power BI) into one Enterprise reporting platform and Web-based portal. The only other way to acquire PBIRS is through a Power BI Premium subscription, which starts at $4,995/month for a single dedicated P1 node, with 8 virtual cores.

Just 16 months later, a big release

That enables the SQL Server team to shorten release cycles for the conventional on-premises editions (the "box product" in Microsoft parlance) and include features that have already been battle-tested. That's why we're getting SQL Server 2017 approximately 16 months after the release of SQL Server 2016, and we're getting major new functionality like Linux, Python, graph processing, and a slew of smaller improvements that keep previously released features well-tuned and relevant.

SQL Server releases used to come every 2-3 years, with some fairly "big bang" changes, and accompanying calls to action around training, readiness and upgrades. Now, with the SQL Server code base being used by Azure SQL Database as well, feature enhancements are deployed, and used in production, at shorter intervals and with less...well...hoopla.

For SQL Server, big bang releases become more like occasional roll-ups of slipstreamed cloud product features. Distinctions between SQL Server and dedicated NoSQL databases become less acute. Data Science, analytics and operational technologies are blending together and becoming more operationalized. BI on the desktop, server, SaaS cloud and PaaS cloud share the same core engine technology. Operating system/platform differences are literally getting abstracted away.

A new database world order

I'm a Microsoft Data Platform MVP, and in no way an unbiased observer. That said, my own earnest observation is that Microsoft's combination of Enterprise legacy, public cloud prowess, developer-friendliness and recent school-of-hard-knocks education puts it in a leading position within the industry to take on this new normal in the world of data, and technology overall. SQL Server 2017 is a significant artefact in that transition to leadership.

In the database world, velocity is increasing and the speed bumps are smoothing out. There's a constant stream of new capabilities, with little time to rest, but with less inertia to overcome, as practitioners and users are constantly in motion, much like data itself.