The internet of things and big data: Unlocking the power

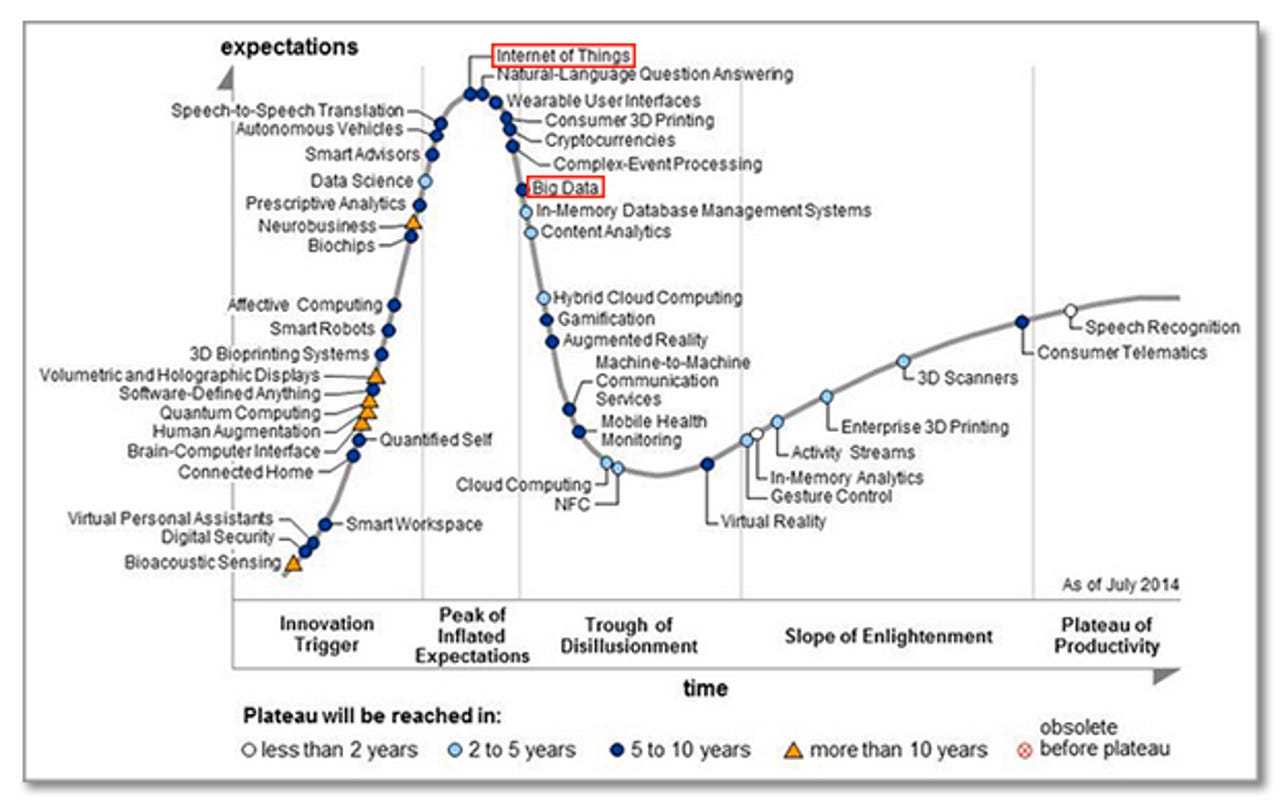

The 'internet of things' (IoT) and 'big data' are two of the most-talked-about technology topics of recent years, which is why they occupy places at or near the peak of analyst firm Gartner's most recent Hype Cycle for Emerging Technologies.

If you have somehow missed the hype, the IoT is a fast-growing constellation of internet-connected sensors attached to a wide variety of 'things'. Sensors can take a multitude of possible measurements, internet connections can be wired or wireless, and 'things' can be literally any object (living or inanimate) to which you can attach, or in which you can embed, a sensor. If you carry a smartphone, for example, you become a multi-sensor IoT 'thing', and many of your day-to-day activities can be tracked, analysed and acted upon.

Big data, meanwhile, is characterised by 'four Vs': volume, variety, velocity and veracity. That is, big data comes in large amounts (volume), is a mixture of structured and unstructured information (variety), arrives at (often real-time) speed (velocity) and can be of uncertain provenance (veracity). Such information is unsuitable for processing using traditional SQL-queried relational database management systems (RDBMSs), which is why a constellation of alternative tools -- notably Apache's open-source Hadoop distributed data processing system, plus various NoSQL databases and a range of business intelligence platforms -- has evolved to service this market.

The IoT and big data are clearly intimately connected: billions of internet-connected 'things' will, by definition, generate massive amounts of data. However, that in itself won't usher in another industrial revolution, transform day-to-day digital living, or deliver a planet-saving early warning system. As EMC and IDC point out in their latest Digital Universe report, organisations need to home in on high-value, 'target-rich' data that (1) is easy to access; (2) is available in real time; (3) has a large footprint (affecting major parts of the organisation or its customer base); and/or (4) can effect meaningful change, given the appropriate analysis and follow-up action.

As we shall see, there's a great deal less of this actionable data than you might think if you simply looked at the size of the 'digital universe' and the number of internet-connected 'things'.

How many IoT 'things'?

A huge number of 'things' could join the IoT, whose recent rise to prominence is the result of several trends conspiring to cause a tipping point: low-cost, low-power sensor technology; widespread wireless connectivity; huge amounts of available and affordable (largely cloud-based) storage and compute power; and plenty of internet addresses to go round, courtesy of the IPv6 protocol (2^128 addresses, versus 2^32 for IPv4).
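To put the two address-space figures side by side, the short Python sketch below simply evaluates the powers of two quoted above. The variable names are illustrative only, and the totals are raw address counts; the number of addresses that can actually be assigned to devices is smaller in both protocols because some ranges are reserved.

```python
# Address-space sizes implied by the address widths quoted in the text.
IPV4_BITS = 32
IPV6_BITS = 128

ipv4_addresses = 2 ** IPV4_BITS
ipv6_addresses = 2 ** IPV6_BITS

# Number of IPv6 addresses available for every single IPv4 address (2^96).
ratio = ipv6_addresses // ipv4_addresses

print(f"IPv4: {ipv4_addresses:,} addresses")
print(f"IPv6: {ipv6_addresses:.3e} addresses")
print(f"IPv6 / IPv4 = 2^{IPV6_BITS - IPV4_BITS} = {ratio:.3e}")
```

IPv4 tops out at roughly 4.3 billion addresses, which is already fewer than the connected-device counts discussed in this article, whereas the IPv6 space is larger by a factor of 2^96.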

Estimates and projections of the current and future number of internet-connected objects vary, depending on the definitions used and the optimism of whoever is doing the estimating and projecting. The best-known figures come from Cisco, which puts the current (February 2015) number at around 14.8 billion and the expected number in 2020 at around 50 billion (and that's just 2.77 percent of an estimated 1.8 trillion potentially connectable 'things').

EMC and IDC are somewhat more conservative, putting the 2020 IoT population at 32 billion, while Gartner comes in with 26 billion.

How much 'big' data?

Data was getting seriously 'big' even before IoT devices entered the picture. EMC and IDC have been tracking the size of the 'Digital Universe', or DU, since 2007 (the DU is all the digital data created, replicated and consumed in a single year). In 2012 EMC and IDC estimated that the DU would double every two years to reach 40 zettabytes (ZB) by 2020 -- a number since revised upwards to 44ZB (that's 44 trillion gigabytes). Astronomical numbers perhaps need astronomical illustrations, which may be why EMC/IDC pictured 44ZB as 6.6 stacks of 128GB iPad Air tablets reaching from Earth to the moon. The DU estimate for 2013 was 4.4ZB (or one stack of iPads reaching two-thirds of the way to the moon).

The 2013 4.4ZB DU estimate breaks down into 2.9ZB generated by consumers and 1.5ZB generated by enterprises. However, only 0.6ZB (15%) of the consumer portion is not touched by enterprises in some way, leaving enterprises responsible for the vast majority of the world's data (about 3.8ZB in 2013). As noted above, EMC and IDC forecast that the IoT will grow to 32 billion connected devices by 2020, which will contribute 10 percent of the DU (up from 2% in 2013). In terms of geography, EMC and IDC predict that the balance will swing from mature markets, which accounted for 60 percent of the DU in 2013, to emerging markets, which will generate a similar percentage in 2020, with the inflection point happening around 2016/17.

Fortunately for enterprises, the 'target-rich' data that can potentially deliver actionable business insights is estimated by EMC and IDC to be a more manageable 1.5 percent of the 2013 total of 4.4ZB (that's 0.066ZB, or 66 million terabytes).
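The unit conversions behind these Digital Universe figures are easy to lose track of, so here is a minimal Python sketch that reproduces them. It assumes decimal (SI) units, where 1ZB is 10^21 bytes, and it takes the 1.5 percent share against the 4.4ZB estimate, which is the base that yields the 0.066ZB quoted above. The helper `zb_to` and the variable names are made up for this illustration.

```python
import math

# Decimal (SI) unit sizes in bytes; an assumption, the report may define units differently.
BYTES_PER_GB = 10 ** 9
BYTES_PER_TB = 10 ** 12
BYTES_PER_ZB = 10 ** 21


def zb_to(unit_bytes: int, zettabytes: float) -> float:
    """Convert a quantity in zettabytes to a smaller unit of the given byte size."""
    return zettabytes * BYTES_PER_ZB / unit_bytes


# 44ZB expressed in gigabytes (the text describes this as 44 trillion GB).
du_2020_gb = zb_to(BYTES_PER_GB, 44)

# 'Target-rich' share: 1.5 percent of the 4.4ZB estimate, in ZB and in TB.
target_rich_zb = 0.015 * 4.4
target_rich_tb = zb_to(BYTES_PER_TB, target_rich_zb)

# Doubling time implied by growth from 4.4ZB (2013) to 44ZB (2020).
years = 2020 - 2013
doubling_time = years * math.log(2) / math.log(44 / 4.4)

print(f"44ZB = {du_2020_gb:.3e} GB")
print(f"Target-rich data = {target_rich_zb:.3f}ZB = {target_rich_tb:.3e} TB")
print(f"Implied doubling time = {doubling_time:.2f} years")
```

The implied doubling time works out at roughly 2.1 years, which is consistent with the 'doubling every two years' rule of thumb.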

Cisco's large-scale data tracking project, the Global Cloud Index (GCI), focuses on data centre and cloud-based IP traffic, estimating the 2013 amount at 3.1ZB/year (1.5ZB in 'traditional' data centres, 1.6ZB in cloud data centres). By 2018, this is predicted to have risen to 8.6ZB, with the majority of growth happening in cloud data centres (2.1ZB traditional, 6.5ZB cloud). According to Cisco, 75 percent of the 2018 traffic (6.4ZB) will remain within data centres (storage, production, development, and authentication traffic), with 17 percent (1.5ZB) flowing from data centres to users, and 8 percent (0.7ZB) flowing between data centres (replication and inter-database links).

What's startling, however, is Cisco's estimate of the amount of data generated by Internet of Everything (IoE) devices -- which encompasses people-to-people (P2P), machine-to-people (M2P), and machine-to-machine (M2M) connections -- by 2018: 403ZB, which is 47 times the estimated total data centre traffic and 267 times the estimated amount flowing between data centres and users (see Cisco's Forecast and Methodology white paper for more details). No wonder service providers and mobile operators are taking the IoT extremely seriously.
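As a rough cross-check of the Cisco figures quoted above, the Python sketch below recomputes the 2018 traffic split from the percentages and compares the 403ZB IoE estimate with total data centre traffic. All inputs are the rounded numbers from the text, so small differences from Cisco's own published values are to be expected; the dictionary keys and variable names are illustrative.

```python
import math

# Rounded Global Cloud Index figures as quoted in the text (ZB per year).
traffic_2013 = {"traditional": 1.5, "cloud": 1.6}
traffic_2018 = {"traditional": 2.1, "cloud": 6.5}

total_2013 = sum(traffic_2013.values())
total_2018 = sum(traffic_2018.values())

# Destination shares of the 2018 traffic, as fractions of the total.
shares_2018 = {
    "within data centres": 0.75,
    "data centre to user": 0.17,
    "between data centres": 0.08,
}
volumes_2018 = {name: share * total_2018 for name, share in shares_2018.items()}

# Data generated by IoE devices in 2018, relative to total data centre traffic.
ioe_data_2018 = 403.0
ioe_vs_total = ioe_data_2018 / total_2018

print(f"2013 total: {total_2013:.1f}ZB, 2018 total: {total_2018:.1f}ZB")
for name, volume in volumes_2018.items():
    print(f"  {name}: {volume:.2f}ZB")
print(f"IoE data is about {ioe_vs_total:.1f} times total data centre traffic")
```

The point the ratio makes is that only a small fraction of what IoE devices generate is expected to reach a data centre at all.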

Where will IoT/big data make an impact?

The IoT and big data are clearly growing apace, and are set to transform many areas of business and everyday life. But which particular sectors are likely to feel the IoT/big data disruption first? In its 2015 Internet of Things predictions, IDC notes that: "Today, over 50% of IoT activity is centered in manufacturing, transportation, smart city, and consumer applications, but within five years all industries will have rolled out IoT initiatives".

In their 2014 Digital Universe report, EMC and IDC see the IoT creating new business opportunities in five main areas, summarised in this slide:

To deliver on these opportunities, according to EMC's Bill Schmarzo, a new generation of IoT applications is required to address specific business needs such as: predictive maintenance; loss prevention; asset utilization; inventory tracking; disaster planning and recovery; downtime minimization; energy usage optimization; device performance effectiveness; network performance management; capacity utilization; capacity planning; demand forecasting; pricing optimization; yield management; and load balancing optimization.

If these and other nuts and bolts of the IoT/big data revolution can be put in place, there's a great deal of economic value to play for. For example, Cisco published a pair of studies in 2013 that evaluated the expected value at stake from the Internet of Everything (IoE) and came up with $14.4 trillion for the private sector and $4.6 trillion for the public sector.

In the private sector, Cisco expects the value to be driven in five main areas: asset utilisation ($2.5 trillion); employee productivity ($2.5 trillion); supply chain and logistics ($2.7 trillion); customer experience ($3.7 trillion); and innovation, including reducing time to market ($3.0 trillion). In the public sector the main proposed drivers are: employee productivity ($1.8 trillion); connected militarized defense ($1.5 trillion); cost reductions ($740 billion); citizen experience ($412 billion); and increased revenue ($125 billion).
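The component figures above can be checked against the headline totals with a few lines of Python. The sketch below uses whole billions of dollars to avoid floating-point rounding; the dictionary keys are shortened labels chosen for this example, not Cisco's own category names.

```python
# Cisco's value-at-stake components as quoted in the text, in billions of US dollars.
private_sector = {
    "asset utilisation": 2500,
    "employee productivity": 2500,
    "supply chain and logistics": 2700,
    "customer experience": 3700,
    "innovation": 3000,
}
public_sector = {
    "employee productivity": 1800,
    "connected militarized defense": 1500,
    "cost reductions": 740,
    "citizen experience": 412,
    "increased revenue": 125,
}

private_total = sum(private_sector.values())
public_total = sum(public_sector.values())

print(f"Private sector: ${private_total / 1000:.1f} trillion")
print(f"Public sector:  ${public_total / 1000:.3f} trillion")
```

The private-sector components add up to exactly $14.4 trillion, while the public-sector components come to $4.577 trillion, which rounds to the $4.6 trillion headline figure.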

As usual when it comes to the IoE/IoT, Cisco's economic value predictions err on the optimistic side; other analysts are generally more conservative -- although the numbers bandied about are still huge.

Barriers to widespread IoT/big data value delivery

Before the IoT/big data nexus can deliver on its promise, there are a number of barriers to overcome. The main ones are summarised below.

Standards

For the IoT to work, there must be a framework within which devices and applications can exchange data securely over wired or wireless networks. One player in this area is OneM2M, an umbrella organisation including seven standards bodies, five global ICT fora and over 200 companies (mostly from the telecoms and IT industries). In February this year, OneM2M issued Release 1, a set of 10 specifications covering requirements, architecture, API specifications, security solutions and mapping to common industry protocols (such as CoAP, MQTT and HTTP). "Release 1 utilises well-proven protocols to allow applications across industry segments to communicate with each other as never before -- not only moving M2M forward but actually enabling the Internet of Things," said Dr Omar Elloumi, Head of M2M and Smart Grid Standards at Alcatel-Lucent and OneM2M Technical Plenary Chair, in a statement.

OneM2M has also published a useful white paper that characterises the background to its mission thus: "The emerging need for interoperability across different industries and applications has necessitated a move away from an industry-specific approach to one that involves a common platform bringing together connected cars, healthcare, smart meters, emergency services, local authority services and the many other stakeholders in the ecosystem".

Not surprisingly, given the scope and potential value of the IoT market, there are plenty of other standards bodies vying to get their ideas adopted. These include: the AllSeen Alliance, Google's The Physical Web, the Industrial Internet Consortium, the Open Interconnect Consortium and Thread.

Security & privacy

According to IDC, "Within two years, 90% of all IT networks will have an IoT-based security breach, although many will be considered 'inconveniences'...Chief Information Security Officers (CISOs) will be forced to adopt new IoT policies". Progress on data standards (see above) will help here, but there's no doubt that security and privacy are a big worry with the IoT and big data -- particularly when it comes to areas like healthcare or critical national infrastructure.

The IoT was certainly prominent in the security predictions for 2015 issued by analysts and other pundits at the beginning of the year. Here's a selection:

- Your refrigerator is not an IT security threat. Industrial sensors are (Websense)

- Attacks on the Internet of Things will focus on smart home automation (Symantec)

- Internet of Things attacks move from proof-of-concept to mainstream risks (Sophos)

- The gap between ICS/SCADA and real world security only grows bigger (Sophos)

- Technological diversity will save IoE/IoT devices from mass attacks but the same won't be true for the data they process (Trend Micro)

- A wearables health data breach will spur FTC action (Forrester)

It's not all doom and gloom, though. Two commentators foresaw security tightening around critical infrastructure in 2015, for example:

- Critical infrastructure will see security improvements (Neohapsis)

- Greater focus on securing our critical infrastructure (Damballa)

Big data featured in the 2015 security predictions too, but not to such an extent:

- Rise of Salami attacks will leave a bad taste at the big data banquet (Varonis Systems)

- Machine learning will be a game-changer in the fight against cyber-crime (Symantec)

- Big data will become a buzzword for the bad guys too (Neohapsis)

As OneM2M points out, security in the IoT is tricky because the multiple stakeholders will have different needs: "For a telecoms operator, security is about ensuring availability; for a customer organisation, it's about protecting their data; and for an M2M and IoT provider it's about ensuring uptime...The huge diversity of M2M and IoT device types, their different capabilities and the range of deployment scenarios makes security a unique challenge for the M2M and IoT industry".

Network & data centre infrastructure

The vast amounts of data that will be generated by IoT devices will put enormous pressure on network and data centre infrastructure. IoT data flows will be primarily from sensors to applications, and will range from continuous to bursty, depending on the type of application.

As Gartner points out, the magnitude of IoT-related network connections and data volumes is likely to favour a distributed approach to data centre management, with multiple 'mini-data centres' performing initial processing and relevant data forwarded over WAN links to a central site for further analysis. This will present serious issues around storage for (necessarily selective) data backup, network bandwidth and data centre capacity planning, where Data Center Infrastructure Management, or DCIM, tools will become increasingly important. Cisco, meanwhile, has coined the term 'fog computing' to describe data processing at the network edge, to get around location-based and/or network latency issues -- something that will be a feature of the IoT.
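The distributed pattern described above, in which an edge site does the initial processing and forwards only the relevant data, can be sketched in a few lines of Python. This is a toy illustration under stated assumptions rather than any vendor's design: the `Reading` and `Summary` types, the `summarise_at_edge` function and the alert threshold are all hypothetical, and a real deployment would also need to handle time windows, transport and failures.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class Reading:
    """One raw sensor measurement arriving at the edge site (hypothetical type)."""
    sensor_id: str
    value: float


@dataclass(frozen=True)
class Summary:
    """Compact per-sensor record forwarded over the WAN to the central site."""
    sensor_id: str
    count: int
    mean_value: float
    max_value: float
    alerts: Tuple[float, ...]  # raw values above the threshold, kept in full


def summarise_at_edge(readings: Iterable[Reading], alert_threshold: float) -> List[Summary]:
    """Group raw readings by sensor and reduce each group to a single summary."""
    by_sensor: Dict[str, List[float]] = {}
    for reading in readings:
        by_sensor.setdefault(reading.sensor_id, []).append(reading.value)

    return [
        Summary(
            sensor_id=sensor_id,
            count=len(values),
            mean_value=mean(values),
            max_value=max(values),
            alerts=tuple(v for v in values if v > alert_threshold),
        )
        for sensor_id, values in by_sensor.items()
    ]


if __name__ == "__main__":
    raw = [Reading("pump-1", 20.5), Reading("pump-1", 21.5), Reading("pump-2", 95.0)]
    for summary in summarise_at_edge(raw, alert_threshold=90.0):
        print(summary)
```

The design choice being illustrated is the trade-off Gartner describes: the central site receives one small record per sensor instead of every raw reading, at the cost of deciding at the edge which detail is worth keeping.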

Analytics tools

The volume, velocity and variety (not to mention variable veracity) of IoT-generated data make it no easy task to select or build an analytics solution that can generate useful business insight. The most recent (Q4 2014) analyst report to specifically address this issue is from ABI Research, which includes a section on vendors that operate in different parts of the analytics pipeline (data integration, data storage, core analytics and data presentation), as well as 'full-stack' vendors like IBM, Microsoft, Oracle, SAP and Software AG.

Skills

The intersection of the IoT and big data is a multi-disciplinary field, and specialised skills will be required if businesses are to extract maximum value from it. Two kinds of people will be in demand: business analysts who can frame appropriate questions to be asked of the available data and present the results to decision makers; and data scientists who can orchestrate the (rapidly evolving) cast of analytical tools and curate the veracity of the data entering the analysis pipeline. In rare cases, the business analyst and the data scientist may be one and the same (valuable) person.

Outlook

We may well be on the threshold of an IoT/big data revolution, but if Gartner's Hype Cycle is a reasonable model, then we still have the backlash (a.k.a. the Trough of Disillusionment) and the rehabilitation (a.k.a. the Slope of Enlightenment) to contend with. Barriers to mainstream adoption that need to be addressed include data standards, security and privacy, network and data centre infrastructure, the availability of appropriate analytical tools, and access to business analysis and data science skills that will allow actionable insights to be extracted from these tools.

Looking further out, it's possible to imagine a world where augmented-reality platforms like Microsoft's recently unveiled HoloLens have matured, and people routinely work and play within an information-rich amalgam of the physical and the IoT-augmented environment.

The UK government's Walport report and an analyst's response

The key section of the report is the executive summary, which contains ten clear and specific recommendations:

- Government needs to foster and promote a clear aspiration and vision for the Internet of Things. The aspiration should be that the UK will be a world leader in the development and implementation of the Internet of Things. The vision is that the Internet of Things will enable goods to be produced more imaginatively, services to be provided more effectively and scarce resources to be used more sparingly.

- Government has a leadership role to play in delivering the vision and should set high ambitions. Government should remove barriers and provide catalysis. There are eight areas for action: Commissioning; Spectrum and networks; Standards; Skills and research; Data; Regulation and legislation; Trust; Co-ordination.

- Government must be an expert and strategic customer for the Internet of Things. It should use informed buying power to define best practice and to commission technology that uses open standards, is interoperable and secure. It should encourage all entrants to market; from start-ups to established players. It should support scalable demonstrator projects to provide the environment and infrastructure for developers to try out and implement new applications.

- (a) Government should work with experts to develop a roadmap for an Internet of Things infrastructure. (b) Government should collaborate with industry, the regulator and academia to maximise connectivity and continuity, for both static and mobile devices, whether used by business or consumers.

- With the participation of industry and our research communities, Government should support the development of standards that facilitate interoperability, openness to new market entrants and security against cybercrime and terrorism. Government and others can use expert commissioning to encourage participants in demonstrator programmes to develop standards that facilitate interoperable and secure systems. Government should take a proactive role in driving harmonisation of standards internationally.

- Developing skilled people starts at school. The maths curriculum in secondary school should move away from an emphasis on calculation per se towards using calculation to solve problems. Government, the education sector and businesses should prioritise efforts to develop a skilled workforce and a supply of capable data scientists for business, the third sector and the Civil Service.

- Open application programming interfaces should be created for all public bodies and regulated industries to enable innovative use of real-time public data, prioritising efforts in the energy and transport sectors.

- Government should develop a flexible and proportionate model for regulation in domains affected by the Internet of Things, to react quickly and effectively to technological change, and balance the consideration of potential benefits and harms. The Information Commissioner will play a key role in the area of personal data. Regulators should be held accountable for all decisions, whether these accelerate or delay applications of the Internet of Things that fall within the scope of regulation.

- The Centre for Protection of National Infrastructure (CPNI) and Communications and Electronics Security Group (CESG) should work with industry and international partners to agree best practice security and privacy principles based on "security by default".

- The Digital Economy Council should create an Internet of Things advisory board, bringing together the private and public sectors. The board would have a remit to: co-ordinate government and private sector funding and support of the relevant technologies; foster public-private collaboration where this will maximise the efficiency and effectiveness of implementation of the Internet of Things; work with government to advise policymakers when regulation or legislation may be needed; maintain oversight and awareness of potential risks and vulnerabilities associated with the implementation of the Internet of Things; and promote public dialogue. To be effective this board should be supported by an adequately funded secretariat.

Responding to the Walport report, Jeremy Green, principal analyst at IoT/big data specialist Machina Research, commended it as "a surprisingly strong document with an excellent analysis of the role government can play in co-ordinating and promoting a healthy IoT sector". Green did criticise the report for "a haphazard and partial set of supporting case studies" and noted "scope for a more joined-up approach to maintaining a database of deployments". However, overall Machina Research feels that "this report deserves to be read widely and taken forward at a departmental level".