Verta launches new ModelOps product for hybrid environments

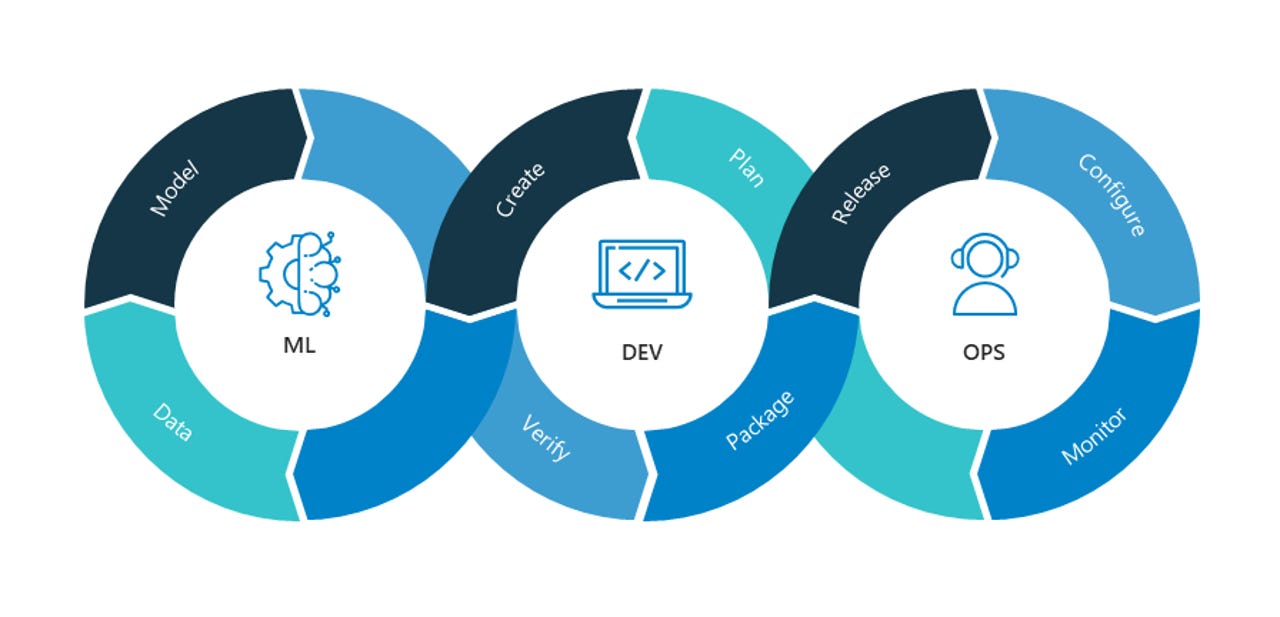

Verta, an AI/ModelOps company whose founder created the open source ModelDB catalog for versioning models, has launched with a $10 million Series A round led by Intel Capital. The Verta system tackles an increasingly familiar problem: not only getting ML models operationalized, but also tracking their performance and drift over time. Verta is hardly the only tool on the market to do so, but the founder claims that it tracks additional parameters not always caught by model lifecycle management systems.

While Verta shares some capabilities with the many data science platforms now on the market, its focus is more on the operational challenges of deploying models and keeping them on track.

As noted, it starts with model versioning. ModelDB was created by Verta founder Manasi Vartak, a software engineering veteran of Facebook, Google, Microsoft, and Twitter, as part of her doctoral work at MIT. It versions four aspects of models: code, data sources, hyperparameters, and the compute environment on which the model was designed to run. Since the release of the ModelDB project on GitHub, it has been used by numerous Fortune 500 companies for version tracking.
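To make the four versioned aspects concrete, here is a minimal Python sketch of what a version record could look like. It is an illustration only and not ModelDB's actual schema or API: the `ModelVersion` class, its field names, and the `fingerprint` helper are all hypothetical.

```python
from dataclasses import dataclass, asdict
import hashlib
import json


@dataclass(frozen=True)
class ModelVersion:
    """Hypothetical record of the four versioned aspects (not ModelDB's schema)."""
    code_commit: str        # e.g. a Git commit hash for the training code
    data_sources: tuple     # identifiers or paths of the datasets used
    hyperparameters: dict   # training settings such as learning rate
    environment: dict       # library name -> pinned version

    def fingerprint(self) -> str:
        """Return a short, stable hash over all four aspects."""
        # sort_keys makes the serialization independent of dict ordering
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


v1 = ModelVersion(
    code_commit="abc123",
    data_sources=("s3://example-bucket/train.csv",),
    hyperparameters={"learning_rate": 0.01, "epochs": 10},
    environment={"scikit-learn": "0.22.2"},
)
# Same code, data, and environment; only one hyperparameter differs.
v2 = ModelVersion(
    code_commit="abc123",
    data_sources=("s3://example-bucket/train.csv",),
    hyperparameters={"learning_rate": 0.05, "epochs": 10},
    environment={"scikit-learn": "0.22.2"},
)
print(v1.fingerprint() != v2.fingerprint())
```

Hashing all four aspects together means a change to any one of them, even a single hyperparameter, yields a distinct version identifier.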

With version tracking as the cornerstone, Verta includes a registry for managing production versions and approval workflows. Like other model monitoring tools, it deploys agents (in this case, proxies) that sit in front of the model and track data ingress and egress, which can be used for monitoring performance. But it also tracks a number of interdependencies that are not always covered by ModelOps tools, such as the libraries that are called upon by models that are deployed through containers. Models that rely on deprecated libraries are overlooked by many ML monitoring tools and can run under the radar. If sufficient metadata is stored in ModelDB, Verta can detect whether a model is being deployed for its specified purpose or used "off label" (for an unapproved use case or context).
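The proxy pattern and the two checks described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not Verta's implementation: `MonitoringProxy`, `find_deprecated`, the context labels, and the deprecated-library list are all hypothetical.

```python
import time
from typing import Any, Callable, Dict, List, Set, Tuple


class MonitoringProxy:
    """Hypothetical proxy that sits in front of a model and logs each call."""

    def __init__(self, model_fn: Callable[[Any], Any], approved_contexts: Set[str]):
        self.model_fn = model_fn
        self.approved_contexts = approved_contexts
        self.log: List[Dict[str, Any]] = []

    def predict(self, features: Any, context: str) -> Any:
        # "Off label" here means the caller's context is not on the approved list.
        off_label = context not in self.approved_contexts
        start = time.perf_counter()
        output = self.model_fn(features)
        latency = time.perf_counter() - start
        # Record ingress (features), egress (output), and timing for later analysis.
        self.log.append({
            "input": features,
            "output": output,
            "latency_s": latency,
            "context": context,
            "off_label": off_label,
        })
        return output


def find_deprecated(pinned: Dict[str, str],
                    deprecated: Set[Tuple[str, str]]) -> List[str]:
    """Return names of pinned libraries whose exact version is marked deprecated."""
    return sorted(name for name, version in pinned.items()
                  if (name, version) in deprecated)


proxy = MonitoringProxy(model_fn=sum, approved_contexts={"credit_scoring"})
proxy.predict([1, 2], context="credit_scoring")  # approved use
proxy.predict([3], context="marketing")          # flagged as off label
flagged = find_deprecated(
    {"numpy": "1.16.0", "requests": "2.31.0"},
    deprecated={("numpy", "1.16.0")},  # illustrative list, not a real advisory feed
)
```

Because the proxy wraps the model call rather than changing the model itself, the same logging can be placed in front of any model that exposes a callable.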

What Verta doesn't tackle is the data management side of machine learning – data ingestion, preparation, and exploration are typically handled by data science platforms. It also doesn't manage, for instance, model testing, but there's overlap when it comes to deployment and monitoring. Verta's chief distinction is that its monitoring covers more facets than most data science platforms. Furthermore, many of the model deployment, lifecycle management, and monitoring functions are covered in end-to-end cloud machine learning services, such as Amazon SageMaker, Azure Machine Learning, and Google Cloud AutoML.

Verta plays to hybrid environments: either where models are designed and run on premises or in a hybrid cloud, or where there is a mix of on-premises models and cloud managed services.

Admittedly, in an age of COVID, Series A rounds are a bit unusual; most VCs for the moment are doubling down on existing investments until they can better read the tea leaves for the post-COVID world. Clearly, Verta's $10 million round of financing was in the works for some time, aided by the prominence of its founder, who created an open source model version control system that gained traction. With the 1.0 product hitting general release, the next step for the 10-person company will be building the go-to-market team.