11 bio-inspired robots that walk, wiggle, and soar like the real things

BionicWheelBot (AKA "The Rolling Spider") by Festo

The biological model for the BionicWheelBot is the flic-flac spider (Cebrennus rechenbergi), which lives on the edge of the Sahara and was discovered by a bionics professor, Ingo Rechenberg.

The flic-flac can walk like a regular spider, but it can also propel itself by somersaulting. The bimodal locomotion makes it well-adapted to its environment. On even ground, it's twice as fast in rolling mode. But when terrain is uneven, normal eight-legged walking is faster.

Festo's BionicWheelBot is able to bend its legs to make its body a wheel. In rolling mode, the robot is far faster than most legged robots. But thanks to those legs, it can also traverse difficult terrain when necessary.

The robot has no practical application yet, but Festo's models could help equip future platforms with amazingly adaptable locomotion.

Secret agent fish by EPFL

Researchers at the École Polytechnique Fédérale de Lausanne, a Swiss university, have created a miniature robot that can swim with fish.

Sure, that's been done before. But this robot also learns how fish in the school it's infiltrated communicate with each other. It then mimics that communication and movement, allowing it to influence the school's behavior.

"We created a kind of 'secret agent' that can infiltrate these schools of small fish," says Frank Bonnet, a post-doc researcher at EPFL who is a co-author on the study.

J'accuse, robot fish!

Sarcos Guardian exoskeletons

Sarcos has developed two exoskeletons under its Guardian line, the Guardian GT and the Guardian XO.

The former is a massive set of robotic arms that can be mounted on a mobile base. A human operator slips their arms into a set of nearby master controllers and teleoperates the robot via normal arm and finger movements.

The Guardian XO is a more traditional exoskeleton suit worn by the operator to augment strength and endurance. There's also a more powerful XO Max version of the suit. Both will be commercially available in 2019.

The Guardian systems are mechanical approximations of various parts of the human skeletal structure. Sarcos' engineers worked hard to preserve ratios found in human anatomy.

The result is a system that can easily be controlled by a novice operator. Using these exoskeletons feels intuitive because we've had so much practice in our own bodies.

RoboBird by ClearFlight

Birds are great, but they can be a nuisance at times. And in sensitive spaces like airports, they can be a downright hazard.

ClearFlight Solutions' answer is a flapping robot bird designed to look like a falcon. (They also have a hawk version.)

By mimicking a bird of prey in look and movement, the flying robot effectively scares off smaller birds without the aid of potentially harmful netting or noise-emitting devices.

Space cleanup tool by Stanford and JPL

Okay, technically not a robot.

But this gecko-inspired gripper will almost certainly be used by robots in the future. In particular, space robots.

Turns out there's a lot of manmade space junk out there. Cleaning it up is tough. So Stanford University and JPL teamed up to create a mechanism that might grip space junk without having to lasso it.

"What we've developed is a gripper that uses gecko-inspired adhesives," according to Mark Cutkosky, professor of mechanical engineering.

Geckos have microscopic flaps on their feet. When those flaps come into contact with an object, a molecular bond forms between the gecko's feet and the surface of that object. These weak intermolecular forces, known as van der Waals forces, result from differences in the positions of electrons in molecules on the adhesive surface and on the surface being adhered to.

Crucially, the gripper doesn't require force to adhere to an object, which in space would cause the object to float away.

HEXA by Vincross

HEXA is a sensor-rich, six-legged robot that resembles a crab. It's designed to be a platform and not a finished product.

Because it has six legs, it can handle variable terrain. Sensors include a camera with night vision capability, two three-axis accelerometers, an infrared transmitter, and a distance-measuring sensor.

The idea is that developers can pick up one of these for about $500 and, using Vincross's standard developer kit, shape it into anything they'd like.

Some examples on the company's website include surveying volcanos on Mars or helping save lives after earthquakes.

Those are wonderful ideas ... but they don't make the idea of a robot crab any less creepy.

MantaDroid by the National University of Singapore

Manta rays move through the water as if they're flying. It's a hyper-efficient mode of undersea transportation that also gives the animals tremendous agility and speed.

Engineers at the National University of Singapore spent more than two years making an autonomous underwater vehicle that mimics the manta ray's locomotion with graceful precision.

Unlike undersea drones that use propellers, the National University of Singapore project is nearly silent. That makes it much more useful in environments where there may be sensitive sea life.

Undersea drones have a variety of applications, from inspecting undersea infrastructure to taking pollution readings in areas that are difficult for humans to access, such as spill sites.

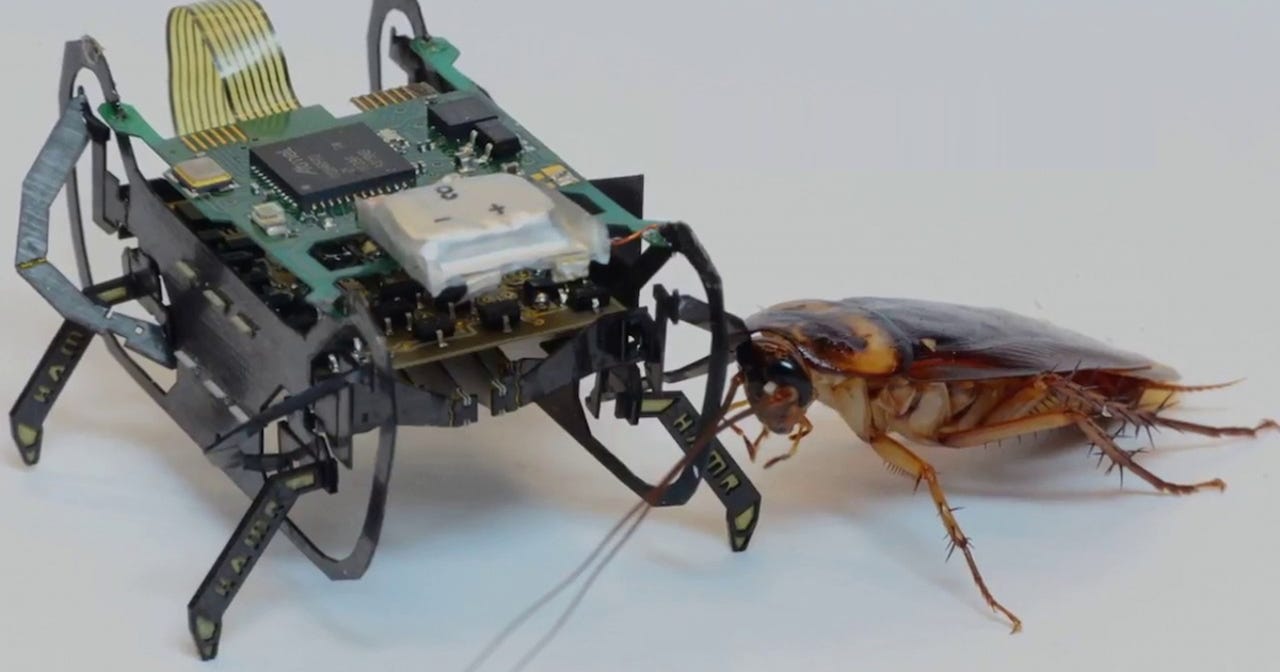

HAMR, a robotic cockroach from Harvard

Roboticists at the Harvard John A. Paulson School of Engineering and Applied Sciences (SEAS) created this centimeter-scale robot resembling a cockroach.

Like the insect from which the engineers drew their inspiration, the Harvard Ambulatory Microrobot (HAMR) can turn sharply, run at high speeds, climb, carry payloads, and survive long drops unharmed.

The real breakthrough with HAMR is the way it moves. Each leg has two actuators, which closely mirror the joints of a cockroach.

"Our techniques allow us to create robots that don't sacrifice complexity as the size is reduced and enabled us to create robots that rival some of the capabilities of their biological counterparts," explains SEAS engineering professor Robert Wood.

SoFi, the robotic fish by MIT

It looks like a fish. It moves like a fish.

But it's a robot. SoFi was built by researchers at MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL).

SoFi is untethered, an increasingly common trait in underwater drones as wireless communications protocols evolve.

Instead of relying on propellers, however, which are noisy and could scare away marine life, SoFi uses a bio-inspired swimming motion.

The side-to-side fish tail motion is achieved via a pump that sends water into two diaphragms in alternating sequence.

Soft robotic octopus by Sant'Anna School of Advanced Studies

Professor Cecilia Laschi from the Sant'Anna School of Advanced Studies is a leader in the emerging field of soft robotics.

For this project, Professor Laschi sought to make a robot that could move through water and over the seabed like an octopus.

Her robot sucks water into a chamber and expels it like a jet to move through water. The robot also uses its soft tentacles to clamber and scramble over the uneven ocean floor.

An eye-inspired camera by NCCR Robotics

Conventional cameras can help drones fix their location, but they require ample light to function effectively. Autonomous drones that rely on camera vision are also restricted to flying below speeds that cause motion blur, which renders vision algorithms useless.

So a group of researchers from the University of Zurich and NCCR Robotics decided to give drones a new way to see. Their innovation is an eye-inspired camera that can easily cope with high-speed motion and even see in near-dark conditions.

The invention is known as an event-based camera. Event cameras are bio-inspired vision sensors that output pixel-level brightness changes instead of standard intensity frames.

To conceptualize what that means, it's helpful to understand when they're useful. Traditional video can be broken down into a series of frames containing rich information at the pixel level about brightness and color. Event cameras, by contrast, only compare brightness at each pixel from one moment to the next.

An event camera that is standing still will yield very little usable information. Put it on a drone whipping through the sky, however, and the readings can be used by a computer to visualize an environment in real time while limiting the amount of data that needs processing.
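
To make the frame-versus-event distinction concrete, here is a minimal Python sketch. It is an illustration only, not the researchers' method: the function name `events_between`, the threshold value, and the tiny 3x3 "frames" are all made up for the example. It also compares two whole snapshots, whereas a real event camera has each pixel report changes on its own, without frames.

```python
import math

def events_between(prev, curr, threshold=0.2):
    """Return (row, col, polarity) for each pixel whose log-brightness
    changed by at least `threshold` between two snapshots.

    Hypothetical helper: the name and threshold are illustrative only.
    """
    events = []
    for r, (prev_row, curr_row) in enumerate(zip(prev, curr)):
        for c, (p, q) in enumerate(zip(prev_row, curr_row)):
            # Adding 1 avoids log(0) for completely dark pixels.
            delta = math.log(q + 1) - math.log(p + 1)
            if abs(delta) >= threshold:
                # Polarity: +1 means brighter, -1 means darker.
                events.append((r, c, 1 if delta > 0 else -1))
    return events

# A bright spot in the middle of a dark 3x3 scene...
static = [[10, 10, 10],
          [10, 200, 10],
          [10, 10, 10]]
# ...and the same spot after shifting one pixel to the right.
moved = [[10, 10, 10],
         [10, 10, 200],
         [10, 10, 10]]

print(events_between(static, static))  # []
print(events_between(static, moved))   # [(1, 1, -1), (1, 2, 1)]
```

The unchanged scene produces no output at all, while the moving spot produces just two events out of nine pixels. That is the data saving described above, at toy scale.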