AWS launches SageMaker Studio, a web-based IDE for machine learning

Amazon Web Services on Tuesday announced SageMaker Studio, a fully integrated development environment for machine learning. The web-based IDE lets you collect and store everything you need, whether it's code, notebooks or project folders, in one place behind a single pane of glass.

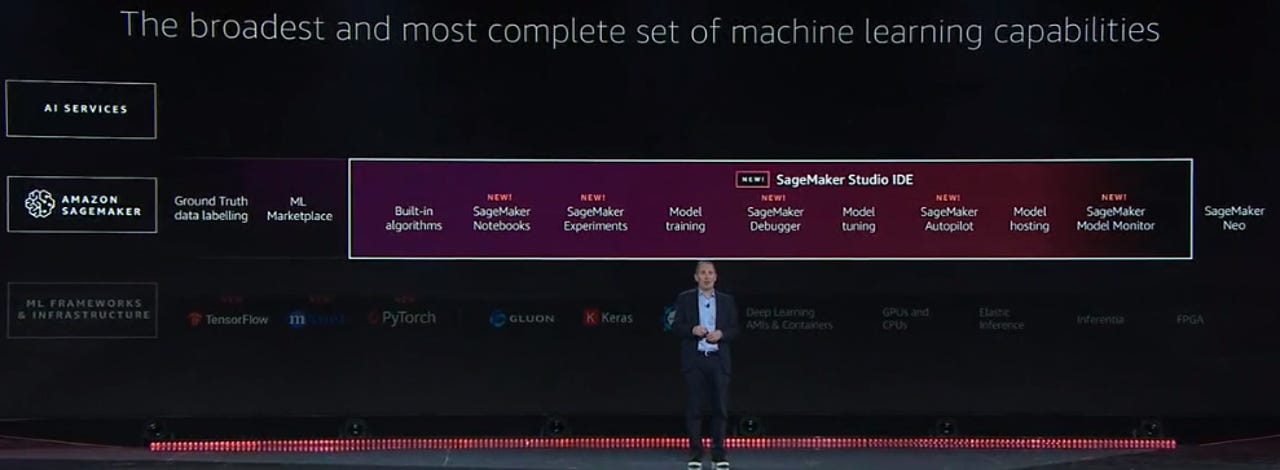

The new IDE is part of SageMaker, Amazon's end-to-end machine learning service. At the AWS re:Invent conference in Las Vegas, AWS CEO Andy Jassy said that the introduction of SageMaker was a "sea-level change" for machine learning.

"With SageMaker Studio, it's a giant leap forward," he said. "If you really want machine learning to be as expansive as we think it should be, you have to make it more accessible."

SageMaker Studio comprises several tools, including SageMaker Notebooks, SageMaker Experiments, SageMaker Debugger, SageMaker Model Monitor and SageMaker Autopilot.

In a nutshell, Jassy outlined a machine learning strategy that aims to automate a lot of headaches in building models. Here's a look at some of the key SageMaker Studio parts:

SageMaker Notebooks lets you spin up a notebook with one click using elastic compute, Jassy said, calling it "a much easier way to manage notebooks." There are no instances to provision, and notebook content is automatically copied and transferred to new instances.

SageMaker Experiments allows you to capture, organize and search every step of building, training and tuning your models, automatically. You can capture all of your model's parameters, configurations and results. You can browse them in real time, and you can search for older experiments.
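
To make the capture-and-search idea concrete, here is a minimal sketch in plain Python. It is not the SageMaker Experiments API; the `Trial` and `ExperimentLog` names, the run names and the metric values are all hypothetical, chosen only to illustrate recording each run's parameters and results and then querying them later.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Trial:
    """One training run: the parameters used and the metrics it produced."""
    name: str
    params: dict[str, Any]
    metrics: dict[str, float] = field(default_factory=dict)


class ExperimentLog:
    """Hypothetical in-memory log; a real service would persist trials."""

    def __init__(self) -> None:
        self.trials: list[Trial] = []

    def record(self, name: str, params: dict, metrics: dict) -> Trial:
        # Copy the dicts so later mutation by the caller cannot alter history.
        trial = Trial(name, dict(params), dict(metrics))
        self.trials.append(trial)
        return trial

    def search(self, predicate: Callable[[Trial], bool]) -> list[Trial]:
        # Return every recorded trial that satisfies the predicate.
        return [t for t in self.trials if predicate(t)]

    def best(self, metric: str) -> Trial:
        # Return the trial with the highest value for the given metric.
        return max(self.trials, key=lambda t: t.metrics[metric])


log = ExperimentLog()
log.record("run-1", {"learning_rate": 0.1, "epochs": 5}, {"accuracy": 0.81})
log.record("run-2", {"learning_rate": 0.01, "epochs": 10}, {"accuracy": 0.88})
log.record("run-3", {"learning_rate": 0.01, "epochs": 20}, {"accuracy": 0.86})

# Find older runs by parameter, then pick the best run by a metric.
slow_lr = log.search(lambda t: t.params["learning_rate"] == 0.01)
print([t.name for t in slow_lr])
print(log.best("accuracy").name)
```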

SageMaker Debugger allows developers to debug and profile their model training to improve the accuracy of their machine learning models. It's on by default and can provide real-time alerts and advice for optimizing training times and improving model quality.

With a capability called feature prioritization, Debugger puts a spotlight on the dimensions or features having an impact on the model. "It turns out if you have an under-performing neural network model, you might want to know which dimension it's leaving out," Jassy said.

SageMaker Model Monitor helps customers automatically detect concept drift in deployed models. It creates baseline statistics about data during training and compares data used to make predictions against that baseline. It alerts developers whenever any drift is detected.
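
The baseline-and-compare approach described here can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions: it tracks a single numeric feature and flags drift when the mean of live data moves more than a chosen number of baseline standard deviations. The function names, the threshold and the sample values are hypothetical, and the statistics Model Monitor actually computes are not described in the announcement.

```python
from statistics import mean, stdev


def build_baseline(training_values):
    """Summarize one feature of the training data (assumed: mean and stdev)."""
    return {"mean": mean(training_values), "stdev": stdev(training_values)}


def drift_detected(baseline, live_values, threshold=3.0):
    """Flag drift when the live mean is more than `threshold` baseline
    standard deviations away from the baseline mean (an assumed rule)."""
    shift = abs(mean(live_values) - baseline["mean"])
    return shift > threshold * baseline["stdev"]


# Hypothetical feature values seen during training.
training = [10.0, 11.0, 9.0, 10.5, 9.5, 10.2, 9.8]
baseline = build_baseline(training)

# Hypothetical values seen at prediction time.
similar = [10.1, 9.9, 10.4, 9.7]
shifted = [15.2, 14.8, 15.5, 15.1]

print(drift_detected(baseline, similar))  # False: live data matches the baseline
print(drift_detected(baseline, shifted))  # True: live mean has moved far away
```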

"People say, 'How can you help me when I have models that have been working for a period of time and suddenly it's not working well anymore?'" Jassy said.

Lastly, with SageMaker Autopilot, the process of building models is fully automated. It handles algorithm selection, data preprocessing and model tuning, as well as all infrastructure. Using a single API call, or a few clicks in SageMaker Studio, SageMaker Autopilot first inspects your data set, then runs a number of candidates to determine the optimal combination of data preprocessing steps, machine learning algorithms and hyperparameters. Finally, it trains an Inference Pipeline that you can easily deploy either on a real-time endpoint or for batch processing.
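
The candidate search at the heart of that description can be illustrated with a toy example. The sketch below is not Autopilot's API or its search strategy; it assumes a tiny hand-made data set, two hypothetical preprocessing options, a single algorithm (a hand-written k-nearest-neighbors classifier) and one hyperparameter, and simply scores every combination on a validation set to pick the best one.

```python
from itertools import product


def fit_identity(train_rows):
    """Preprocessing candidate 1: leave features untouched."""
    return lambda row: row


def fit_min_max(train_rows):
    """Preprocessing candidate 2: rescale each feature to [0, 1] using
    ranges learned from the training rows only."""
    cols = list(zip(*train_rows))
    lows = [min(c) for c in cols]
    spans = [(max(c) - min(c)) or 1.0 for c in cols]
    return lambda row: tuple((v - lo) / s for v, lo, s in zip(row, lows, spans))


def knn_predict(train_x, train_y, row, k):
    """Majority vote among the k nearest training rows (squared Euclidean)."""
    nearest = sorted(
        zip(train_x, train_y),
        key=lambda pair: sum((a - b) ** 2 for a, b in zip(pair[0], row)),
    )[:k]
    votes = [label for _, label in nearest]
    return max(set(votes), key=votes.count)


def evaluate(fit_transform, k, train, valid):
    """Validation accuracy for one (preprocessing, k) candidate."""
    train_rows, train_y = train
    valid_rows, valid_y = valid
    transform = fit_transform(train_rows)
    train_x = [transform(r) for r in train_rows]
    hits = sum(
        knn_predict(train_x, train_y, transform(r), k) == y
        for r, y in zip(valid_rows, valid_y)
    )
    return hits / len(valid_y)


def search(train, valid):
    """Score every candidate combination and return all results plus the best."""
    preprocessors = {"raw": fit_identity, "min_max": fit_min_max}
    ks = (1, 3)  # odd values avoid tied votes with two classes
    results = [
        ({"preprocess": name, "k": k}, evaluate(fit, k, train, valid))
        for (name, fit), k in product(preprocessors.items(), ks)
    ]
    return results, max(results, key=lambda r: r[1])


# Hypothetical data: the first feature carries the signal, the second is
# large-scale noise that swamps distances unless features are rescaled.
train = (
    [(0.1, 900.0), (0.2, 100.0), (0.3, 500.0),
     (0.8, 120.0), (0.9, 880.0), (0.7, 480.0)],
    [0, 0, 0, 1, 1, 1],
)
valid = (
    [(0.15, 130.0), (0.85, 890.0), (0.25, 470.0), (0.75, 510.0)],
    [0, 1, 0, 1],
)

results, best = search(train, valid)
for config, score in results:
    print(config, score)
print("best:", best)
```

On this toy data the rescaled candidates win because the noisy second feature no longer dominates the distance calculation, which is the kind of interaction between preprocessing and algorithm that an automated search is meant to surface.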