Intel launches toolkit to bring computer vision to the edge

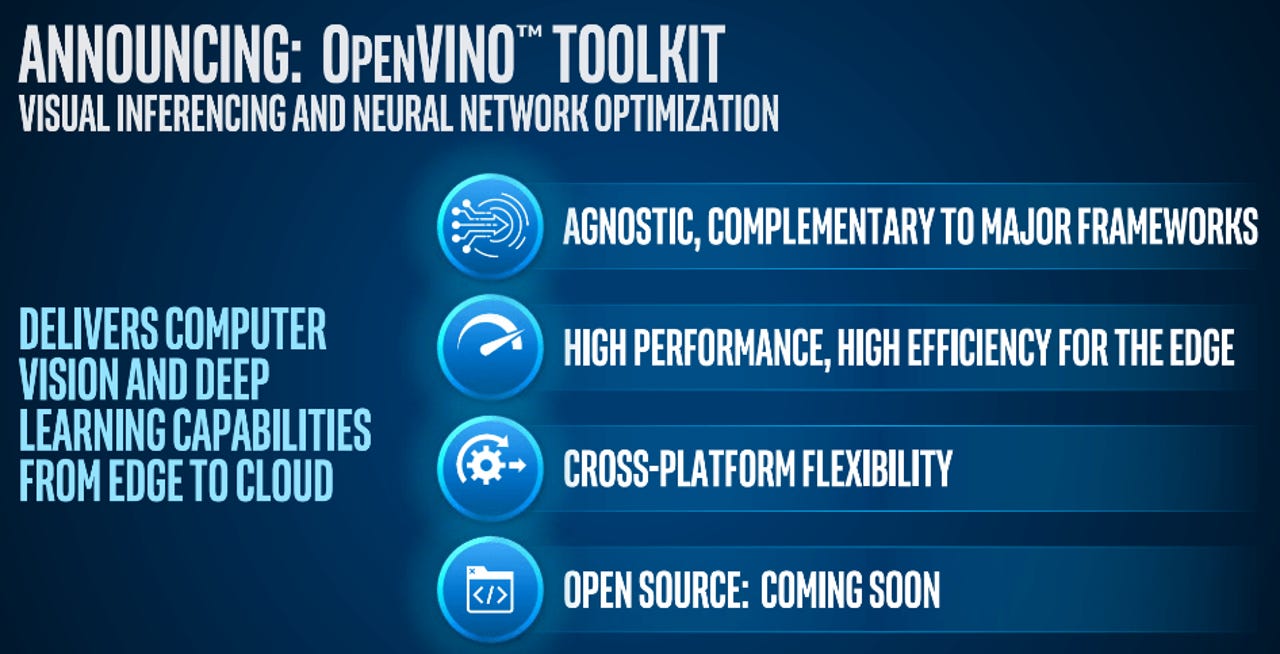

Intel on Wednesday is unveiling OpenVINO, a toolkit designed to easily bring computer vision and deep-learning inference to vision applications at the edge.

The OpenVINO (Open Visual Inference & Neural network Optimization) toolkit enables developers to build and train AI models in the cloud -- on popular frameworks such as TensorFlow, MXNet, and Caffe -- and deploy them across a broad range of products. It takes advantage of Intel's investments in different AI accelerator technologies, including CPUs, field-programmable gate arrays (FPGAs), and Movidius vision processing units (VPUs).

"There's more than one architecture for intelligence," Intel's Adam Burns told ZDNet.

OpenVINO provides a set of optimization capabilities and a runtime engine that allows developers to run their model on the architecture that best suits their needs, whether it's a highly tuned FPGA, an efficient VPU, or another choice. For instance, in a retail setting, a developer might want to deploy computer vision capabilities across a range of edge applications, including point of sale, digital signage, and security cameras.
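To make the idea of matching a model to "the architecture that best suits their needs" concrete, here is a minimal sketch in plain Python. It does not call OpenVINO itself: the helper names (`pick_device`, `plan_deployment`), the device labels, and the per-application preference lists are all illustrative assumptions, not part of Intel's toolkit. It only shows how the retail applications mentioned above might each be mapped to whichever accelerator happens to be present on a given edge box.

```python
from typing import Dict, Iterable, Sequence

# Hypothetical preference lists for the retail scenario; the device labels
# and their ordering are assumptions made for illustration only.
PREFERENCES: Dict[str, Sequence[str]] = {
    "point_of_sale": ["CPU"],
    "digital_signage": ["VPU", "CPU"],
    "security_camera": ["FPGA", "VPU", "CPU"],
}


def pick_device(preferred: Sequence[str], available: Iterable[str]) -> str:
    """Return the first preferred device that is present, else fall back to CPU."""
    present = set(available)
    for name in preferred:
        if name in present:
            return name
    return "CPU"  # assumed universal fallback


def plan_deployment(available: Iterable[str]) -> Dict[str, str]:
    """Map each application to the best device available on this machine."""
    present = set(available)  # materialize once so any iterable works
    return {app: pick_device(prefs, present) for app, prefs in PREFERENCES.items()}


if __name__ == "__main__":
    # An edge box with a CPU and a VPU, but no FPGA.
    for app, device in plan_deployment(["CPU", "VPU"]).items():
        print(f"{app}: {device}")
```

In a real deployment, the chosen device label would be handed to the toolkit's runtime engine when the model is loaded; the selection logic here is deliberately kept separate from any specific API.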

Intel says it's seeing significant growth in IoT markets worldwide, in part because of a significant increase in vision applications leveraging AI. Businesses need intelligence at the edge for a number of reasons, Burns said: They may want to store less data long term, they may be driven by bandwidth constraints, or they may need to make immediate decisions based on the data collected. Intel's IoT business in Q1 grew at a rate of 17 percent year over year to deliver $840 million in revenue.

OpenVINO should be applicable across a broad range of markets, including industrial, retail, energy, and medical, Burns said. It's already being used for a variety of use cases -- for instance, Dahua is using it for smart city and traffic solutions, and GE Healthcare is using it for medical imaging. Other companies using it include AgentVi, Amazon Web Services, Dell, and Honeywell.
