Microsoft expands its AI partnership with Meta

Microsoft and Meta are extending their ongoing AI partnership, with Meta selecting Azure as "a strategic cloud provider" to accelerate its own AI research and development. Microsoft officials shared more details about the partnership on Day 2 of the Microsoft Build 2022 developers conference.

Microsoft and Meta -- back when it was still known as Facebook -- announced the ONNX (Open Neural Network Exchange) format in 2017 with the goal of enabling developers to move deep-learning models between different AI frameworks. In 2018, Microsoft open-sourced the ONNX Runtime, the inference engine for models in the ONNX format.

Today, Meta officials said they'll be using Azure to accelerate research and development across the Meta AI group. Meta plans to use a dedicated Azure cluster of 5,400 GPUs running on the latest virtual machine (VM) series in Azure (NDm A100 v4 series, featuring NVIDIA A100 Tensor Core 80GB GPUs) for some of its large-scale AI research workloads. Last year, Meta began using Azure VMs for some of its large-scale AI research, specifically distributed AI training.

The pair also said today that they will collaborate to scale PyTorch adoption on Azure. Last year, Microsoft committed publicly to stepping up its support for enterprise customers that are using Meta's PyTorch deep-learning framework on Azure. I think the new piece here is Microsoft committing to build "in the coming months" new PyTorch development accelerators to facilitate rapid implementation of PyTorch-based solutions on Azure.

AI development has been a big theme at Build this week, with Microsoft announcing a new Arm-based dev kit running on the Qualcomm Snapdragon platform that will be available sometime later this year. One of the biggest claims to fame of the coming "Project Volterra" dev kit is its ability to run AI workloads faster and more efficiently on Arm than on other processors. (Microsoft still hasn't released any tech specs, including information on the processors that will be onboard.)

Microsoft's newly announced Power Apps Express Design capability uses AI models from Azure Cognitive Services to turn drawings, images, PDFs and Figma design files into applications.

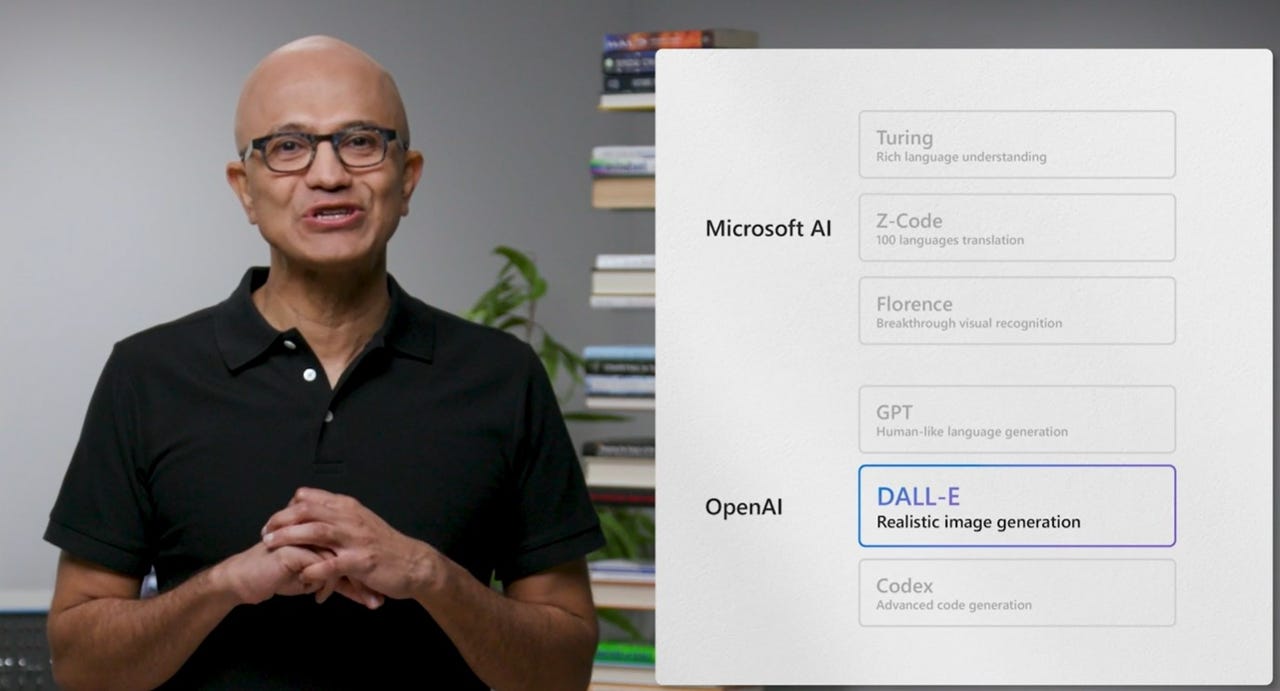

Microsoft also announced this week that the Azure OpenAI Service is now available in preview. Those in the preview will be able to access different models from OpenAI, including the GPT-3 base series (Ada, Babbage, Curie and Davinci), the Codex series and embedding models, and combine them with the enterprise capabilities of Azure. In 2019, Microsoft invested $1 billion in OpenAI in exchange for commitments from OpenAI to make Microsoft its "preferred partner for commercializing new AI technologies."

Another Microsoft Cognitive Service, the Cognitive Service for Language, now offers summarization for documents and conversations. This could prove useful in call centers, Microsoft officials suggested. Microsoft is also making new Language Service capabilities generally available, including custom named entity recognition, which can help developers organize and categorize text, such as a support ticket or invoice, with a customer's domain-specific labels.

Microsoft also announced that the Azure Machine Learning responsible AI dashboard is in preview. This capability will help developers and data scientists more easily implement responsible AI, Microsoft officials said.

Microsoft's current set of AI models includes Turing for rich language understanding, Z-Code for language translation and Florence for visual recognition. Its family of Azure Cognitive Services includes building-block services for Vision, Speech, Language and Decision, plus its OpenAI service. And among the existing Azure Applied AI Services are Cognitive Search, Form Recognizer, Immersive Reader, Bot Service and Video Analyzer.