Apple previews new iPhone accessibility features Live Captions, Door Detection and Apple Watch Mirroring

We're only a few weeks away from the opening keynote of Apple's annual Worldwide Developers Conference, where we'll see the company preview upcoming software features and changes for the iPhone and the rest of its hardware lineup.

However, on Tuesday, Apple gave us a preview of several new accessibility features the company states will arrive on the iPhone, iPad and Apple Watch later this year -- presumably in iOS 16 and iPadOS 16.

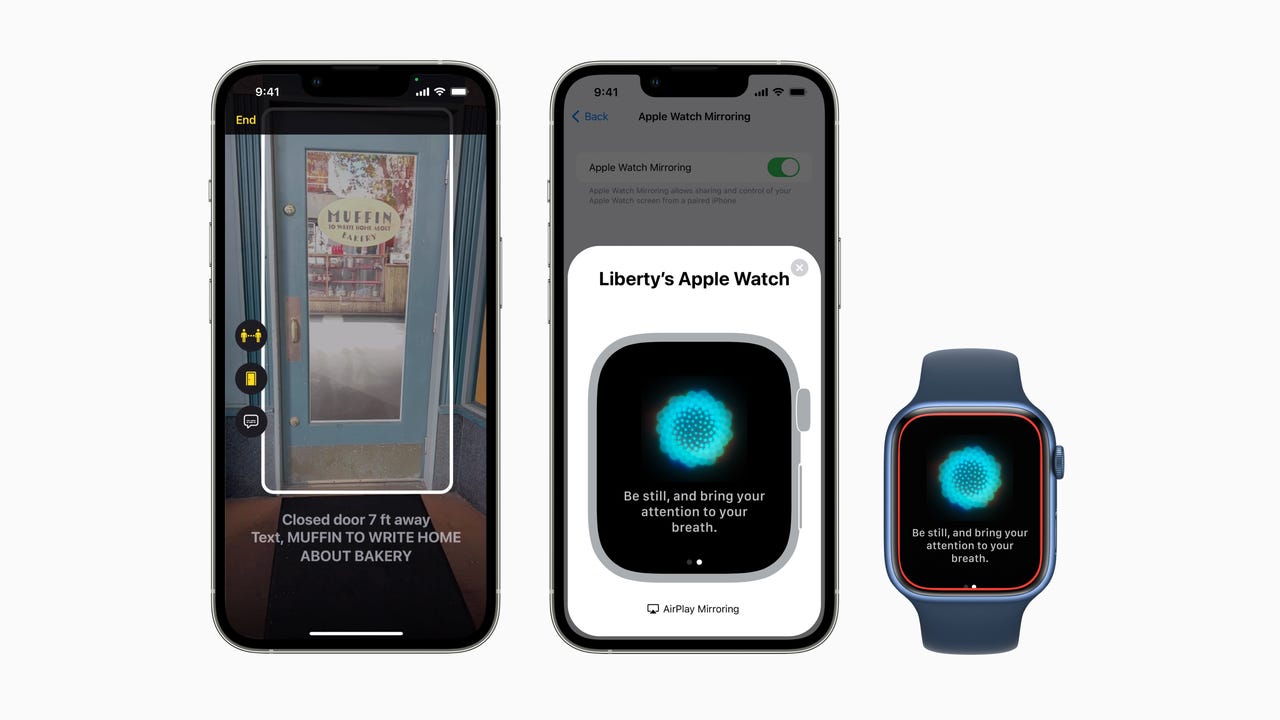

For users who are blind or have low vision, a new Door Detection feature uses an iPhone or iPad's LiDAR Scanner and rear camera to identify a doorway. It tells the user how far away the door is and describes its relevant attributes -- such as whether it's open or closed, whether it opens in or out, and what kind of handle it has. Apple also states that the feature is able to read room numbers or any signs near or on the door itself.

Google added the ability to turn any audio coming from an Android phone into Live Captions a couple of years ago, and now Apple is adding a similar feature to the iPhone, iPad and Mac. The feature will work with any audio that's playing on your Apple device, including a conversation with a person who is sitting next to you.

Apple Watch users will have a new option to mirror the Watch's display to their iPhone, providing larger touch targets and making it easier to control the watch from the iPhone's screen. There will also be new Quick Actions on the Apple Watch that enable features like a double-pinch gesture to answer or end a call or control media playback.

Additional accessibility features coming to Apple's hardware products include a Buddy Controller feature that allows a second party to help someone play a game using a second game controller, combining the two controllers into one. There will also be an option to change how long Siri waits before responding to a request, a Voice Control spelling mode for letter-by-letter spelling, improved sound recognition for custom sounds, and more.

These updates are nice to see, and the video demos Apple published in its press release are impressive.

However, what piques my interest the most is this: if Apple is pre-announcing features for iOS 16 instead of holding them for the keynote, what else are we going to see at WWDC? Oh, and then there's the fact that the door detection feature is begging to be used on a pair of augmented reality glasses, rather than on an iPhone or iPad you have to hold up as you walk down the street or through a store.