Kinesis: Amazon Web Services's answer to big data?

LAS VEGAS---If there is one thing Amazon doesn't lack, it's data.

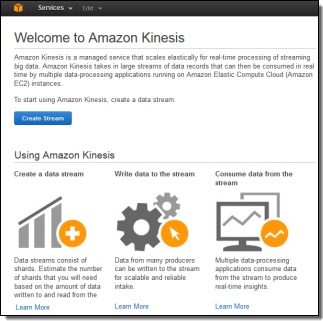

Amazon Web Services is making a bigger push to monetize that exponentially growing treasure trove through Kinesis, a new service for processing huge volumes of streaming data in real time.

Much like Amazon AppStream, introduced on Wednesday, Kinesis has Amazon promising to take on the heavy processing workload and handle all of that costly work in the cloud.

Here, the payoff of that trade-off may be more evident, given just how valuable (and debatable) real-time data analytics can be.


In short, Kinesis buffers incoming data in a storage system with checkpoints, from which the data can be ingested into the Redshift cloud data warehouse.
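To make the buffer-and-checkpoint idea a little more concrete, here is a minimal, purely illustrative Python sketch. It is not the Kinesis API: the `ToyStream` class, its methods, and the `drain_to_warehouse` helper are hypothetical stand-ins, and a plain list plays the role of a warehouse such as Redshift. It only models the general pattern of an ordered, replayable buffer whose consumer records how far it has read, so a later run resumes where the last one stopped.

```python
from dataclasses import dataclass, field


@dataclass
class ToyStream:
    """Hypothetical in-memory stand-in for a buffered stream (not the Kinesis API)."""
    records: list = field(default_factory=list)      # ordered, append-only buffer
    checkpoints: dict = field(default_factory=dict)  # consumer name -> next index to read

    def put(self, data):
        # Producers append; a record's position doubles as its sequence number.
        self.records.append(data)
        return len(self.records) - 1

    def read_from_checkpoint(self, consumer, limit=100):
        # Resume from this consumer's last checkpoint (0 if it has none yet).
        start = self.checkpoints.get(consumer, 0)
        return start, self.records[start:start + limit]

    def checkpoint(self, consumer, next_index):
        # Record progress only after a batch has been handled.
        self.checkpoints[consumer] = next_index


def drain_to_warehouse(stream, consumer, warehouse, batch_size=2):
    """Hypothetical loader: copy buffered records into `warehouse` batch by batch."""
    while True:
        start, batch = stream.read_from_checkpoint(consumer, batch_size)
        if not batch:
            break
        warehouse.extend(batch)  # stand-in for a bulk load into a data warehouse
        stream.checkpoint(consumer, start + len(batch))


stream = ToyStream()
for event in ["txn:1", "txn:2", "tweet:3"]:
    stream.put(event)

warehouse = []
drain_to_warehouse(stream, "loader", warehouse)
stream.put("gps:4")  # new data arrives after the first load
drain_to_warehouse(stream, "loader", warehouse)
print(warehouse)  # ['txn:1', 'txn:2', 'tweet:3', 'gps:4']
```

Because the checkpoint only advances after a batch is stored, a loader that crashed mid-batch in this toy model would re-read that batch on restart rather than lose it, at the cost of possible duplicates.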

Users can store and process terabytes of data each hour from hundreds of thousands of sources, including (but not limited to) financial transactions, social media feeds, and location-tracked events.

According to AWS, these business customers (and Amazon, for that matter) can not only get useful data back instantly but also write applications, generate alerts, and make other decisions in near real time.

AWS executives argued that, until now, most big data processing has been done in batches with a hodgepodge of open source tools. During Thursday's keynote at AWS re:Invent 2013, Amazon CTO Werner Vogels also dismissed the buzzphrase "Internet of Things," while suggesting that the trend behind it is far more significant than is often realized.

Users can also move this data within the AWS cloud among the Simple Storage Service (S3), Elastic MapReduce (EMR), and Redshift.

Amazon asserted that Kinesis can scale to support applications and data streams of any size while also replicating data across multiple availability zones.

Kinesis launches today, albeit only as a limited preview, with pay-as-you-go pricing.

Image via the Amazon Web Services blog