Pepperdata Code Analyzer for Apache Spark

What can Pepperdata Code Analyzer for Apache Spark do and where does it sit in Pepperdata's product line?

1 of 4 Pepperdata

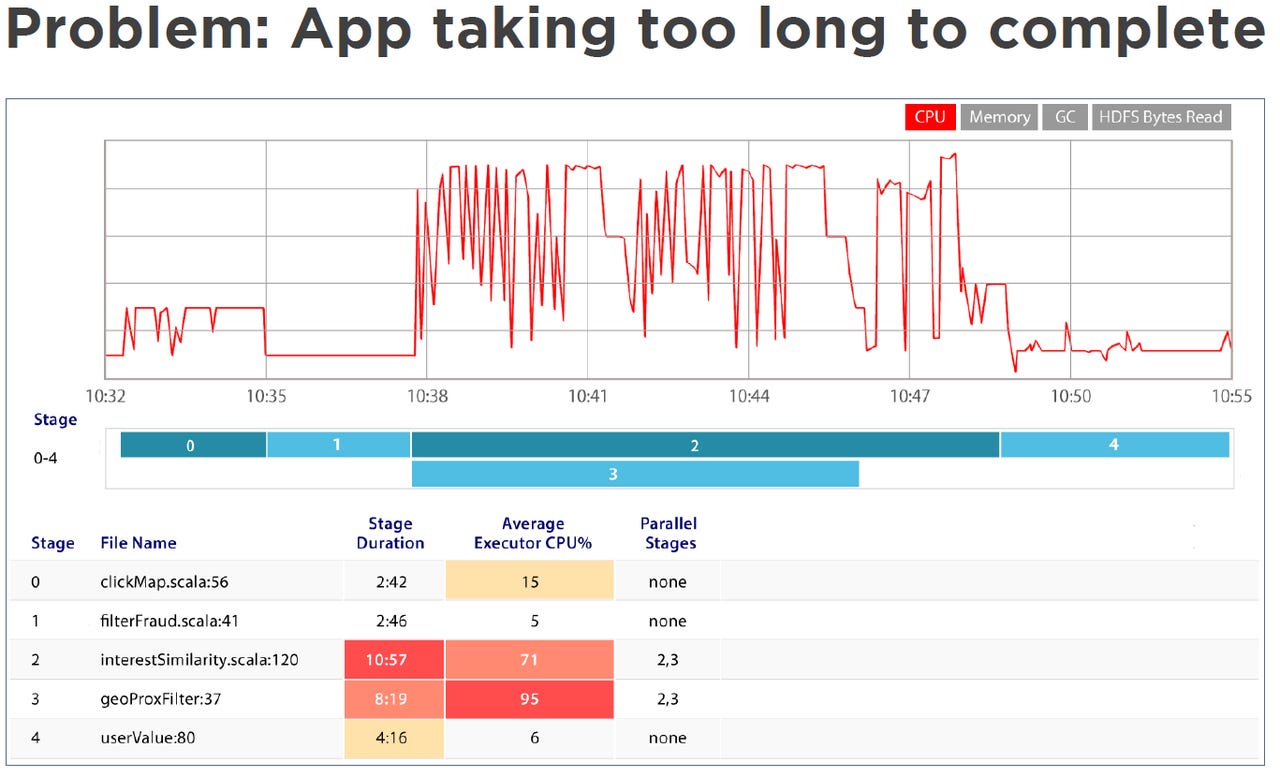

Breaking application execution down into five phases (0-4) can help in spotting runtime bottlenecks.

In this case, the default Spark UI (bottom) makes it look as if phase 3 is the bottleneck, using a lot of CPU.

Pepperdata's custom UI (top), however, makes it clear that phases 2 and 3 run in parallel, and that phase 2 keeps running and using CPU after phase 3 completes.


Inconsistent runtimes

Two runs of the same application show inconsistent runtimes.


Cluster weather

This is due to cluster weather: in the second run, less memory is available to the application because other applications are running at the same time.


Pepperdata product line

Pepperdata Code Analyzer for Apache Spark is aimed at engineers and complements Pepperdata's existing line of products.
