Nvidia GauGAN takes rough sketches and creates 'photo-realistic' landscape images

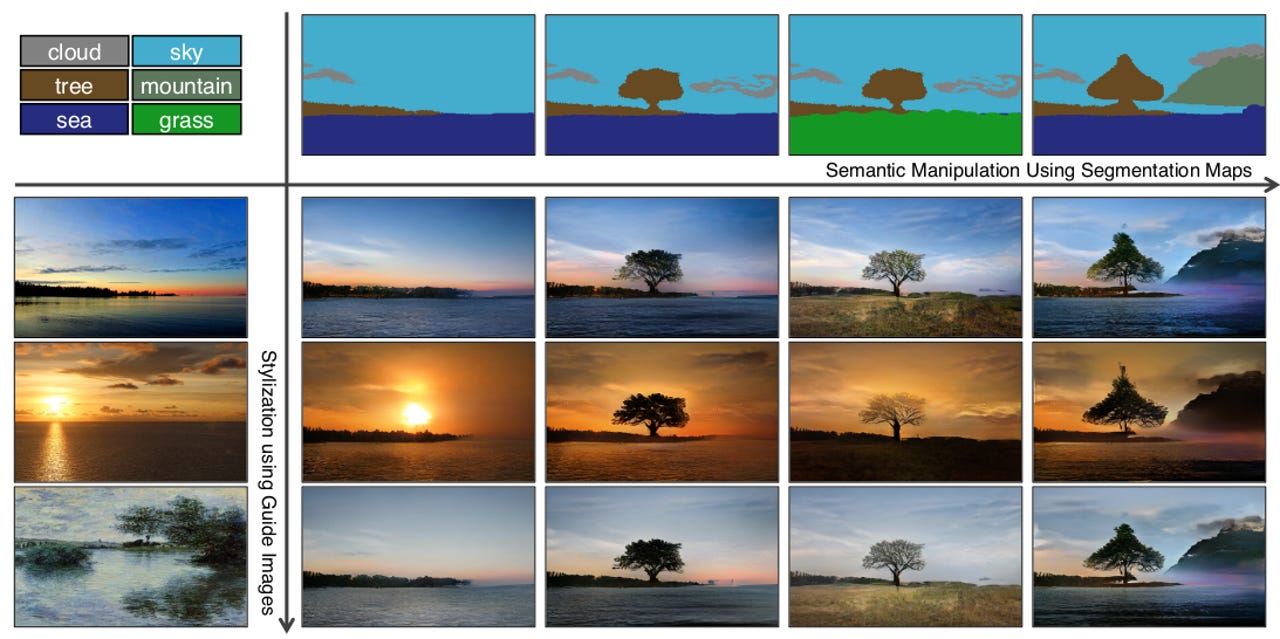

Researchers at Nvidia have created a new generative adversarial network model for producing realistic landscape images from a rough sketch or segmentation map, and while it's not perfect, it is certainly a step towards allowing people to create their own synthetic scenery.

The GauGAN model is initially being touted as a tool to help urban planners, game designers, and architects quickly create synthetic images. The model was trained on over a million images, including 41,000 from Flickr, with researchers stating it acts as a "smart paintbrush" as it fills in the details on the sketch.

"It's like a colouring book picture that describes where a tree is, where the sun is, where the sky is," Nvidia vice president of applied deep learning research Bryan Catanzaro said. "And then the neural network is able to fill in all of the detail and texture, and the reflections, shadows and colours, based on what it has learned about real images."

In a demonstration to journalists at Nvidia's GTC conference on Monday, the researchers showed GauGAN in action: rendering images in real time, switching the styling between different seasons, and showing how water reflected and interacted with the landscape.

The machine used for the task contained a recently released Titan RTX. However, Catanzaro said it could be possible to run the same application on a CPU if the image was rendered only once every few seconds, or created on demand.

"This technology is not just stitching together pieces of other images, or cutting and pasting textures," Catanzaro said. "It's actually synthesising new images, very similar to how an artist would draw something."

In a research paper to be given as an oral presentation at the CVPR conference in June, the researchers said human testing via Mechanical Turk showed GauGAN's images were preferred to those generated by the CRN, pix2pixHD, and SIMS algorithms, although in the category of cityscapes, it barely beat out the latter two techniques. Compared to other algorithms, Catanzaro said GauGAN had a better vocabulary, and required fewer parameters.

At the end of 2018, a team of researchers including Catanzaro presented a paper on predicting future frames of video for synthesised city scenes.

Nvidia also used generative adversarial networks to create artificial brain MRI imagery, to help overcome a lack of brain imagery to train networks on.

"Diversity is critical to success when training neural networks, but medical imaging data is usually imbalanced," Hoo Chang Shin, a senior research scientist at Nvidia, explained to ZDNet in September. "There are so many more normal cases than abnormal cases, when abnormal cases are what we care about, to try to detect and diagnose."

Disclosure: Chris Duckett travelled to GTC as a guest of Nvidia.
