VMware ESXi

ESXi is the latest version of VMware's industry-leading hypervisor. Although you can still buy its big brother, ESX Server, ESXi is now the main front-line VMware hypervisor. Rather than choosing between ESX Server and ESXi, most buyers should install ESXi and pick a suitable licence. More detail on the differences between the two products is available on VMware's web site.

The entry-level ESXi is available free of charge for servers with two processors, each one with up to six cores. Although it makes a solid platform for running virtual machines (VMs), it doesn't provide datacentre automation capabilities such as High Availability (HA) or Distributed Resource Scheduling (DRS). HA automatically restarts a failed VM on an alternative ESX server, while DRS automatically balances the workload of several VMs across a farm of ESX servers. If you need these features, you must buy one of a range of licences for ESXi and VMware VirtualCenter Server (VCS). ESXi licences can be bought individually or in one of three bundles — Foundation, Standard and Enterprise. Licences for VCS cost extra, and can be bought as part of bundles called Foundation, Standard and Enterprise Accelerator Kits, which also include ESXi licences.
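To make the difference between HA and DRS concrete, here is a minimal Python sketch of the two behaviours described above. It is a toy model only, not VMware's algorithm: the `Host` class, the abstract "load units" and the placement rules (restart on the host with most free capacity, move the VM that best narrows the gap) are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """A hypothetical ESX host with a capacity in abstract load units."""
    name: str
    capacity: int
    alive: bool = True
    vms: dict = field(default_factory=dict)  # VM name -> load

    def load(self) -> int:
        return sum(self.vms.values())

    def free(self) -> int:
        return self.capacity - self.load()


def ha_restart(hosts):
    """HA-style recovery: restart VMs from failed hosts on surviving hosts."""
    survivors = [h for h in hosts if h.alive]
    if not survivors:
        return
    for failed in (h for h in hosts if not h.alive):
        # Place the largest VMs first so they are not squeezed out later.
        for vm, load in sorted(failed.vms.items(), key=lambda kv: -kv[1]):
            target = max(survivors, key=Host.free)
            if target.free() < load:
                continue  # no surviving host has room; this VM stays down
            target.vms[vm] = load
            del failed.vms[vm]


def drs_balance(hosts, max_moves=10):
    """DRS-style balancing: shift VMs from the busiest to the idlest host."""
    live = [h for h in hosts if h.alive]
    if len(live) < 2:
        return
    for _ in range(max_moves):
        busiest = max(live, key=Host.load)
        idlest = min(live, key=Host.load)
        gap = busiest.load() - idlest.load()
        # A move only helps if the VM is smaller than the gap and fits.
        movable = [(vm, load) for vm, load in busiest.vms.items()
                   if 0 < load < gap and load <= idlest.free()]
        if not movable:
            break
        # Pick the VM that leaves the two hosts closest to equal.
        vm, load = min(movable, key=lambda kv: abs(gap - 2 * kv[1]))
        idlest.vms[vm] = load
        del busiest.vms[vm]
```

In this model, marking a host as `alive=False` and calling `ha_restart` empties it onto the survivors, while `drs_balance` evens out a lopsided farm. The real products also weigh memory, CPU reservations and admission-control policies, none of which is modelled here.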

Installation and setup

We tested ESXi in ZDNet UK's Labs using an HP ProLiant DL380 server fitted with two 2.8GHz Xeon CPUs and 4GB RAM. Our server had an embedded HP SmartArray 5i Ultra 320 SCSI RAID controller and a SmartArray 5304-256 Ultra 320 SCSI RAID card connected to seven 36.4GB SCSI disks. We configured two RAID 0 LUNs on the second RAID controller: the first was 40GB and the second 200GB.

Our server hardware is not brand-new, but it's very popular in datacentres, and we had no compatibility problems with our installation. Having said that, you need to check that your server hardware is on the VMware hardware compatibility list if you want to be sure of a successful installation. Although most modern server hardware is supported, a few very old or very recent servers may be missing.

The VMware software is very easy to get hold of. We downloaded an ISO image of ESXi and copied it to a CD. Installation was a matter of booting the ProLiant server from the CD, waiting about 5 minutes for it to load the Thin ESX Installer tool, and then making four keystrokes to begin copying the software, one of which was used to select the LUN on which ESXi would be installed. The installation took a further 90 seconds to finish.

We were impressed with the simplicity and speed of the installation process: ESXi is certainly less hassle to install than either ESX Server or Microsoft's Hyper-V.

However, the main difference between ESXi and ESX Server — and other hypervisors — is that ESXi does not use a console operating system to host the hypervisor. In contrast, ESX Server uses Red Hat Linux to store and load the hypervisor software, and although VMware administrators will rarely touch the Red Hat console, from time to time they may need to do so — for example, to move files used by VMs to appropriate locations, or (more rarely) to configure the IP address settings used by the service console. Similarly, Hyper-V sits on top of Windows. Some administrators may like the idea of managing their hypervisor via Red Hat or Windows, but in practice we suspect most will prefer the simplicity of the ESXi approach.

With ESXi, the few settings that must be entered on the hypervisor console are made using a simple text-based menu; other operations, such as moving VM-related files, are carried out using remote management tools such as VMware Virtual Infrastructure Client (VIC) and VMware Infrastructure Remote Command Line Interface (RCLI), both discussed below. A console operating system is also a significant potential point of failure. Overall, its absence makes ESXi easier to use, and means it requires less maintenance and patching than an ESX Server or Hyper-V based system.

Following the first boot of our ESXi server, the ProLiant DL380 screen showed the simple text-based menu mentioned above, which allowed us to do some basic configuration of the ESXi environment. For example, we could configure suitable networking parameters and set an administrator password.

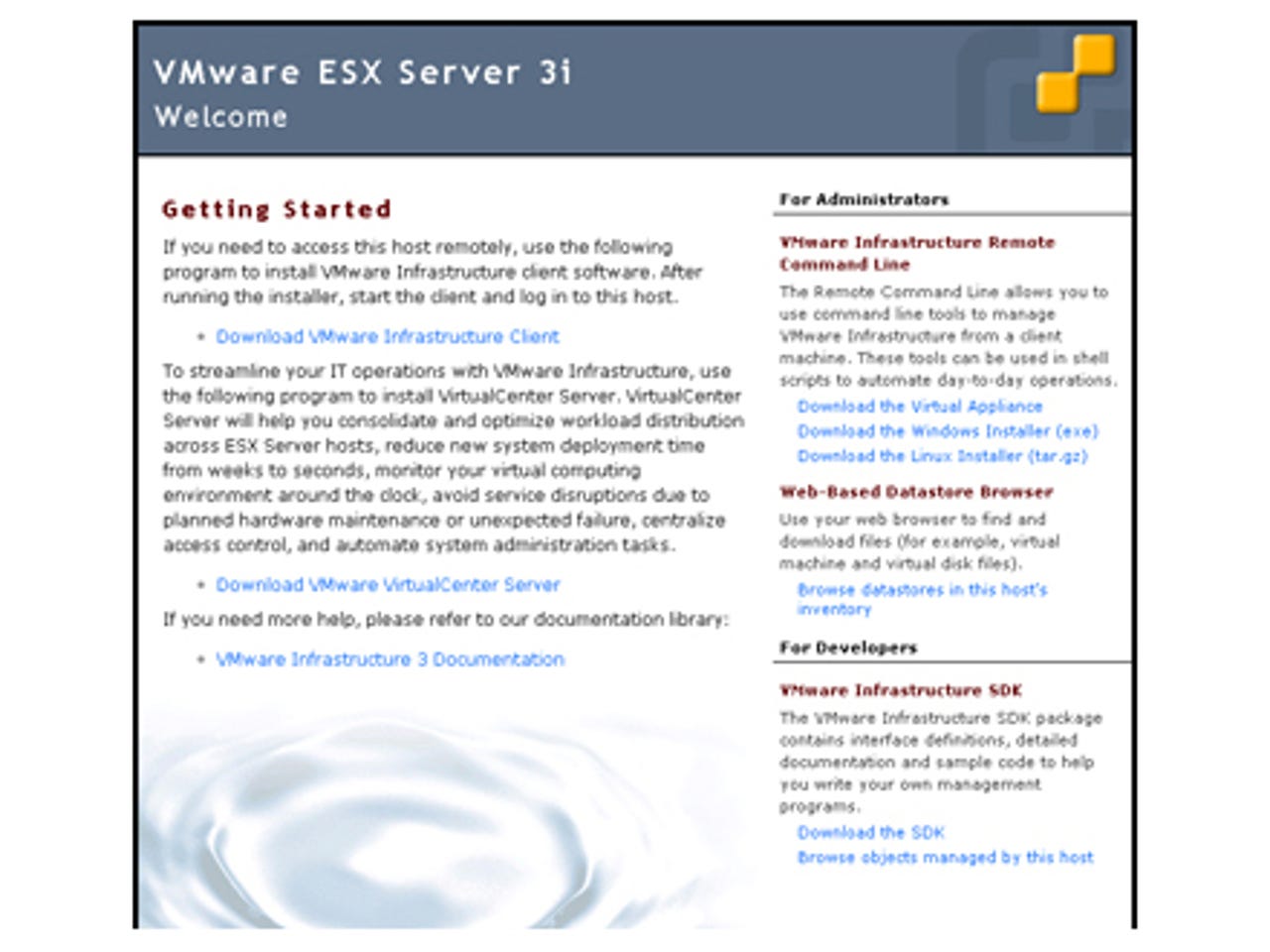

The ESXi welcome page has links for downloading Virtual Infrastructure Client (VIC), which you can use to add virtual machines (VMs) to the server.

It took a moment to activate these changes, whereupon we could make our initial network connection to the hypervisor using a web browser on our management server. This took us to the ESXi welcome page (see above), which had links for downloading VIC, the tool most people will use to add VMs to their server. Once VMs have been added, VIC is also used to start and stop them, and to configure all the other settings used in the virtual environment, such as where the VMs are stored and which networks they are connected to.

The welcome page also has a link to download VCS (VirtualCenter Server), but you'll need to buy licences to use this after the 60-day trial period. The main benefit of the entry-level VCS version is that it allows multiple ESX systems to be managed from a single graphical console. VCS Standard edition also provides the automated datacentre HA feature, while VCS Enterprise includes DRS and VMotion. The latter enables VMs to be moved from one ESX server to another without incurring any downtime. VMotion is considered one of VMware's crown jewels because it means VMs can be kept running while being moved to another host server, allowing the original hypervisor software or even the host hardware to be upgraded or replaced without any downtime. A similar feature, allowing a VM to be moved to a different host with only a slight pause to the VM operating system, can be accomplished without VCS using PowerShell and RCLI scripts.
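The edition-to-feature mapping above can be expressed as a small lookup. The Python sketch below is illustrative only: it assumes each edition includes everything in the edition below it, which the feature list implies but does not state outright, and the feature labels and the `minimum_edition` helper are our own names rather than anything from VMware.

```python
# Features each VCS edition adds, as listed in this review.
# Assumption: editions are cumulative, lowest tier first.
EDITIONS = [
    ("entry-level", {"multi-host management"}),
    ("Standard", {"HA"}),
    ("Enterprise", {"DRS", "VMotion"}),
]


def minimum_edition(required):
    """Return the lowest edition covering every required feature, or None."""
    available = set()
    for name, added in EDITIONS:
        available |= added
        if set(required) <= available:
            return name
    return None
```

For example, asking for `{"HA"}` returns `"Standard"`, while asking for `{"HA", "VMotion"}` returns `"Enterprise"`. A feature not listed in the table returns `None`.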

We downloaded and installed VIC. The ESXi welcome page also had a link to download the RCLI tools. This led to a page on the VMware web site from where we could download the tools as software for Windows and Linux, as well as in a Virtual Appliance (VA, which is a preconfigured VM). The VA format is not recommended for production environments, but as we didn't want to install too much software on our management system we took this option.

Virtual Infrastructure Client (VIC) has a link for importing a Virtual Appliance (VA), although this didn't work in our test setup.

Once we had downloaded the VA, we clicked a link in VIC to 'Import an appliance' (see above). Unfortunately this link didn't work in our test setup, so we used VIC's File > Virtual Appliance > Import option instead. This started a wizard that imported the appliance from our Windows desktop to the ESXi server.

We used the VA version of RCLI (Remote Command-Line Interface), which is a Linux-based virtual machine.

The VA version of the RCLI package is actually a Linux VM, which we booted by clicking on its 'Start' button shown in the VIC console. As this was the first time we had run it, we needed to click on a tab in the VIC console to show the Linux VM's screen (see above), and from there read and accept a licence agreement and enter a password for the RCLI system.

ESXi in operation

The RCLI allowed us to perform many of the operations available with the ESX Server 3 service console, such as starting and stopping VMs. A few of the popular service console commands required an update to work in the ESXi environment. For example, esxtop has been replaced by resxtop, and useradd is replaced by vicfg-user.pl. However, RCLI commands can be used in scripts that run on ESXi and ESX Server 3.5 hosts. Thus RCLI provides a way to manage several ESX-based systems from a single workstation, so it should make it easier to manage ESX farms than using the older service console approach.
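As a rough illustration of managing several hosts from one workstation, the Python sketch below maps the two service console commands named above to their RCLI replacements and builds one command line per host. The host names are hypothetical, and we assume the tools accept the `--server` and `--username` connection options; check the RCLI documentation for your release before relying on them. The sketch only builds argument lists and runs nothing itself.

```python
# Service console commands and the RCLI replacements named in this review.
RCLI_EQUIVALENTS = {
    "esxtop": "resxtop",
    "useradd": "vicfg-user.pl",
}


def rcli_name(command):
    """Return the RCLI replacement for a service console command, if any."""
    return RCLI_EQUIVALENTS.get(command, command)


def per_host_commands(command, hosts, username="root"):
    """Build one argument list per host for the RCLI version of a command.

    Assumes the --server and --username connection options; any
    command-specific arguments would still need to be appended.
    """
    tool = rcli_name(command)
    return [[tool, "--server", host, "--username", username] for host in hosts]


# Hypothetical host names, for illustration only.
commands = per_host_commands("esxtop", ["esxi-01.example.com", "esxi-02.example.com"])
```

Each entry in `commands` could then be handed to something like `subprocess.run` on a workstation with the RCLI tools installed. Note that only the command name is translated: the arguments accepted by `useradd` and `vicfg-user.pl` differ, so scripts need adapting by hand.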

For the most part, though, VIC makes it very easy to manage all aspects of an ESXi server, and most system administrators will normally use VIC to manage VMs running on ESXi systems. VIC is also used to manage ESX Servers, either operating in isolation or as part of a VirtualCenter Server farm. Anyone familiar with VirtualCenter or with standalone ESX Server systems will find the switch to ESXi extremely easy. In fact, you need to dig deep into the darkest corners of VIC to find any significant differences between managing ESXi and ESX servers.

VIC makes it very easy to manage all aspects of an ESXi server.

One difference worth mentioning is that the LUN holding the ESXi software was formatted with the VMFS 3.31 file system, and all but 500MB of this disk's capacity was available for us to store VMs. Traditional ESX Server systems store the hypervisor in the Linux Console Operating file system, which is not used to store VMs. The Thin ESX Installer had also formatted our empty 200GB LUN with VMFS 3.31, but had left the 67GB LUN containing our factory installation of Windows untouched. To all intents and purposes, though, ESXi provides the same capabilities as the older ESX Server software. Consequently, anyone familiar with the older VMware product range will find ESXi straightforward to install and use.